Margaret Hamilton’s impact on computing would be hard to overstate. For one thing, I nearly wrote “impact on software engineering” but apparently that’s a term she had a lot to do with promoting, during her tenure at NASA.

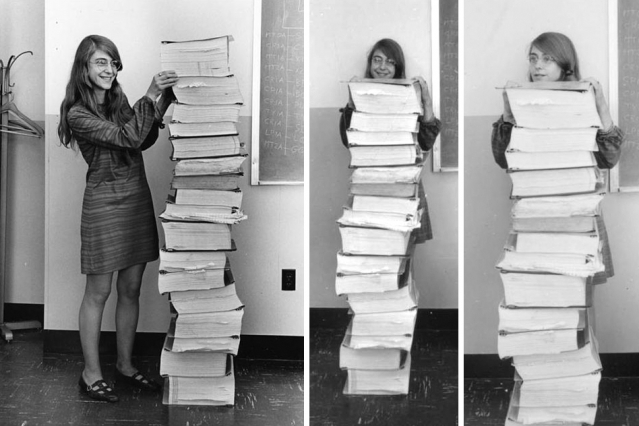

Margaret Hamilton ~1968 (age ~32)

Hamilton did see computing as an engineering discipline – she had early experience that made her understand that software is not just coding a “pong” game; reliability is not just an option when you’re coding the control software for a manned lunar rocket. I wish the Internet Of Things programmers of today understood what Margaret Hamilton understood in the 1960s.

This was the “bearskins and flint knives” era of computing: magnetic core, paper punch tape, 24k of memory. Programmers wrote code in pencil, then typed it in, fixed the syntax errors, and tested it. Application frameworks were nonexistent; you wrote each application from the ground up and programmers were still in the process of figuring out what parts of a software system were common and shared, versus project-specific.

Richard Feynman, writing about the software in the Challenger disaster,* said:

The software is checked very carefully in a bottom-up fashion. First, each new line of code is checked, then sections of code or modules with special functions are verified. The scope is increased step by step until the new changes are incorporated into a complete system and checked. This complete output is considered the final product, newly released. But completely independently there is an independent verification group, that takes an adversary attitude to the software development group, and tests and verifies the software as if it were a customer of the delivered product. There is additional verification in using the new programs in simulators, etc. A discovery of an error during verification testing is considered very serious, and its origin studied very carefully to avoid such mistakes in the future. Such unexpected errors have been found only about six times in all the programming and program changing (for new or altered payloads) that has been done. The principle that is followed is that all the verification is not an aspect of program safety, it is merely a test of that safety, in a non-catastrophic verification. Flight safety is to be judged solely on how well the programs do in the verification tests. A failure here generates considerable concern.

250,000 lines of code stacked up against Margaret Hamilton. Windows runs to 6 million LOC.

Margaret Hamilton’s work was significant not simply because she was responsible for the code for the command module and the LEM, but because she figured out how reliable software is developed. The methods that she helped pioneer are the methods that are used today in the development of critical control systems for aircraft and industrial systems. Next time you’re napping at high altitude in some modern jet, you may spare a thought for how casually you’re trusting your life to complex flight control software, written by very fallible humans. Reliability became Hamilton’s life’s work.

The Apollo missions were flown before there were operating systems that aggregated common/core system services behind standardized programming interfaces.** The spacecraft ran applications that were loaded and unloaded specifically from punch-tape: now it’s time to load your “how to land” software! Each piece of software was basically a control-loop that monitored multiple inputs and outputs, and had to prioritize where it was spending its CPU cycles. Today that’s all done automatically in the bowels of an operating system (it’s called the process scheduler, in most O/S now), but the software flying the Apollo missions had to figure that all out for itself, using a technique called pre-emptive scheduling. That’s basically like the way iOS or a smartphone appears to work: whatever app is at the “top” of your screen has most of the CPU’s attention, and all of the user inputs and interactions, with the home/attention button serving as a wakeup to the scheduling executive.
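To make the scheduling idea concrete, here is a minimal sketch in C of the core decision a scheduling executive makes: out of the jobs that have work to do, run the most urgent one. The `Job` struct, its fields, and `pick_next_job` are hypothetical names invented for illustration; this is not the Apollo flight code, just the general shape of the technique.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical job record; names and fields are illustrative only
   and are not taken from the Apollo flight software. */
typedef struct {
    const char *name;
    int priority;   /* larger number = more urgent */
    int ready;      /* nonzero when the job has work to do */
} Job;

/* Return the index of the ready job with the highest priority,
   or -1 when nothing is ready. Ties go to the earliest entry. */
int pick_next_job(const Job *jobs, size_t count)
{
    assert(jobs != NULL || count == 0);
    int best = -1;
    for (size_t i = 0; i < count; i++) {
        if (!jobs[i].ready)
            continue;               /* idle jobs never get the CPU */
        if (best < 0 || jobs[i].priority > jobs[best].priority)
            best = (int)i;
    }
    return best;
}
```

A control loop would call `pick_next_job` every time a job finishes or a timer fires, so that a newly urgent job (say, guidance) gets the CPU ahead of a display update.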

Hamilton realized that part of system design was figuring out special cases for where the system needed to deal with something expected, as well as figuring out general cases where the system encountered something unexpected, but had to do the right thing. At one point during the Apollo landing, the system began spuriously spitting a distracting stream of error warnings, which suddenly cut off at the point where the system had been programmed to detect that it was in mid-landing and needed to shut off extraneous processing in order not to distract the pilot. The best programmers today still engage in such “defensive programming” – not just assuming things will go right, but predicting how they may go wrong, and having stable operations the code can fall back to when it gets into an unexpected state.
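One way to picture that kind of fallback is load shedding: when the system is asked to do more than it can, it drops the work that doesn't matter and keeps the work that does. The sketch below is in C, with a hypothetical `Task` struct, made-up cost units, and an invented `shed_load` function; it illustrates the general idea and makes no claim about how the Apollo software actually did it.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical task record; the cost units are invented for illustration. */
typedef struct {
    const char *name;
    int essential;  /* nonzero: must keep running no matter what */
    int cost;       /* made-up CPU cost units */
    int enabled;    /* nonzero while the task is scheduled */
} Task;

/* Disable non-essential tasks, from the end of the array backward,
   until the total cost of enabled tasks fits within capacity.
   Returns the resulting total cost. Essential tasks are never
   disabled, so the result can still exceed capacity. */
int shed_load(Task *tasks, size_t count, int capacity)
{
    assert(tasks != NULL || count == 0);

    int total = 0;
    for (size_t i = 0; i < count; i++)
        if (tasks[i].enabled)
            total += tasks[i].cost;

    for (size_t i = count; i > 0 && total > capacity; i--) {
        Task *t = &tasks[i - 1];
        if (t->enabled && !t->essential) {
            t->enabled = 0;         /* drop the extraneous work */
            total -= t->cost;
        }
    }
    return total;
}
```

The design choice worth noticing is that the overload case is planned for in advance: the code does not try to guess why it is overloaded, it just falls back to a known-good subset of work.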

After the space program, Hamilton went on to start a business oriented toward “Higher Order Software”.*** She tried to teach other people how to do it right. The idea of modular software developed with cooperating function and architectural specifications lives on. Hamilton’s ideas co-evolved with general systems theory – the idea that architecture and systems could be decomposed and reasoned about bottom-up and top-down – which became part of the structured software development model of the 1980s; a model now seen as slow and ossified. Today’s “agile” and “rapid” development is a direct reaction to the costs and time involved in producing structured architectures. And, if you’ve used “Internet Of Things” devices, you can see the proof is in the pudding.

“From my own perspective, the software experience itself (designing it, developing it, evolving it, watching it perform and learning from it for future systems) was at least as exciting as the events surrounding the mission. … There was no second chance. We knew that. We took our work seriously, many of us beginning this journey while still in our 20s. Coming up with solutions and new ideas was an adventure. Dedication and commitment were a given. Mutual respect was across the board. Because software was a mystery, a black box, upper management gave us total freedom and trust. We had to find a way and we did. Looking back, we were the luckiest people in the world; there was no choice but to be pioneers.”

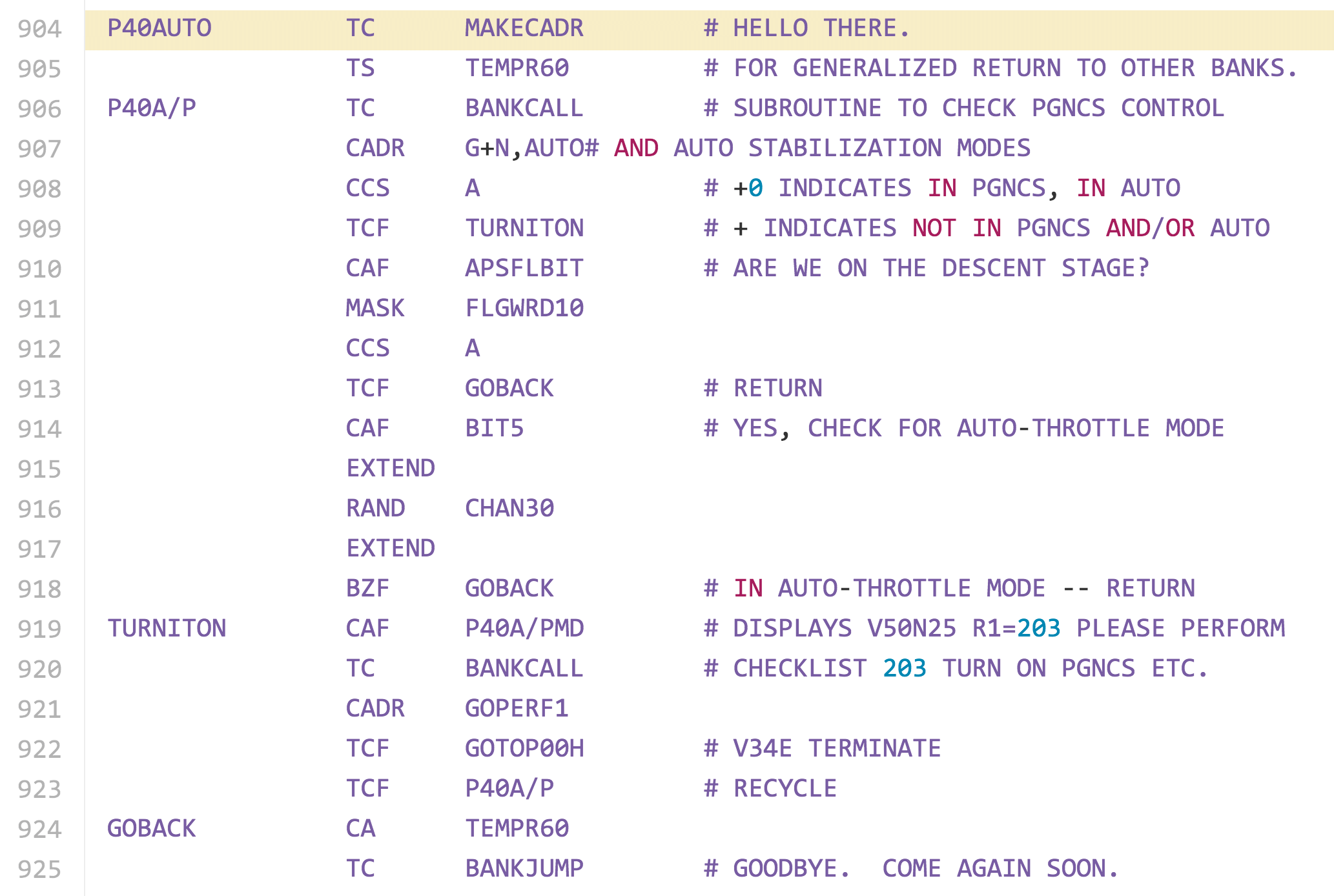

The source code for the moon control software has recently been published on GitHub.

Hardcore of Yore

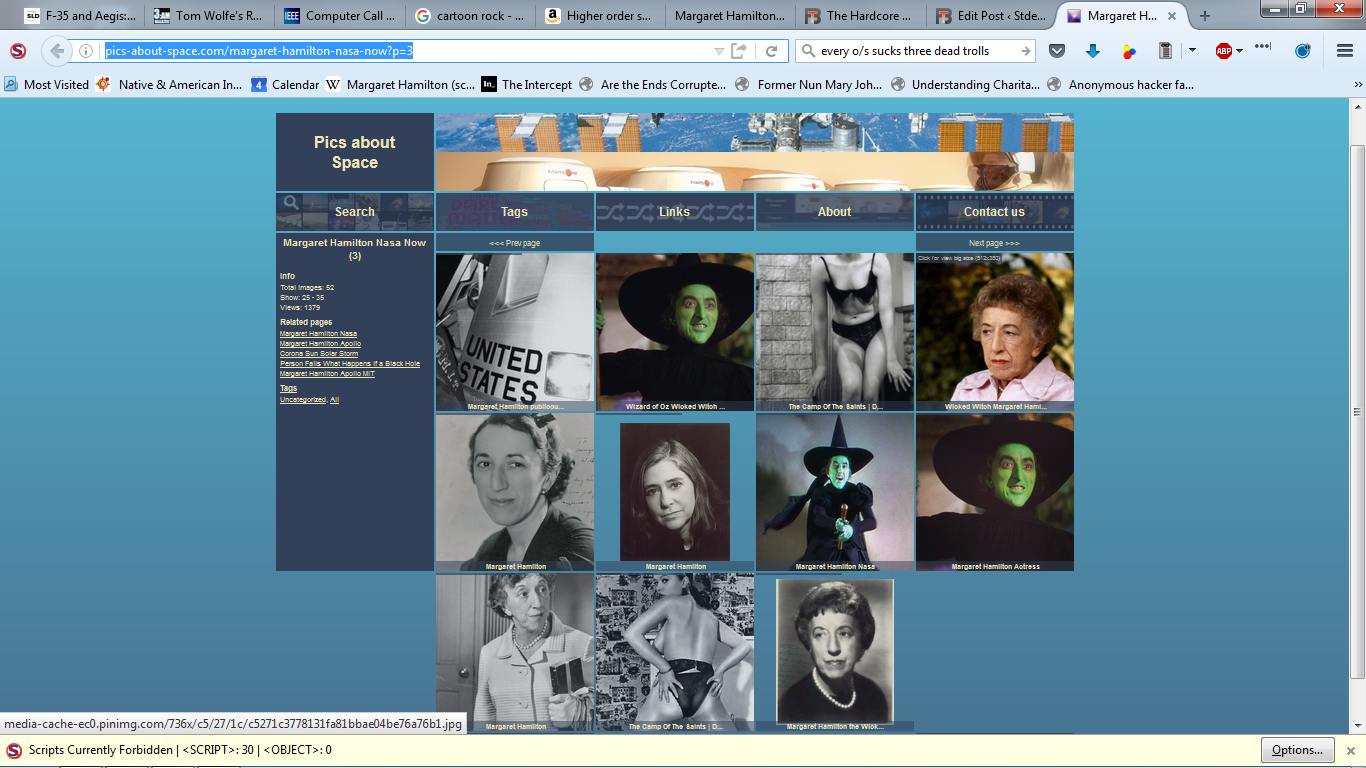

On a related, but less inspiring topic: boy’s club misogyny in computing. When I was looking for decorator images of Margaret Hamilton, I stumbled upon what appears to be an attempt to stuff image-search algorithms with “wicked witch” pictures.

What is wrong with people?

I do not understand the haters. I’m fairly sure that none of them will ever accomplish a fraction, in their lives, of what Margaret Hamilton has done. Maybe that’s their problem.

WIRED: Her Code Got Humans On The Moon – and Invented Software Itself

Margaret Hamilton: Higher Order Software – A Methodology For Defining Software (Amazon)

Margaret Hamilton: Universal Systems Language for Preventative Systems Engineering

(* Feynman was generally critical about safety in most of the crucial systems of the space program in the 1980s; the only aspect where he seemed to approve was the flight control software – Margaret Hamilton’s legacy.)

(** In that sense, an operating system is just a form of code re-use.)

(*** This is a typical Hamiltonian play on words. “low level code” usually refers to assembler. “High-level languages” usually refers to a compiler/linker or interpreter/compiler that provides a more human-language-like interface to programming. Hamilton is implying that there is always a higher level of both maturity and abstraction.)

Every O/S Sucks, by Three Dead Trolls in a Baggie

Feeeeeeeeeeeemales.

They’re probably related to the haters that deny the role of women in genetics, too.

Margaret Hamilton is an overloaded function, which I’m inclined to think the image search engines don’t understand. I’d have to give up my gay card if I wasn’t able to connect the identifier with the Wicked Witch in “The Wizard of Oz”.

Thanks for this, I had never heard of the “recent” Margaret Hamilton, I only knew of the “oh, what a world!” one.

Too bad that she didn’t work for Morton-Thiokol, they could really have used a mind like that.

“Bearskins and stone knives” – I remember that episode!

I’m guessing this was a joke? With two different people with the same name, spelled the same way, the pictures will obviously get mixed together.

Thanks for the article — I didn’t know anything about the non-witchy one either!

Margaret Hamilton was the name of the actress who played the witch in The Wizard of Oz.

I notice that both her names are dactyls.

Lunacy, moonacy,

Margaret Hamilton

Guided the LEM

By the force of her mind.

So Neil and her handiwork

Flight-codedependently

Built from her steps,

A great leap for mankind.

When I first heard that Margaret Hamilton worked in computing on the moon landings for NASA, it blew my mind a bit. I mean, she’d have been in her sixties, and here she was at the cutting edge still, decades after appearing in one of the most enduring films ever made. Good for her.

I was a little disappointed to learn it was a different Margaret Hamilton.

The reason I didn’t immediately assume it was a different Margaret Hamilton – that I didn’t assume an actress could be involved at a high level in some very cool and deeply techie stuff – was that I knew about Hedy Lamarr. Hedy Lamarr made a big impression on me when I was about six and I saw her play Delilah opposite Victor Mature’s Samson. Damn, she was beautiful. She also developed a radio guidance system for submarine torpedoes that used spread spectrum frequency hopping to prevent jamming (it says here). Pretty hardcore, and pretty yore.

“didn’t assume an actress could not be involved”, obvs.

sonofrojblake:

Lamarr was pretty badass too. A friend of mine made this image of her, which I rather like. If you look closely you can see bits from a lab book, and at least one patent number.

Thank you all for your corrections; I should have realized it was probably an artificial ignorance that was name-matching images!! That’s a relief.

Johnny Vector@#6:

It’s neat that you can do that!!

I was born without a poetry gland.

Marcus@#10:

Thanks. Cuttlefish hasn’t been posting a lot in the last months you see, so I have to do it myself. It’s hard work and nowhere near as good as his, but still fun!

[OT]

The best thing about using assembler on old architectures was the ability to write self-modifying code.

(I know it’s deprecated, but, O so elegant!)

John Morales@#12:

Well, technically setjmp and longjmp are self-modifying code, and arrays of pointers to functions are computed GOTOs. Higher level languages just implement their abstractions in ways that should be familiar to old machine programmers; they just automate it and make sure (in principle) that you can’t do it wrong. If you want to see a beautiful example of that, check out the loop unrolling in “Duff’s Device”. That was one of those “gee I wish I had thought of that” moments for most of us.
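For anyone who hasn’t seen them, here is a rough sketch in C of both tricks. `duff_copy` is a variation on Duff’s Device that copies memory to memory (my understanding is that the original wrote repeatedly to a single output register, so treat this as an adaptation), and `dispatch` uses an array of function pointers as a jump table. All of the names here are made up for illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Duff's Device style copy: the switch jumps into the middle of an
   unrolled do/while loop to handle the leftover bytes first, then
   the loop copies eight bytes per pass. */
void duff_copy(char *dst, const char *src, size_t count)
{
    if (count == 0)
        return;
    assert(dst != NULL && src != NULL);

    size_t passes = (count + 7) / 8;
    switch (count % 8) {
    case 0: do { *dst++ = *src++;
    case 7:      *dst++ = *src++;
    case 6:      *dst++ = *src++;
    case 5:      *dst++ = *src++;
    case 4:      *dst++ = *src++;
    case 3:      *dst++ = *src++;
    case 2:      *dst++ = *src++;
    case 1:      *dst++ = *src++;
            } while (--passes > 0);
    }
}

/* A table of function pointers indexed by opcode: the high-level
   cousin of a computed GOTO. */
static int op_add(int a, int b) { return a + b; }
static int op_sub(int a, int b) { return a - b; }
static int op_mul(int a, int b) { return a * b; }

typedef int (*BinaryOp)(int, int);
static const BinaryOp OPS[] = { op_add, op_sub, op_mul };

int dispatch(size_t opcode, int a, int b)
{
    assert(opcode < sizeof OPS / sizeof OPS[0]);  /* refuse to jump off the table */
    return OPS[opcode](a, b);
}
```

The bounds check in `dispatch` is the “make sure you can’t do it wrong” part; in assembler you would simply jump wherever the index took you.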

I saw some really scary code in xTank where Josh Osborne used setjmp/longjmp to implement threading. It actually worked better than some of the “official” threading mechanisms that came along, later.

I’m sure Margaret Hamilton had to put up with a bunch of “Wicked Witch” jokes in her time. Then Maxwell House ones, as the actress was their spokesperson during the ’70s.