All across America, campus protests are flaring against Israel’s war on Gaza. I have a story to contribute that hasn’t gotten as much coverage, but I think it’s even more important for what it reveals about the mindset of Israel’s defenders.

At my alma mater, Binghamton University, the Student Association (SA) passed a hard-fought resolution in support of the Boycott, Divestment, Sanctions (BDS) movement. According to Pipe Dream, the campus newspaper:

With the resolution’s passage, Binghamton University becomes one of the first SUNYs to pass student legislation divesting from institutions supporting Israel’s military campaign. It also directs the SA to recognize Israel’s military campaign in Gaza as a genocide and Israel as an apartheid state.

One of the authors of the resolution, Tyler Brechner, is himself Jewish. His words are worth quoting:

“Tonight, we have a political and moral question on the agenda — not a religious one,” Brechner said. “Opposition to Israeli apartheid and genocide is a necessary and just stance, not an antisemitic one. Jews are not a monolith — I do not speak for all Jews, and neither does the opposition to this legislation. Conflating the Jewish community with support of Israel, however, assumes a bigoted, antisemitic trope that all Jews must be loyal to Israel.”

I want to emphasize his last point, because it’s important. Israel isn’t equivalent to Judaism, and Judaism isn’t equivalent to Israel. Israel is the only Jewish state, but that doesn’t mean that the interests of Israel are, or should be, identical to the interests of all Jewish people wherever they may live.

If someone passed a resolution that called for boycotting all businesses owned by Jews as a way of protesting Israel, I’d agree that would be antisemitic. It partakes of the “dual loyalty” trope – a bigoted canard which claims that Jewish people are a fifth column that’s always more loyal to Israel than to the place where they live.

However, Jewish people and their allies aren’t immune to this either. The defenders of Israel commit the exact same fallacy when they argue that BDS and other movements protesting Israel’s actions are antisemitic, because to be against Israel is to be against Judaism.

Speaking as a person of Jewish ancestry, I see a clear difference. The BDS movement is motivated by opposition to the government and policies of the state of Israel. That’s different from antisemitism, which is hate directed at Jewish people simply for the fact of their being Jewish. Of course, Israel can change its policies, whereas Jewish people can’t change who they are.

To state the obvious, the Binghamton resolution is symbolic. Nothing in the present conflict will change because of it. Netanyahu and the IDF aren’t watching the outcome of a vote at an American state university.

However, some American defenders of Israel see this resolution as their cue to leap to the barricades. Angry feelings and over-the-top rhetoric are only to be expected. What you might not have expected is that elected officials would call for the First Amendment to be demolished so they can crack down on all dissenting opinions.

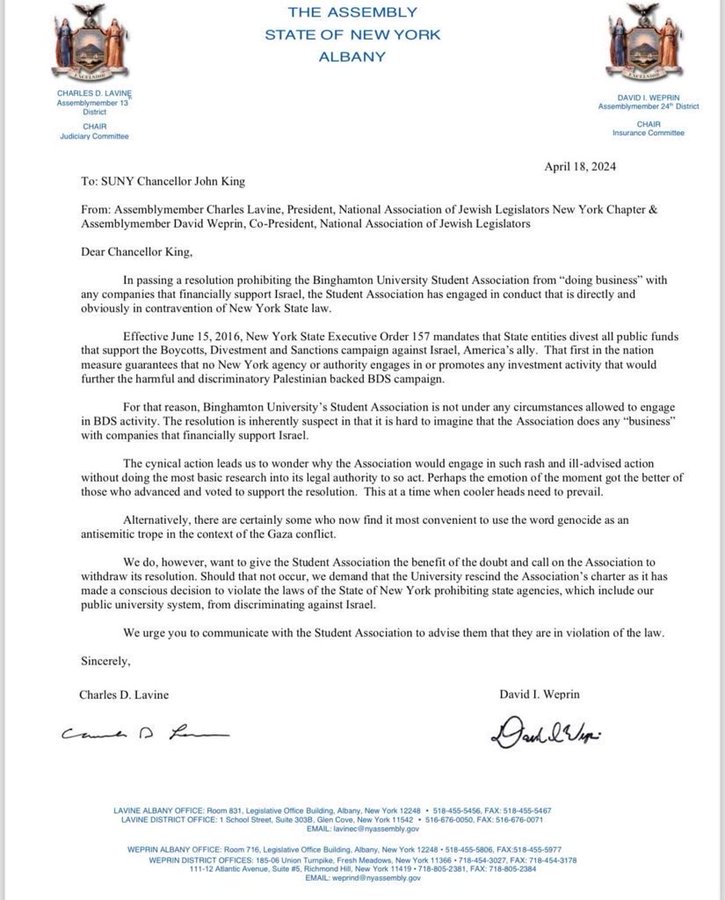

Two New York state assembly members, Charles Lavine and David Weprin, both Democrats, sent SUNY chancellor John King a blustery threat letter. It demands that the resolution be withdrawn and, failing that, calls on Binghamton University to suspend the SA’s charter.

It contains this breathtaking line: “Binghamton University’s Student Association is not under any circumstances allowed to engage in BDS activity.”

A little context here. New York doesn’t have an anti-BDS law, as some states do. But it does have an executive order, issued by former governor Andrew Cuomo, which bans state investment in entities that support the BDS campaign.

Anti-BDS laws in several states have been challenged in court, with mixed results. But to my mind, they’re obviously, blatantly unconstitutional. They’re an attempt by the state to mandate which political opinions people are permitted to hold and how they’re permitted to express them. This isn’t just unconstitutional, it’s backwards. In a democracy, voters tell the government what positions it should advocate, not vice versa. Imagine if Jim Crow Alabama had made it illegal to boycott segregated lunch counters.

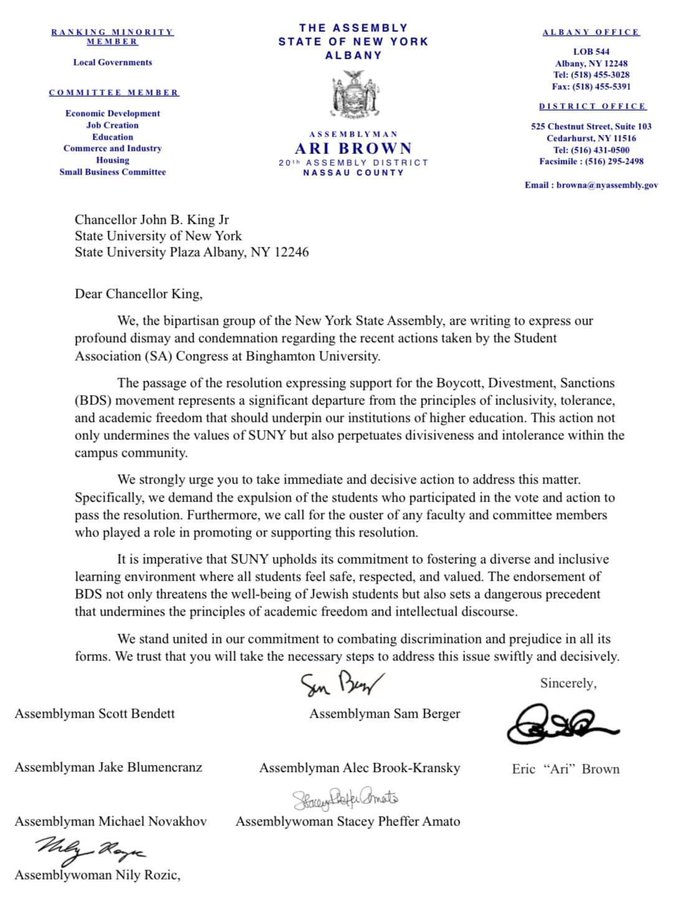

However, it gets worse. In a second letter, eight members of the New York state assembly (none of whom signed the first letter) called for the expulsion of Binghamton students who voted for the BDS resolution, and the firing of any faculty member who supported it. Yes, you read that right.

Here’s the relevant section of the letter:

The passage of the resolution expressing support for the Boycott, Divestment, Sanctions (BDS) movement represents a significant departure from the principles of inclusivity, tolerance, and academic freedom that should underpin our institutions of higher education. This action not only undermines the values of SUNY but also perpetuates divisiveness and intolerance within the campus community.

We strongly urge you to take immediate and decisive action to address this matter. Specifically, we demand the expulsion of the students who participated in the vote and action to pass the resolution. Furthermore, we call for the ouster of any faculty and committee members who played a role in promoting or supporting this resolution.

Or, to summarize:

“Support academic freedom and tolerance! Also, expel all students and fire all faculty who don’t think like we do!”

You might expect this kind of McCarthyist trash from hooded hatemongers, but these are elected legislators. They’re people, presumably, who have some familiarity with constitutional law. Yet they persist in the delusion that the First Amendment contains an Israel exception.

In all likelihood, these are empty threats. Binghamton University and the SA haven’t shown any intention of bowing to them. Still, even if these legislators only meant them as an over-the-top show of how committed they are to Israel, they’re playing with fire. It’s an incredibly dangerous message that free speech ends where they say it does.

If Israel’s defenders accepted that the war is unpopular and that protests are a natural response, that would be one thing. Instead, they’ve adopted a militant “no one is allowed to disagree with us” attitude, and they’re arguing the law should punish dissent.

In the past, they’ve used antisemitism as a cudgel to shut down any criticism of Israel’s actions. That tactic doesn’t appear to be working anymore. It may be a sign of panic that they’re now trying to outlaw their critics, and in some places, calling in the police to silence them by force.