In 2009 I was a consultant to a company that produces cop cameras and nonlethal weapons. My remit was specific: they were setting up a cloud service and wanted a security design expert to review their system.

My methodology was always simple: sit down with the senior systems engineers responsible for a component, and ask them “why do you think this is secure?” It’s a trick an old friend of mine (now a top-tier consultant at IBM) and I developed; there’s really only one answer that gets credit and that is, “what do you mean by ‘secure’ in this context?”

Integrity, audit, etc – it’s “security 101” stuff

In that context, what they were worried about was the integrity of the data being offloaded from cop-cams. My client was planning on offering a new technology: a cloud service that would store evidence from the cameras where it would be tamper-proof and safely stored. I proposed that my deliverables take the form of two reports: one containing technical recommendations, strategic recommendations, and system design notes; the other, something that could be shared with a potential customer to help explain the system and demonstrate that impressively-credentialed outside experts had examined it and felt it was good. I used to get marketing/sales requests like that sometimes, and I finally decided that I was alright with them as long as I stuck to the truth and kept things dry and straightforward.

At the time, there was no competition at all except for expensive in-house solutions. For example, LAPD had a pretty powerful system for collecting cop-cam data, allegedly involving a locked, secure room with all the video servers, a cleared system administrator, and a set of policies and procedures that outlined system governance. For a security design, a look at the procedures is highly desirable: the system was being designed to automate exactly that kind of setup, and knowing the customers’ procedures was a view-port into their (implied) expectations. I innocently asked for a copy; the request was relayed to the LAPD and turned down flat. As the process continued, one of the sales guys took me aside and said, “they don’t really have any idea what they are doing with the stuff. They have some low-paid guy who barely knows how to run the system, and they like it that way.” That was about the time that I realized that all the stuff I had been doing (data flows, cryptography, audit trails, etc.) needed to be filed under “solving parts of the problem that the customer does not care about.” In any IT project, that’s a great big red flag, because it means you are about to build a system that nobody wants.

I did some interesting work (some of my best, actually, and I wish I had been able to patent it; see the explanation below the divider) but I began to realize that the problem I thought I was working on was not really the problem that my client had. The police departments were deeply concerned about who had authorization to administer the system, but they wanted to maintain the ability to access the data untraceably. Finally, I realized that, although they were not coming directly out and saying it, they did not want a system that was reliable. They wanted a system that they could cause to fail in certain specific ways. If the body cam unit encrypted data and directly submitted it for ingestion, there wasn’t anyplace where the data could get – you know – “lost”. Worse, if someone tried to “lose” any of it, it would be completely obvious. A security system designer would think that was a desirable property of the system, but it turned out that integrity and audit were a problem for several police departments, not just LAPD. It doesn’t take a genius, at this point, to realize that the ability to cause a “system error” that resulted in data loss was a requirement.

My work done, I wrote my report and made a few oblique references to the need for a better requirements analysis to ensure alignment with market needs, or something like that.

There are a few other attributes of such a system that are notable:

- Since it is server-centric, it’s got a limited attack surface from the outside. The ability to interfere with the system is limited to the command options that the system exposes. If the system does not offer a “delete this file” option, then files can’t be deleted.

- When a system exposes limited command options, the system can maintain an audit trail that is highly specific to the commands that are allowed. If every file access is logged and attached to the user who did it, it’s still possible to copy a file somewhere else, but it’s easy to see who did it and when.

- The limited interface of the system can be used to control image operations – for example, the ability to drop “highlight circles” that brighten an image in a specific location to illustrate some part of a scene. Or the ability to blur out faces, or whatever. The system could tag such edited images as ‘altered’ and annotate who had performed the alteration.

- The system can provide limited-detail options for review, and can completely control the review process. For example, an officer might be able to review a down-sampled version of the video of an incident, in the form of thumbnail interval-streams, while a review committee might be able to access the full video. Naturally, all such operations would be logged in an audit trail.

- The system might never allow a full-resolution copy of the video to be downloaded, under any circumstances. If only down-sampled copies ever leave the system, nobody can edit one and pass it off as the original. Alternatively, all downloaded copies of a video might be watermarked by the system using cryptographic time-codes embedded in the data stream. Edited versions would be unable to duplicate the time-codes without the encryption key for the file, which would make it extremely obvious where an edit began or stopped, since the time-code would vanish.

- The system can enforce options that are tailored to an organization’s governance policy. I suggested that the system include a “governance engine” that offered a few options for enforceable work-flows.
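The first few attributes above (a short command list, per-role review options, and an audit trail that records every attempt) fit in a few lines. What follows is a minimal sketch, assuming a hypothetical role table and made-up command names (`view_thumbnails`, `view_full`, `redact_copy`). It is not the design from that review, and it is not evidence.com's interface. The audit log is hash-chained, so each entry commits to the one before it:

```python
import hashlib
import json
import time

# Hypothetical role table: each role gets a short, fixed list of commands.
# There is deliberately no "delete" or "edit original" command for anyone.
ROLE_COMMANDS = {
    "officer": {"view_thumbnails"},
    "review_committee": {"view_thumbnails", "view_full", "redact_copy"},
}

GENESIS = "0" * 64  # placeholder "previous hash" for the first log entry


def _digest(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the hash of the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, user: str, command: str, target: str) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        record = {"user": user, "command": command, "target": target,
                  "time": time.time(), "prev": prev}
        record["hash"] = _digest(record)  # computed before the "hash" key exists
        self.entries.append(record)

    def verify(self) -> bool:
        """Recompute every hash; editing, reordering, or removing a middle entry breaks the chain."""
        prev = GENESIS
        for rec in self.entries:
            body = {k: v for k, v in rec.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != rec["hash"]:
                return False
            prev = rec["hash"]
        return True


class EvidenceArchive:
    """The only way in is execute(); every attempt, allowed or not, is logged."""

    def __init__(self) -> None:
        self.log = AuditLog()

    def execute(self, user: str, role: str, command: str, target: str) -> str:
        if command not in ROLE_COMMANDS.get(role, set()):
            self.log.append(user, "DENIED:" + command, target)
            raise PermissionError(f"role {role!r} may not {command!r}")
        self.log.append(user, command, target)
        return f"{command} {target}"
```

A hash chain only helps if the latest hash is copied somewhere the archive operator can't rewrite. Otherwise, whoever controls the archive can truncate the tail or recompute the whole chain.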

When I finished the project, I felt pretty satisfied that it was an interesting system that represented an improvement over many of the other things that were out there, especially the in-house options. It was the in-house options that made me uncomfortable.

I was really naive.

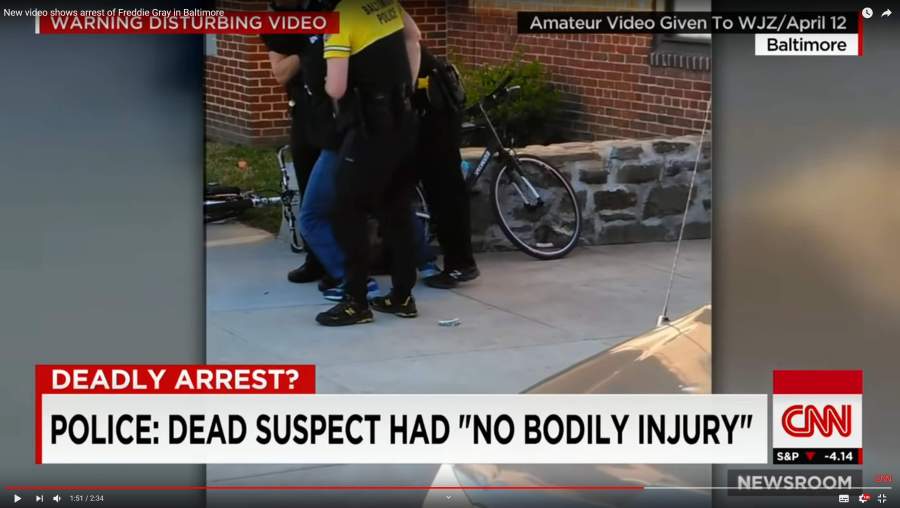

Recently, I have been listening to a podcast about the murder of Freddie Gray by the Baltimore Police Department. They don’t call it that, of course, but that’s what it is. They call it “The Killing of Freddie Gray” and, in doing so, they participate a little bit in the whitewash that sanitized the whole affair. I eventually intend to do a posting about the whole series, which is excellent, though it drags on a bit; I think it could be half as long. The whole podcast is an excruciating blow-by-blow of who was where and when, and there’s a great deal of discussion about what was shown on various police surveillance cameras, and where, and when. Spoiler alert: fucking cops broke Freddie’s neck before they put him in the van, and the whole situation unspooled on them when they realized they had a paraplegic (that was their fault) in the back of their van. Needless to say, they didn’t give a shit about the man they had just sent on a lingering death-spiral, but they were worried enough to put together a remarkably incompetent cover-up. A cover-up that the media and local politicians played along with.

The episode I listened to today was about the edits that were made to the surveillance videos. Imagine my surprise when it turned out that the system that was storing and manipulating the videos was the one I had worked on. From the sound of it, however, the customers got what they wanted: the ability to obscure, edit, loop – full video edit capabilities. That’s nice if you’re making a cloud video-editing solution, but for an evidence archiving system? It seems that the system’s requirements had changed.

The podcast describes how the police released videos as exonerating evidence, but when an analyst actually sat down and looked at them carefully, there were moments where people jump from place to place, and another spot where the camera’s time-code keeps moving forward though the action has frozen on a still frame. I have some experience editing video and working with digital media, and I can say categorically that such things do not happen as a result of any unintentional error. The podcasters have to, I suppose, use diminishing language to avoid directly accusing the Baltimore Police Department of deliberately fabricating evidence, but it’s pretty obvious what is going on.

Cam1A’s rotation pattern, for example, is at its most inconsistent during the 6 minutes Freddie and the various officers are on Presbury St.

This is a surveillance camera that is supposedly on automatic rotation, yet in the video it allegedly recorded, as released by the Baltimore Police Department, the rotation is jerky and pans around irregularly. Automatic cameras don’t do that, but edited video might.
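The cryptographic time-codes suggested earlier for downloaded copies would expose this kind of anomaly mechanically. Here is a minimal sketch under some assumptions. Each frame gets a tag computed with HMAC-SHA256 under a hypothetical per-file key that only the archive holds. A keyed MAC stands in for the “encryption key for the file”, and the names `tag_frame`, `watermark`, and `find_breaks` are illustrative. Each tag binds a frame's content to its index and time-code. A frozen frame whose time-code keeps advancing, or a cut that makes people jump from place to place, therefore shows up as a break:

```python
import hashlib
import hmac

FRAME_MS = 33  # assumption: roughly 30 frames per second


def tag_frame(file_key: bytes, index: int, timecode_ms: int, frame: bytes) -> bytes:
    """Keyed tag binding a frame's content to its position and time-code."""
    msg = (index.to_bytes(8, "big") + timecode_ms.to_bytes(8, "big")
           + hashlib.sha256(frame).digest())
    return hmac.new(file_key, msg, hashlib.sha256).digest()


def watermark(file_key: bytes, frames: list[bytes], start_ms: int = 0) -> list[tuple]:
    """Attach (index, time-code, frame, tag) to every frame of a released copy."""
    stream = []
    for i, frame in enumerate(frames):
        tc = start_ms + i * FRAME_MS
        stream.append((i, tc, frame, tag_frame(file_key, i, tc, frame)))
    return stream


def find_breaks(file_key: bytes, stream: list[tuple]) -> list[int]:
    """Positions where a tag fails to verify or the index/time-code sequence jumps."""
    breaks = []
    prev = None
    for pos, (i, tc, frame, tag) in enumerate(stream):
        ok = hmac.compare_digest(tag, tag_frame(file_key, i, tc, frame))
        if prev is not None and (i != prev[0] + 1 or tc != prev[1] + FRAME_MS):
            ok = False
        if not ok:
            breaks.append(pos)
        prev = (i, tc)
    return breaks
```

One caveat: the tags cover exact frame bytes, so any transcoding of the released copy breaks every tag. A real system would have to tag the copy it actually releases, or use a robust watermark. The sketch only shows the detection logic.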

Normally, if you wanted to do forensic analysis for video alterations, it’s pretty straightforward: you start with the original and compare it to the edited version. But the Baltimore police didn’t release the original. They released something they said was the video. And that’s where the 16-ton weight fell on me: naturally, I know the system that the video was stored in. It’s the one that I reviewed in 2009. The Undisclosed podcasters discuss a similar case:

https://podcasts.apple.com/us/podcast/undisclosed/id984987791?i=1000384739060 at 59:28 [un]

Is it possible to edit video evidence? The answer is “yes, it certainly is possible.” But can I prove it here in this case? Definitely not without the raw footage, and even then it would be difficult. Proving video evidence has been tampered with is much harder than doing the actual tampering. I fully expect that there will be those who think I am crazy to suggest such a thing, but I’m not the first to consider the possibility that the police would alter video evidence.

In fact, right now, in Albuquerque, New Mexico, the families of two people killed by officers of the Albuquerque Police Department are claiming just that. And they have damning allegations made by a whistle-blower on their side. Reynaldo Chavez was the central records supervisor and records custodian for the Albuquerque Police Department from 2011 to 2015. He has filed a whistle-blower lawsuit against the city of Albuquerque, alleging that his employment was terminated after he came forward with allegations that he was instructed to withhold information from attorneys and media outlets that filed requests under the state’s Inspection of Public Records Act. He also says that he knew of others in the department who were tampering with evidence, including in the 2014 to 2015 officer-involved shootings of Mary Hawkes and Jeremy Robertson. The Hawkes and Robertson families have filed civil rights lawsuits against the city. Chavez has signed a sworn affidavit and given testimony under oath explaining how body camera and surveillance footage in those shootings was altered, deleted, or hidden using an online evidence-management and cloud storage service called evidence.com.

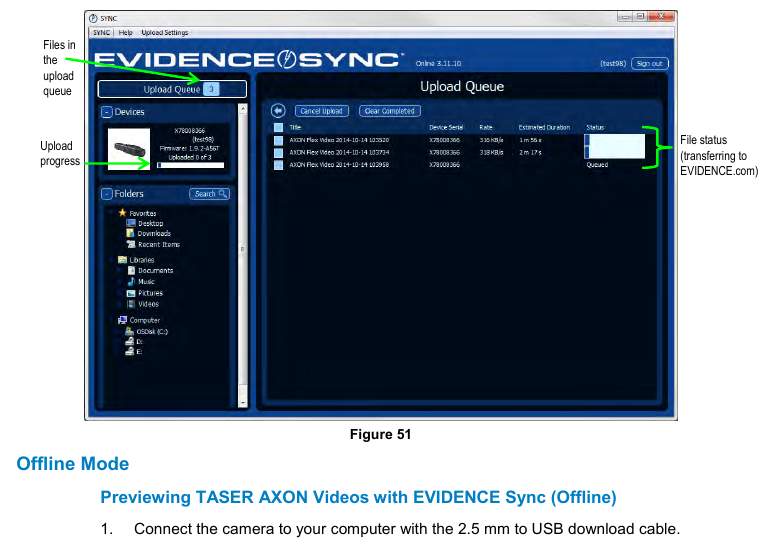

Evidence.com is owned by Taser, best known for manufacturing stun guns, but in recent years they have come to dominate the market for body cameras. Footage from their body cameras automatically uploads to evidence.com, where authorized users can assign the footage to a case along with other evidence. Taser has publicly marketed the body cameras and evidence.com as being equally beneficial to both police and civilians. But behind closed doors it’s been clear where the company’s loyalties lie. “We got into this space to try and change this whole conversation,” said Taser International CEO Rick Smith in February 2015, while giving a presentation at the California Highway Patrol headquarters in Sacramento. He was referring to the outrage over increasingly common officer-involved shootings and other allegations of misconduct and brutality. He continued, “my personal bias is – you guys get a ton of false complaints.”

Well, I guess “the customer is always right” – though, if the system maintains good audit trails and controls, it ought to be impossible to delete evidence or alter it. That tells me either that police departments are being stupidly sloppy, or that the system has changed profoundly from its original design. Or… there’s one other option, which is worse: the actual unedited videos are in the digital vault, un-tampered-with, and the custodians of the vault are choosing to knowingly allow police departments to present edited versions. Imagine if you knew that the Baltimore police’s posted version of the arrest of Freddie Gray was a carefully selected tidbit that didn’t show the moment when the cops broke his neck – imagine you knew that and did nothing, said nothing. The whole notion of body cameras is that they are impartial witnesses, but this system is not impartial at all; it’s concealing evidence. That’s criminal.

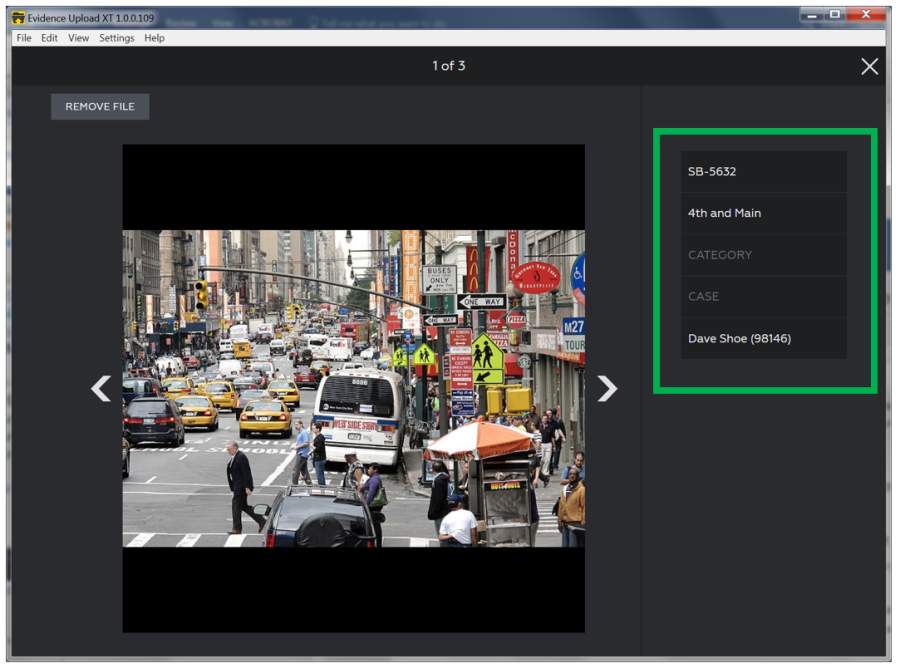

If you search for views of the command interface of the evidence archive, you’ll find it includes some commands I’d say are rather sketchy for an “evidence” system. Like “remove file.” Or “edit metadata.” If I were working as an expert for the team that is litigating this, I’d be subpoenaing evidence.com’s transaction logs: who edited what, and when? If the system doesn’t record that, it’s dodging basic IT Security 101 audit trails, which would be odd indeed for a digital evidence archive.

Remember what I said earlier about a limited command interface? Another element of that is that you oughtn’t provide a direct interface to review or access a device, because then device security becomes an issue.

Wow, it’s almost as though the system has morphed into one in which an officer can download their body camera to a laptop and review it, then decide whether or not to put the body camera under the wheel of their squad car and back over it a couple of times. ** As I listened to the podcast, I became more and more uncomfortable with what I was hearing.

Blade Runner: frame, pan right 5, and enhance

More than 5000 law enforcement agencies use evidence.com including police departments in San Diego, San Francisco, Fort Worth, Dallas, Chicago, and yes: Baltimore.

In an email, Detective Jeremy Silbert from BPD’s public information office explained that the department signed a contract with Taser to purchase their body cameras along with evidence.com cloud storage in May 2016, following a two-month pilot program in late 2015. So, post Freddie Gray, at least officially. As of April 2017, approximately 1,500 BPD employees have evidence.com accounts, as does the state’s attorney’s office. Though Silbert says, “It cannot be characterized as full access.”

I wonder if full access allows the “delete” button. A button which (in my professional opinion) should never have been part of the system design. But it does sound like there is some kind of governance process in place:

“Roles determine each users’ permissions or access and restrictions to various features and functions,” Silbert wrote. “None of our patrol officers have administrative privileges to evidence.com.” So what does full access to evidence.com look like? Here is how Chavez described some of the capabilities during his sworn deposition: “Again, it goes back to if you can upload on a case file, if you can upload an image, then you can actually go in, you can size the image – you can place it into an actual file. A mask… it can be a circle, it can be a square, and it’s like Photoshop, you can go into an actual frame and you can mask something so – and this is just a ‘for example’ – if the perpetrator is holding a gun, you can actually mask that where if it’s a perpetrator or an officer, you can mask it so now you can’t tell if that’s a gun or he’s holding a comb in his hand so it’s hard to differentiate.”

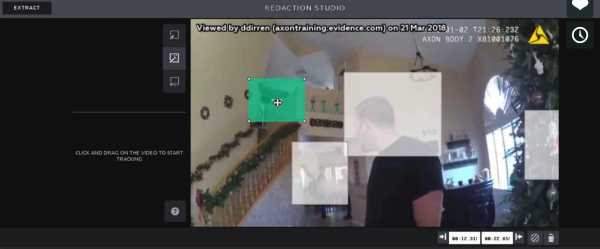

The manual for the redaction system is [here]. What Chavez is referring to is an auto-blurring capability that the system includes, so that you can presumably blur out the faces of people who are not involved.

It appears that the edits are performed on a copy, but I’m not entirely sure. If a security system designer who was concerned with the integrity of evidence had designed such a system, videos with redacted regions would carry a clear indication of the redaction, as we’d expect for evidence. Here’s what I imagine it might look like:

The system would, naturally, maintain an unredacted copy and a complete audit trail of all redactions. For sure, the redactions would be obvious; really obvious, as in my example.
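A minimal sketch of that redaction model follows. It assumes frames are plain grids of grayscale values, and the names `Redaction`, `RedactedCopy`, and `redact` are hypothetical. The original is never touched, the fill is hard-edged and uniform, and the copy carries its own record of who redacted what and why:

```python
from dataclasses import dataclass, field

Frame = list[list[int]]  # simplification: one grayscale frame as rows of 0-255 pixel values


@dataclass(frozen=True)
class Redaction:
    user: str
    frame_index: int
    box: tuple[int, int, int, int]  # (top, left, bottom, right); bottom/right exclusive
    reason: str


@dataclass
class RedactedCopy:
    source_id: str  # points back at the untouched original
    frames: list[Frame]
    redactions: list[Redaction] = field(default_factory=list)
    altered: bool = False


def redact(source_id: str, original: list[Frame], requests: list[Redaction],
           fill: int = 255) -> RedactedCopy:
    """Apply hard, uniform fills to a copy; the original frames are never modified."""
    frames = [[row[:] for row in frame] for frame in original]  # deep copy of pixels
    copy = RedactedCopy(source_id, frames)
    for r in requests:
        top, left, bottom, right = r.box
        for y in range(top, bottom):
            for x in range(left, right):
                copy.frames[r.frame_index][y][x] = fill  # no feathering: edges stay obvious
        copy.redactions.append(r)  # who redacted what, where, and why travels with the copy
        copy.altered = True
    return copy
```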

[Chavez continues] Same token with that square on an officer’s item. You can just do the square on the entire clip and mask that. And that’s where the gradients come in; so at that point you can start making it – you know – it’s a very subtle change. You’re looking at a slide variable [presumably a ‘strength of effect’ controller in the software] it’s going along pretty good and now you start seeing a little discoloration and it’s not as bright, it’s darker. Usually when it’s darker that means there’s a mask because it’s a gradient now that’s put on it.

I’ve done expert testimony and affidavits and I’ve got to say Chavez’s testimony sucks. It’s technically accurate but it’s a very poor description of what’s going on. In the edit I did above, I would call the elliptical areas “selection regions” (per Photoshop) with an “effect” applied to the selection regions. What Chavez is talking about with regard to ‘gradients’ is the ability of advanced editing software to control the degree to which an effect is applied in a selection region. This is all cracking good and interesting stuff if you’re talking about digital image editing but it’s “WTF” if you’re talking about evidence. If I were presenting evidence that the guy with the black hair and the neat beard was at a protest, it doesn’t matter if the rest of the scene is messed up. There is absolutely no need for edits to evidence to be subtle.
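Here is a rough statement of what Chavez's “gradients” do, in conventional image-editing terms. This is an assumption about how this particular product works, based only on his description. A feathered mask $\alpha$ sets how strongly an effect $e$ is applied to each pixel $p$:

$$
p'(x,y) = \bigl(1-\alpha(x,y)\bigr)\,p(x,y) + \alpha(x,y)\,e(p)(x,y), \qquad 0 \le \alpha(x,y) \le 1
$$

Take a darkening effect $e(p) = k\,p$ with $0 < k < 1$. The formula then reduces to $p' = \bigl(1 - \alpha(1-k)\bigr)\,p$. A mask that ramps from $\alpha = 0$ at its edge to a small $\alpha$ inside produces exactly the slight darkening that Chavez says gives a mask away. A redaction designed for evidence would use $\alpha \in \{0, 1\}$ and an unmistakable effect, so there is no subtle middle ground.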

[The podcast narrator resumes] Taser claims that any modifications made to evidence in the system will be reflected in the audit trail. In addition, the original piece of evidence always remains intact. But whether that audit trail is immediately provided with evidence that is turned over is up to the department. Never mind the fact that Chavez’s testimony makes it clear that it’s very easy to hide which evidence, including videos, has been edited.

[Chavez again] “You upload a video clip to evidence.com, that becomes the parent – at that time you go in and do whatever operations you want on that original, then it becomes the orphan – it becomes the orphan so at the end of the operation you still have the original which hasn’t been altered and now you have the new orphan which you’ve added/deleted/redacted, whatever operation you’ve done. So you store that file – you can go in at the same time, close everything up, and the one that was the parent, actually go ahead and do the deletion, come back, make your orphan and upload it as if it were a new piece of evidence that came in that was uploaded to the cloud.”

That’s reasonable behavior if the system you’re building is a cloud photo scrapbook, but evidence? You want the system to function so that only authorized devices and authorized users are allowed to create chains of evidence under tightly controlled circumstances.
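One way to express that rule is sketched below, under a few assumptions. Each body cam holds a key provisioned when it enters service (the `device_keys` table is hypothetical). Only data authenticated with a registered device key (HMAC-SHA256 here) can enter the store as an original. Every derivative must name its parent, and there is simply no delete path. Under those rules, the parent/orphan trick Chavez describes fails at the point where the orphan is re-uploaded as new evidence:

```python
import hashlib
import hmac


class EvidenceStore:
    """Sketch: originals must come from a registered device, derivatives must name
    their parent, and there is no delete path at all."""

    def __init__(self, device_keys: dict[str, bytes]) -> None:
        self.device_keys = device_keys  # hypothetical: keys provisioned when a unit enters service
        self.items: dict[str, dict] = {}

    def ingest_original(self, device_id: str, data: bytes, tag: bytes) -> str:
        key = self.device_keys.get(device_id)
        if not key or not hmac.compare_digest(tag, hmac.new(key, data, hashlib.sha256).digest()):
            raise PermissionError("originals must be authenticated by a registered device")
        eid = hashlib.sha256(data).hexdigest()
        self.items[eid] = {"data": data, "parent": None, "device": device_id}
        return eid

    def derive(self, parent_id: str, user: str, data: bytes, operation: str) -> str:
        if parent_id not in self.items:
            raise KeyError(parent_id)
        eid = hashlib.sha256(parent_id.encode() + data).hexdigest()
        self.items[eid] = {"data": data, "parent": parent_id,
                           "user": user, "operation": operation}
        return eid

    def delete(self, eid: str) -> None:
        raise PermissionError("evidence is never deleted through this interface")

    def lineage(self, eid: str) -> list[str]:
        """Walk from any item back to the device-authenticated original."""
        chain = []
        while eid is not None:
            chain.append(eid)
            eid = self.items[eid]["parent"]
        return chain
```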

What I’m getting at is that it sounds like the system has built-in failure points that don’t have to be there. I suspect that the police departments wouldn’t buy it if it didn’t. The point is: there were people who looked at the system and tried to make it better. If it’s got these problems, it’s because the system’s requirements changed to cater to its real customers: the cops.

In the case of the edited “evidence” that the Baltimore Police Department released, if it was edited using the evidence.com online tools, that would be reflected in evidence.com’s logs and the audit trails of the videos that were released. That information ought to be public, but it won’t be. Because (if you listen to the whole series) it’s pretty clear that everyone involved in the Freddie Gray murder, from the cops who broke his neck, to the driver of the van who ignored the newly paraplegic man in the back, to the commissioners and even the prosecutors, was corrupt and engaging in a cover-up. Not only that, they provoked a crowd, called it a “riot”, and used it as an excuse to tear-gas civilians who were sitting in their homes in the vicinity.

The whole story is layered shit. And under the shit, there’s more shit. It’s shit from the top to the bottom, and that’s a pretty good metaphor for American policing.

640×480 jpeg@20% quality, 30kb

Interesting work: one of the problems was that the cop cam unit was battery powered and did not have enough energy to run any fancy encryption. That was presented to me as the reason why the cam unit did not simply stream data automatically to the service’s ingestion routines, which would have provided a strong guarantee that data loss or corruption would not occur. Assuming some magic encryption fairy dust. How to do it? As I explored the system, I learned that the cam unit spent its non-duty time in a battery-charger/holder that also synchronized data to a local server. The local server then handed the data off to the service’s ingestion routines. After the synchronization was complete, the cam unit’s local memory was overwritten with zeroes by an erasure routine in the cam unit’s software. While the cam unit was in the charger, it had lots of CPU cycles to burn, since it was not running on battery – so I proposed that the local server generate a session-ID each time the cam unit was put in the holder; the cam unit would then use a device-key (populated into the cam unit when it was first put in service) and the session-ID to encrypt all of the system’s zeroized memory. When data was transmitted off the cam unit, all it needed to do was XOR the encrypted zeroized blocks against the captured video and transmit the results. The fun part of the whole thing is that, once that process is complete, the device no longer needs to hold the session-ID. Someone attacking the communications would have to know the device-key and have the other key (the session-ID), which never left the local server. The local server needed only to forward that key to the server ingestion routines, and the cam unit could simply submit the data directly in the form of encrypted blocks. If a unit was lost and someone found it and tried to get at the data, they’d just have big chunks of random stuff and, in order to extract the video, they’d need to already have a copy of the video.
In cryptography, that use of an encryption algorithm amounts to a precomputed keystream, as in output-feedback or counter mode (plain “electronic code book mode” would not work here, since it encrypts every identical zero block to the same output), and it is as strong as the key and the underlying cryptosystem. Using the plugged-in state of the cam unit was not a tremendous innovation – I would bet a bag of donuts that Roger Schell or someone like that invented the technique in the 1970s – but it neatly solved the problem as it had been framed. I did some estimates of power consumption and determined that my proposed system was basically “free” in terms of battery life. The body cam could stream thumbnails over the encrypted link and save full-resolution video on the local memory, to be offloaded and ingested when the cam unit is back in the cradle. In the meantime, if something unfortunate happens to the cam unit, the thumbnail stream is still there (640×480 50% quality JPEGs 15 seconds apart would consume about 100 kB/minute).
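The charger-time trick can be sketched as follows. This is an illustration under stated assumptions, not the 2009 design: HMAC-SHA256 in counter mode stands in for the block cipher, and `CamUnit`, `dock`, `capture`, and `ingest` are hypothetical names. While docked, the unit fills its memory with keystream derived from the device-key and session-ID, and it keeps no copy of the session-ID. On duty, capturing video costs one XOR per byte, and each XOR consumes the keystream it uses:

```python
import hashlib
import hmac

BLOCK = 32  # bytes per keystream block (SHA-256 output size)


def keystream(device_key: bytes, session_id: bytes, n_blocks: int) -> bytearray:
    """Precompute n_blocks of keystream; HMAC-SHA256 over a counter stands in for the cipher."""
    out = bytearray()
    for counter in range(n_blocks):
        out += hmac.new(device_key, session_id + counter.to_bytes(8, "big"),
                        hashlib.sha256).digest()
    return out


class CamUnit:
    def __init__(self, device_key: bytes) -> None:
        self.device_key = device_key  # populated when the unit is first put in service
        self.memory = bytearray()
        self.used = 0

    def dock(self, session_id: bytes, n_blocks: int) -> None:
        # On mains power: overwrite memory with keystream. session_id is not stored.
        self.memory = keystream(self.device_key, session_id, n_blocks)
        self.used = 0

    def capture(self, video: bytes) -> bytes:
        # On battery: XOR video into the precomputed keystream, in place.
        end = self.used + len(video)
        if end > len(self.memory):
            raise MemoryError("keystream exhausted; unit must be docked")
        for i, b in enumerate(video):
            self.memory[self.used + i] ^= b
        chunk = bytes(self.memory[self.used:end])
        self.used = end
        return chunk


def ingest(device_key: bytes, session_id: bytes, ciphertext: bytes, offset: int = 0) -> bytes:
    """Server side: regenerate the keystream from both keys and undo the XOR."""
    n_blocks = (offset + len(ciphertext) + BLOCK - 1) // BLOCK
    ks = keystream(device_key, session_id, n_blocks)
    return bytes(c ^ ks[offset + i] for i, c in enumerate(ciphertext))
```

A real design would have to handle two caveats. First, a session-ID must never repeat for the same device-key, because reused keystream leaks the XOR of two videos. Second, XOR alone gives confidentiality, not integrity, so the blocks would also need a MAC. A production unit would more likely use a vetted mode such as AES-CTR than this stand-in.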

** Another nice feature of the suggestion I made above is that you can also send very small “heartbeat” messages securely (my suggestion was a thumbnail, battery level, GPS data, and timecode), so if the cam unit goes offline because someone – I dunno – backed a car up over it, the system has an exact record of when the cam unit ceased functioning, and all the data right up to that point.
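Here is a sketch of the heartbeat under some assumptions. The field names, the HMAC-SHA256 authentication, and the shared `key` are illustrative, and the thumbnail is represented by its SHA-256 digest (the image itself would ride alongside). The server keeps the last heartbeat that verifies and arrives in sequence, which tells it when the cam unit stopped functioning. The bandwidth helper just restates the estimate above: four thumbnails a minute at roughly 25 kB each. That per-image size is back-derived from the ~100 kB/minute figure, not measured.

```python
import hashlib
import hmac
import json
from typing import Optional

HEARTBEAT_INTERVAL_S = 15  # thumbnail spacing from the estimate above
THUMBNAIL_BYTES = 25_000   # assumption: implied by ~100 kB/minute at 4 thumbnails/minute


def bytes_per_minute(interval_s: int = HEARTBEAT_INTERVAL_S,
                     thumb_bytes: int = THUMBNAIL_BYTES) -> int:
    """Thumbnail traffic per minute, ignoring the few bytes of heartbeat metadata."""
    return (60 // interval_s) * thumb_bytes


def make_heartbeat(key: bytes, seq: int, timecode: int, battery_pct: int,
                   lat: float, lon: float, thumb_sha256: str) -> tuple[bytes, bytes]:
    """Serialize one heartbeat and authenticate it with HMAC-SHA256."""
    body = {"seq": seq, "timecode": timecode, "battery": battery_pct,
            "gps": [lat, lon], "thumb_sha256": thumb_sha256}
    payload = json.dumps(body, sort_keys=True).encode()
    return payload, hmac.new(key, payload, hashlib.sha256).digest()


def last_good_heartbeat(key: bytes, messages: list[tuple[bytes, bytes]]) -> Optional[dict]:
    """Return the last heartbeat that verifies and follows in sequence;
    scanning stops at the first bad MAC or missing sequence number."""
    last = None
    for payload, tag in messages:
        if not hmac.compare_digest(tag, hmac.new(key, payload, hashlib.sha256).digest()):
            break
        body = json.loads(payload)
        if last is not None and body["seq"] != last["seq"] + 1:
            break
        last = body
    return last
```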

It appears that your ideas on security were a little “too secure” for their tastes. This is why we can’t have good things.

There is another mode in my suggestion, where the cam unit generates a random session-ID, encrypts the blocks, sends the key directly to the server ingestion routines, and then forgets it. In that mode the local server can’t access the data: it’s opaque and is copied on to the cloud server, so it’s tamper-proof and unreadable even if it stages through the local server. Perhaps thumbnails might go to the local server (basically the process inverts). That approach screams “I don’t trust you!!!!!” at the cops, which is what I would recommend now.
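Here is a sketch of that inverted mode. It assumes a hypothetical `ingest_key` shared only between the cam unit and the cloud ingestion service. A real design would more likely wrap the session key with the service's public key, but Python's standard library has no public-key encryption, so a symmetric key stands in. The local server only ever relays `blob`, which it cannot read:

```python
import hashlib
import hmac
import os


def _stream(key: bytes, nonce: bytes, length: int) -> bytes:
    """HMAC-SHA256 counter-mode keystream (a stand-in for a real cipher mode)."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hmac.new(key, nonce + counter.to_bytes(8, "big"), hashlib.sha256).digest()
        counter += 1
    return bytes(out[:length])


def _xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def device_seal(ingest_key: bytes, video: bytes) -> dict:
    """Cam unit: random session key, encrypt the video, wrap the key for the cloud only.
    session_key is not kept anywhere after return (a real device would also scrub RAM)."""
    session_key = os.urandom(32)
    nonce = os.urandom(16)
    sealed_video = _xor(video, _stream(session_key, b"video" + nonce, len(video)))
    wrapped_key = _xor(session_key, _stream(ingest_key, b"wrap" + nonce, 32))
    return {"nonce": nonce, "wrapped_key": wrapped_key, "sealed_video": sealed_video}


def cloud_open(ingest_key: bytes, blob: dict) -> bytes:
    """Ingestion service: unwrap the session key, then decrypt the video."""
    session_key = _xor(blob["wrapped_key"], _stream(ingest_key, b"wrap" + blob["nonce"], 32))
    sealed = blob["sealed_video"]
    return _xor(sealed, _stream(session_key, b"video" + blob["nonce"], len(sealed)))
```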

It also solves the “plug a USB in” problem. The device is just full of cryptobits. The ability to access data directly off the device is very worrisome; it hoists a red and black skull and crossbones flag labeled “governance”!

In fact, I would recommend that every city establish a City Desk office which is responsible for governance of cop cams; police cannot be trusted with this stuff. Nor can the local judiciary or the mayor’s office. In the Freddie Gray murder, the entire city of Baltimore’s apparatus conspired to clear itself of the crime; it was a true inter-agency cooperative effort. The City Desk would consist of paid positions staffed by people from the community.

It is clear to me that this is an issue that touches on public trust and the public cannot trust cops.

This kind of raises a question, doesn’t it — why aren’t you? Why not at least send a link to this blog to the Gray family lawyers, especially if the testimony they’re getting isn’t very good? I’m sure if I was a lawyer, what you just explained would seem veeery useful. You’re familiar with that specific system — if that doesn’t make you an expert witness, who is?

I spend a lot of time thinking about media, and I am not convinced that video evidence is worth much.

Even with an untampered original, no juror would ever see it. Both sides edit the video into a narrative that best suits their position, and present that. The testimony becomes one of dueling edits. Video, like a photograph, *feels* true while leaving out a great deal, while simultaneously people tend to see what they are led to see by others and their own prejudices.

Every time some shit goes down, we are confronted with endless murky cell phone video in which, objectively, you can see nothing whatsoever, but with commentary that clearly indicates that people are seeing what they want to see. The feeling of truth, that indexical quality of the video, makes us feel absolutely certain of what are often nothing more than our own biases, confirmed.

In the end, it boils down to whoever can produce and sell to the judge and jury the most convincing edit.

Andrew Molitor@#4:

I spend a lot of time thinking about media, and I am not convinced that video evidence is worth much.

It’s definitely problematic. But the alternatives are sworn testimony or discarding the notion of justice entirely. Video evidence is circumstantial and, like all other circumstantial evidence, it can (and should) be challenged.

Even with an untampered original, no juror would ever see it. Both sides edit the video into a narrative that best suits their position, and present that.

That is a flaw with how the justice system works, not a flaw with the idea of having video. I would imagine that in some courtroom a judge might order the entire unedited video to be played. Let the jury do their job and figure it out. In fact, I can imagine how that would be an excellent trial strategy for one party: “the other side has shown you an edited video that creates a narrative. We want you to see the whole thing, so you can see what appears to have happened and understand how the other side tried to create a different narrative through edits.”

Every time some shit goes down, we are confronted with endless murky cell phone video in which, objectively, you can see nothing whatsoever,

I challenge that assertion. There are plenty of situations in which we see murky cell phone video of cops shooting people in the back while they are running away, after the cops testified that those people were running toward them aggressively. That’s valuable as hell, because it shows that someone is lying. Was the video edited? Probably cropped. Was it created out of whole cloth? Probably not.

All evidence is circumstantial, and if it’s presented so that the pieces interlock and support each other, it can be powerful. If you have video evidence where “you can see nothing whatsoever”, then maybe it’s still contributing some valuable timing information to the rest of the assembled evidence. If Officer Porko says he shot a running suspect who was attacking him at 12:11am, and 3 people present murky cell phone videos that show a suspect running away at 12:15am, it’s going to call the clearer video into question.

I think we agree that evidence needs to be recognized as circumstantial and managed carefully, presented well and accurately to make whatever argument is being made.

The feeling of truth, that indexical quality of the video, makes us feel absolutely certain of what are often nothing more than our own biases, confirmed.

A prosecutor or attorney’s entire job is to manage that expectation.

In the end, it boils down to whoever can produce and sell to the judge and jury the most convincing edit.

I disagree, unless you are saying that all judicial systems are corrupt and are impossible to repair. I might agree with that, but that’s really got nothing to do with the quality of evidence. As we’ve seen, a video can show a cop shooting a running person in the back and they can get acquitted based on their testimony that the person who was shot was actually running the exact opposite direction.

Lawyering and prosecuting succeeds or fails based on presenting evidence to support an interpretation of events. Don’t blame the evidence for bad lawyering or corrupt judges. When a jury sees video that directly contradicts the testimony of a witness, and the witness is still considered credible, that’s a problem with the whole system – not with the video.

In the case of Freddie Gray, the entire system failed him: murdered him and covered it up, with all parts { cops, prosecution, mayor’s office, suborned witnesses, faked evidence } conspiring against him. It was pretty clear that in spite of multiple corroborating video streams showing Gray was severely injured during his initial arrest everyone decided that he had broken his own neck by hammering his head into the side of the van. There’s no amount of video that would have gotten justice for Freddie.

…“what do you mean by ‘secure’ in this context?” …

Judging from the attached wish list, keeping the data private/confidential didn’t even matter. :-O

brucegee1962@#4:

Why not at least send a link to this blog to the Gray family lawyers, especially if the testimony they’re getting isn’t very good?

I left a message on the podcasters’ answering machine while I was writing my posting (Monday). Haven’t heard back from them yet. I may never. One thing I mentioned in my message is that there is a tremendous amount of online video instruction in how to use the system as it is, which might help them better understand what it is capable of. I didn’t find Chavez’s description very enlightening, but that’s because I am already familiar with the material (if I weren’t, Chavez’s description would leave me more confused than I was before!).

There are some interesting quirks. I can’t claim to know how the system operates, because the system I saw was still in development and what has been fielded is clearly different. I could attest, as an expert, that the system does not function as it would if it were designed to provide security properties for evidence.

It’s often hard to get in touch with podcasters, bloggers, or journalists. Unfortunately, they often get threats and stalking, so my message may have gone straight to the spam bucket.

Pierce R. Butler@#6:

Judging from the attached wish list, keeping the data private/confidential didn’t even matter. :-O

I was involved in the early stages of system design. It certainly appears that the criteria for evidence integrity have changed since then. Was that reflective of customer desires or a choice of the system designers? I don’t know.

My impression was that the police aren’t concerned with the truth. If you want to know the truth, they’ll bloody well tell you what it is and if you don’t like the truth, you can fuck right off before you get a wooden shampoo. 2 + 2 = 5 and that is the truth.

These systems should not be under the control of the police. Or prosecutors. What’s scary is that, from the sound of it, evidence.com is one of the better systems out there. Imagine an in-house video archive maintained in a server closet at some pigpen somewhere.

Well, sure, it’s not all murky video where you can’t actually see anything. My point was that, given such a video, people still “see” things in it. As for videos in which the image quality is perfectly clear and it shows something, well, we still do not see what happened before, after, or out of frame, right?

The cop, if pressed, would simply say “I misspoke, he charged me a moment before the video begins, and when I shot him in the back as he fled, there was something just to the left that isn’t in the video that makes it OK,” which, you know, is probably a pack of shit, but still. Properly pitched by a good attorney, it pretty thoroughly spikes the evidence.

The malleability of supposedly reliable “evidence” is appalling. I am taking some time to teach my children a little media literacy, and have shared a few interesting cases with them.

Here is a link to a classic:

https://2.bp.blogspot.com/-qmCh25jBHBw/XTXZeJ8n3uI/AAAAAAAAccU/e8xzX_8TFAQUp7mLpzHFFMt9qDOW45b5ACLcBGAs/s1600/Three%2BSoldiers.jpeg

I apologize for the obnoxious link, and I hope that it works.

Yes, the system is pretty broken. The problem’s root cause is, of course, “all of it,” including the issues around cop bodycam footage. The point where I think the largest leverage exists, though, is in the way cops are prosecuted. The people charged with that responsibility simply do not have the will to do the job. Fix *that* and whatever the cops want to do with bodycams won’t matter much. Bad guys are pretty dumb, whether they have a badge or not. Your City Desk should in fact be a separate judicial body not beholden to cops, and whose job it is to prosecute cops.

Perhaps it could have its own union, but a shared pension with the cops, with whom it competes for funds. Our justice system is already adversarial, let’s put some fuckin’ coin in the game and see what happens.

I have no solutions for this, though, other than to appoint other guardians to guard the guardians. At which point, isn’t it turtles all the way down, or, possibly, up?

Andrew Molitor@#9:

The cop, if pressed, would simply say “I misspoke, he charged me a moment before the video begins, and when I shot him in the back as he fled, there was something just to the left that isn’t in the video that makes it OK,” which, you know, is probably a pack of shit, but still. Properly pitched by a good attorney, it pretty thoroughly spikes the evidence.

Yeah? And that’s when the opposition presents the entire video, going back half an hour.

You’re presuming that someone is always going to be able to manipulate the evidence, but that’s circular logic – if it’s easily manipulated then it’s not evidence. The problem with cop cams and cop cam evidence is exactly that the system conspires to make it easy to discredit their own evidence. And you and I know why that is. The only jury that will buy it is a jury that happens to consist (by some stroke of luck!) of retired members of the Fraternal Order of Police. Hey, luck of the draw, right?

The malleability of supposedly reliable “evidence” is appalling.

Yes, and no. That’s my point in this entire discussion. It can be malleable, but that’s because it’s not designed to be captured as evidence. Cop cams are (as presently constituted) there to collect the cop’s viewpoint, not evidence about what may or may not have happened.

The point where I think the largest leverage exists, though, is in the way cops are prosecuted. The people charged with that responsibility simply do not have the will to do the job.

I think that they have the will to deliberately not do that job, because they don’t see that as their job. They are there to protect the cops.

The Freddie Gray case is horrifying because it’s pretty clear that the cops were charged the way they were so that the state could lose its case.

I have no solutions for this, though, other than to appoint other guardians to guard the guardians. At which point, isn’t it turtles all the way down, or, possibly, up?

There was a notion that journalists would expose corruption and self-dealing, but that appears to be a dead letter. Not that it matters in Baltimore, where the paper of record ought to be renamed The Whitey.

I suspect that we agree almost entirely, and our differences are almost entirely about how relatively important things are. I do agree that tamper-proof video would be better than, let us say, “tamper-resistant” video, for example; I just don’t think it’s as important as you do.

I am happy to let it rest there.

Andrew Molitor@#9:

Here is a link to a classic:

https://2.bp.blogspot.com/-qmCh25jBHBw/XTXZeJ8n3uI/AAAAAAAAccU/e8xzX_8TFAQUp7mLpzHFFMt9qDOW45b5ACLcBGAs/s1600/Three%2BSoldiers.jpeg

Immediately, I would be asking for the entire roll of film, or all of the surrounding images photographed that day, with all metadata intact. Also, I know you’re a photographer, so you know that’s not the default crop you’d get off the camera – the image has already been altered for some effect, in other words.

I take your point that manipulation is always possible, and even likely, but the trick is to let the opposition fall on their own sword with their manipulations, if possible. For example, we can pretty easily tell that the prosecution was planning on losing their case against the cops that murdered Freddie Gray: they didn’t even ask “why were all your body cameras turned off right when you were making an arrest?” Such a coincidence! The world is full of such coincidences.

As The Whitey says [sun]

People aren’t going to trust those cops any farther than you can comfortably spit a live rat. I feel bad for the cops having emotional difficulty – at least they didn’t have their necks broken.

Andrew Molitor@#11:

I do agree that tamper-proof video would be better than, let us say, “tamper-resistant” video, for example; I just don’t think it’s as important as you do.

Tamper-resistant video: nice

All the video: better

I will note that even the media edits the video. Oh, you don’t want to see the part where they broke his neck because it’s really upsetting. And I know you know this, too: the reason some images are historically important (e.g.: napalm girl, bodies at My Lai, bucket man in Abu Ghraib) is because they are upsetting.

What drives me crazy about this shit is that we’re expected to believe that a surveillance camera panned back and forth and recorded clear video for weeks then suddenly experienced a problem with its gear drive mechanism during the 6 minutes in which a citizen was mortally wounded by cops, and then the gear drive re-engaged itself and flawless function resumed afterward. This bullshit beggars even basic imagination.
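For what it’s worth, a “gear drive failure” like that is exactly the kind of thing a recording pipeline designed around evidence integrity would make self-evident. Here is a minimal sketch, assuming a hypothetical design (not the system I reviewed, and not a description of anything fielded) in which each recorded segment carries a sequence number, start and end timestamps, and a SHA-256 hash chained to the previous segment. Deleting, reordering, or editing a segment breaks the chain, and a hole in the timeline shows up as a gap. The names `Segment`, `append_segment`, and `verify_log` are illustrative only.

```python
import hashlib
from dataclasses import dataclass

GENESIS = "0" * 64  # placeholder "previous hash" for the first segment


@dataclass
class Segment:
    seq: int        # position in the recording, assigned at capture time
    start: float    # segment start, seconds since recording began
    end: float      # segment end, seconds since recording began
    data: bytes     # the encoded video for this segment
    prev_hash: str  # digest of the previous segment
    digest: str     # SHA-256 over this segment's fields plus prev_hash


def _digest(seq: int, start: float, end: float, data: bytes, prev_hash: str) -> str:
    h = hashlib.sha256()
    h.update(f"{seq}|{start!r}|{end!r}|{prev_hash}|".encode())
    h.update(data)
    return h.hexdigest()


def append_segment(log: list, start: float, end: float, data: bytes) -> Segment:
    """Hypothetical capture-side step: chain a new segment onto the log."""
    prev = log[-1].digest if log else GENESIS
    seq = len(log)
    seg = Segment(seq, start, end, data, prev, _digest(seq, start, end, data, prev))
    log.append(seg)
    return seg


def verify_log(log: list, max_gap: float = 1.0) -> list:
    """Return human-readable problems; an empty list means nothing was detected."""
    problems = []
    prev = GENESIS
    for i, seg in enumerate(log):
        if seg.seq != i:
            problems.append(f"segment {i}: sequence number is {seg.seq}, expected {i}")
        if seg.prev_hash != prev:
            problems.append(f"segment {i}: hash chain broken")
        if _digest(seg.seq, seg.start, seg.end, seg.data, seg.prev_hash) != seg.digest:
            problems.append(f"segment {i}: contents do not match digest")
        if i > 0:
            gap = seg.start - log[i - 1].end
            if gap > max_gap:
                problems.append(f"segment {i}: {gap:.0f} s gap before it")
        prev = seg.digest  # continue from the segment actually present
    return problems


if __name__ == "__main__":
    log = []
    for n in range(4):
        append_segment(log, 60.0 * n, 60.0 * (n + 1), f"frames {n}".encode())
    print(verify_log(log))  # an intact log reports no problems
    del log[2]              # the "gear drive failure"
    for p in verify_log(log):
        print(p)
```

The chain by itself only makes tampering evident, not impossible: whoever holds the log can delete a segment and recompute every later hash. In a design like this sketch, the latest head hash would have to be periodically anchored somewhere the custodian does not control (signed or timestamped by a third party), so that a rewritten chain no longer matches what was anchored.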

I agree with you about your main point, which is that the narrative is created by selective omission rather than outright fakery. You know, the cop who shoots the guy in the back – maybe his unclear, jerky video is presented – but what happened to the cop cams of the 2 other cops who were standing around? Are we supposed to really believe that nobody looked at those videos?

One other point: the more videos there are, the harder they are to fake; they corroborate each other. Of course they can still be faked, and they will be. The City of Baltimore went to some lengths to release edited videos; after all, a cop’s life was on the line!

No doubt this is my naivety talking, but is it not possible to tell your client that you are delivering a robust and secure system that nonetheless lets them get away with tampering with evidence, and then instead deliver a system that catches them in the attempt? I do not for a single solitary moment believe such professional deception to be unethical.

@Callinectes sure, if you don’t want a repeat contract. And want to annoy a lot of thugs with guns, who are friends with the people who investigate things like kidnappings and missing persons…

Callinectes@#14:

is it not possible to tell your client that you are delivering a robust and secure system that nonetheless lets them get away with tampering with evidence, and then instead deliver a system that catches them in the attempt?

Backdooring systems would require a time machine, since, at the time, my decisions made sense to me.

I know that sounds like a wiseass answer, but it’s true. We can’t tell whether a system will survive and become interesting or important or corrupt, so we’d have to backdoor everything all the time and see what happens. Usually over time the backdoors would evolve out or be discovered, which would be a huge problem requiring a lot of explaining. There actually was a series of incidents where a penetration tester was discovered to be leaving backdoors so he could come back and exploit his own clients 5 or 6 months later. That was an interesting forensic investigation; a friend of mine and I were able to figure out what was going on and made sure that the guy never worked in information security again – and he didn’t know why, other than assuming that his backdoor kung fu was flawed.

I’ve had a total of two contracts where part way through I realized I had stepped into something really nasty. In both cases, I extricated myself delicately from the situation. In one of them, I was able to defuse a disastrous situation from a safe (for everyone) distance by dropping a few facts in someone’s ear at a conference. I’ve never backdoored a system, but not for lack of opportunity – mostly lack of desire to spend time in solitary confinement.

From what I’ve seen, the American People are remarkably resistant to evidence of malfeasance or outright fraud. That’s one of the key points carried through the podcast about Freddie Gray: in spite of massive evidence of police malfeasance and evidence of a cover-up including altered evidence, the press had moved on to sexier stories and the prevailing wisdom was that the city of Baltimore released evidence that showed that Freddie Gray broke his own neck to spite those poor hard-working cops.

Mostly serious suggestion: allow the victim and the victim’s family and close friends to have the first opportunity to go before the grand jury to get an indictment, and to be prosecutor or hire a lawyer of their choosing to be prosecutor. In most cases, the victim or the victim’s friends and family will decline, and everything works as normal. In other words, bring back private criminal prosecutions, like how it used to work circa 1800, with the assumption that some state office would pick up the bulk of cases because the victim would defer to them in the bulk of cases. I think the plan would work, but most people seem to say that it’s super bad or completely unworkable or something.

@GerrardOfTitanServer

That probably needs to come with some sort of cost control; I would worry about poor people’s access under such a system.

Maybe just randomly assign prosecution cases to the lawyers?

Other than that, it doesn’t immediately sound less effective than the current system.

My response is that it’s better than nothing, and the same problem exists in civil court too, and yet that seems to be working to some level that is better than nothing.

If I knew a better approach, I’d give it. I have nothing against taxes to help poor people.

Oh, one other idea: for every dollar the government spends on prosecutions, it has to spend an equal amount on public defenders.