This appeals to my sense of the absurd. Instead of taking an AI and training it to turn photographs into converted images “in the style of” some training set, what if we plug the pipeline in backwards?

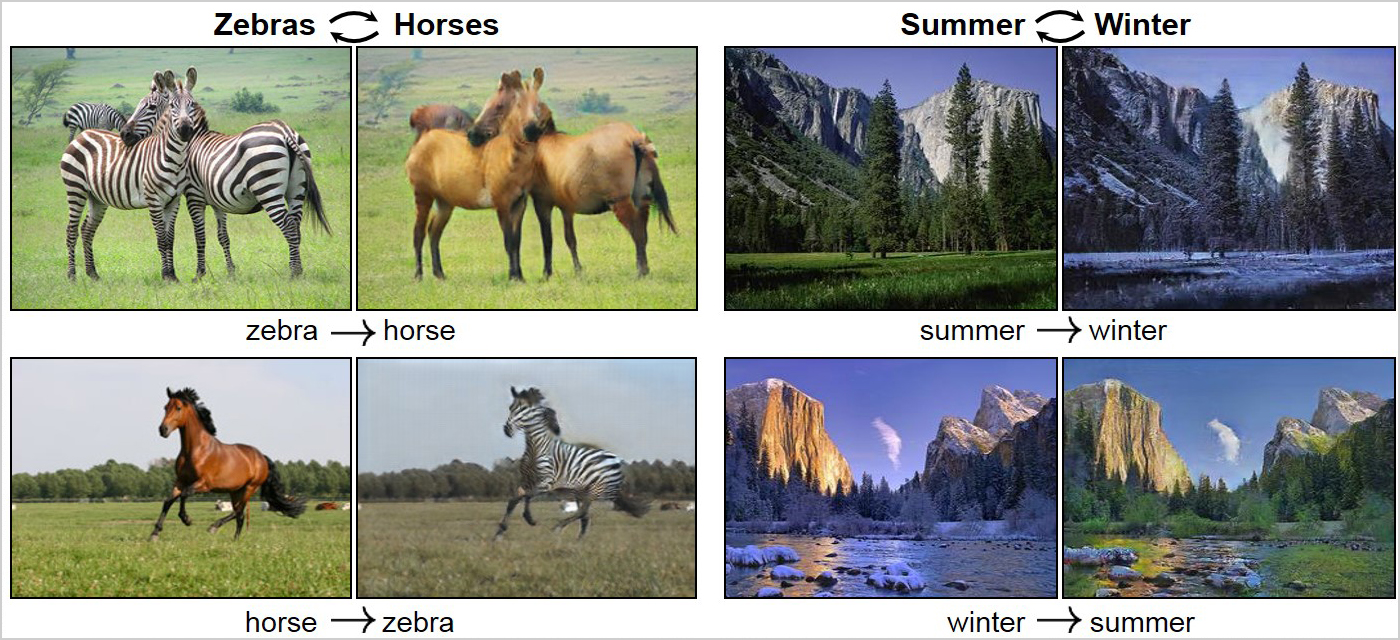

A team of researchers at UC Berkeley have revealed an “Unpaired Image-to-Image Translation” technique that can do something really interesting: it can turn a painting by Monet into a ‘photograph’… also, it can transform horses into zebras and summer into winter.

It sounds a lot like Adobe’s “smart layers” except the layers are regions identified by the AI, plus some basic AI repaint transformations. [petapixel]

It is billed as “turning an impressionist painting into a photograph” but that’s not really possible – a photograph would show information that never existed in the painting. The AI may add new information, in which I would call it a “rendering” not a photograph. One might call it an attempt to produce a “photo realistic” image.

The AI doesn’t do a very good job at the horse->zebra->horse conversions. Look at the ears. And, look at the right buttock of the right zebra the “horse” conversion: the stripes are still there.

I wonder how much longer people will recognize that as a camera?

I don’t want to fault the AI, it’s pretty cool, but it’s not a good enough artist to fool a human. It’s impressive progress, though. I assume the AIs will only get better, and their training sets will only get deeper – their knowledge will get better with practice. The zebra->horse conversion would fool someone who doesn’t know horses and as the AI gets more knowledgeable it’ll inevitably learn about the ears.

The winter->summer conversion didn’t get all the snow off the rocks, either. And the summer->winter conversion was cleverly selected to be scene with evergreen trees and no trees that drop their leaves.

In his book Implied Spaces [amazn] Walter Jon Williams introduces us to a person who is existing in a procedurally-generated artificial world. He uses the term “implied spaces” to describe the fractally-detailed regions of the map that are ’empty’ until you look at them – at which point the game fills them with an appropriate level of detail.

Let’s look at an implied space:

Implied space: fill in city here [source]

Adobe constantly experiments with new imaging manipulations; a good new transform is worth a lot of money (e.g.: that popped-out HDR stuff everyone was using for a couple years) Adobe has developed what they “Scene Stitch” [petapixel] which is a content-aware fill (call it an AI that trains itself quickly regarding the contents of an image) These images are from Adobe:

Let’s say you have a desert scene that is marred by the presence of a road. Normally in photoshop you’d start blotting over it with the clone brush and it might look like this:

There are repeats, because we had to duplicate the information – we couldn’t create it. Note that the demo forgot the other piece of road (lots of experience with doing demos makes me wonder if there’s a reason that it wouldn’t handle the other road correctly!) – but with the new Adobe content sensitive stitching, it apparently generates implied spaces.

Immediately I wish I had a copy of that to play with. Why? I’d take one of my photographs and enlarge it hugely. Then, I’d let the stitching routine generate implied spaces in the enlarged image. There are some interesting and warped possibilities.

Here, an image is shown with the region to be filled highlighted (Adobe’s image) – presumably there is a source for the additional information.

That’s not out of this world, difficulty-wise, for just plain photoshop pixel-pushing. But, if you can do that with a couple clicks, then you could automate sampling in implied spaces over a much larger image – of you could give it a weird source and see what it does. I would like to see what would happen to that skyline picture if my sample dataset was a plate of spaghetti and meatballs. Or if the skyline was stitched with city-scapes from Star Citizen.

One last point about all this: these features are powered by Adobe’s cloud service, Adobe Sensei. Like with Prisma and Deep Dreams you shove your data up into the cloud, it gets hammered and pixel-pounded, then you get it back. There are going to be interesting security problems with that. I hope Adobe thinks this through (they have a horrible history of security mistakes) because I can think of a couple ways that this could go wrong.

Geeky note on procedural generation: A procedural generation system has great big tables of conditionals and probabilities following those conditions just like a Markov Chain. So, when you enter an implied space – let’s say it’s a stellar system in Elite: Dangerous – you roll some dice and see what the typical distribution of planets is in that type of star system. Then for each planet, you roll dice for probability distribution of moons for that type of planets in that type of star system. Then you roll dice for the orbits, etc. You get down to a broad voxel-space for each moon, and then within the voxels, you subdivide and subdivide as necessary. Zoom in far enough, you see individual rocks.

Of course there is one problem with that: if you roll dice you’d need to remember the die rolls, or the universe will look different for each player – remembering the die rolls for every detail of 400 billion stellar systems is going to take a lot of storage. So, instead, what you do is pick a magic string as a seed, and plug that into a cryptographic function. Let’s say we’re using the Data Encryption Standard (DES). So, we take 1K of zeroes and encrypt them with our string.

int dicerolls[1000 * sizeof(int)]; // 1000 dice rolls

des_keyschedule key; // cipher machine state

bzero(star_system_id,dicerolls); // pre-fill the dicerolls with the star system number

des_setkey(key, “the secret to life is low expectations”);

des_cbc_encrypt(key,dicerolls,); // cipher block chaining mode (cbc) folds the data from one block to the next

Now, you have 1000 dicerolls that are, for all intents and purposes, random-looking – but everyone who goes to that system will have the same dice rolls. As long as the dice rolls are specific to the same decision-points on the table, you’ll get the same answer. So if I fly to SKAUDE AA-AA-1H14 planet B’s first moon, and go to a particular region on the map, and look closely, I’ll see the same dice-rolled rocks in the same positions as you would.

My DES function calls are from 20-year-old memory, don’t try to write a crypto application using them, they’re just an illustration. The point is, once you bootstrap your dice rolls, then all you need to keep is what you rolled on which ‘table’ when you rolled it. Next time you go to the same system, it’ll generate the same ‘rolls’ and the system appears persistent but it’s not.

I first encountered procedural generation playing rogue on a PDP-11 running BRL V7 UNIX in 1981. rogue was probably not the first game to use it – it’s a very old technique; in the case of rogue it generates a new dungeon for each player every time they start a game. But, if you used the same random number seed for every player (let’s say the game used the day of the year as the seed) everyone who played that day would get the same dungeon.

I believe that there are even slicker ways to do the “thousand dice rolls”. A few interesting hash tricks with a robust PSEUDO-random number generator – or a family of them – and you can get all the detail you need, the same for every client, without ever having to save even as much as a secret key phrase – let alone one of those per system. Or per detail; 1000 rolls would get used up FAST.

Not quite – the original paper calls it a ‘translation’, the article about it calls it a “photograph”(in quotes).

Not really an AI question but I wonder if there would be a chance to recover the colours in a black and white photo from the early 20th C ?

jrkrideau@#3:

Not really an AI question but I wonder if there would be a chance to recover the colours in a black and white photo from the early 20th C ?

Since the original information is lost, it would not be possible to accurately reconstruct it, but an AI could learn how to map the grey tones to something likely. The problem is that, depending on the film and how the prints are made, the colors don’t always map to the same grey-tones.

There are photo retouchers who do an amazing manual job of “colorizing” images; I bet you could train an AI with their work and the originals as inputs, then supervise its learning.

abbeycadabra@#1:

A few interesting hash tricks with a robust PSEUDO-random number generator

Using a cryptosystem, as I suggested, is slower than a PRNG but basically it works the same way – cryptosystems have the property of producing output that ought to be indistinguishable from random (and, of course, it’s reproducible with the same keying).

I could probably figure out what Elite:Dangerous uses but they probably do most of the “rolls” on the server not on the endpoint.

Maybe they were trying a shortcut to resurrecting the quagga?

https://en.wikipedia.org/wiki/Quagga_Project

Marcus @#5:

That wasn’t lost on me, and I didn’t think the existence of PRNGs was something you hadn’t heard about. My point was more along the lines of how PRNGs are intended for this kind of usage and generally therefore more efficient – as you said! – but also that connecting some different or even just differently-salted ones in a multidimensional pattern, with layered results seeded off an above result, means you could get all the way to tight detail without ever having to save more than a few ints for the whole universe.

Better yet, you can invert the locus of control for a lot of these, and basically use a PRNG network to ‘query’ information about some particular location by feeding its coordinates – whatever ‘coordinates’ might be defined as for the current level of detail. Come to think of it, a scheme of salt-by-hash for a string made by concatenating the location and the requested value would do a LOT.

I have no idea what Elite: Dangerous does, but this would work.

PRNG worldbuilding is a subject that particularly interests me. When I was really tiny, like 9 years old, I invented a whole D&D-inspired game that procedurally generated a whole dungeon to explore.

abbeycadabra@#7:

Better yet, you can invert the locus of control for a lot of these, and basically use a PRNG network to ‘query’ information about some particular location by feeding its coordinates – whatever ‘coordinates’ might be defined as for the current level of detail. Come to think of it, a scheme of salt-by-hash for a string made by concatenating the location and the requested value would do a LOT.

I’m not sure if that’d work for a galaxy sim, because galaxies are pretty sparse. I guess you could “roll” for every unit of distance (“is there a star here?”) but I believe they must be doing a hierarchy at some level – i.e.: for each 10ly box, how many star systems are in it? then locate them and work down. I’d love to know but it’s sheer curiousity. I don’t believe Braben or the Elite:Dangerous team have ever said much about how it works except that it’s based on the best statistics they could get about various types of galactic regions and their composition. I believe that’s how implied spaces are done in most games: “oh, we’re in a desert. roll for “desert stuff” at this location…

One fun problem that has come up over and over in Elite is that people want there to be MORE STUFF out there to find. Usually when someone complains, a few of us would say, “either the probabilities would be completely off, or how do you know there isn’t stuff out there already because the odds of finding it are basically equal to zero?” Implied spaces ought to tend to be very very sparse and in deep space, you’ve got a whole lot of “sparse” to work with.

a scheme of salt-by-hash for a string made by concatenating the location and the requested value would do a LOT.

I believe one of the Elite:Dangerous engineers did say something about how they use a secret salt and a cryptographic hash that they just rehash as many times as they need to. As an implementation detail, that’s less efficient than just encrypting a lot of zeroes with a stream cipher.

PRNG worldbuilding is a subject that particularly interests me. When I was really tiny, like 9 years old, I invented a whole D&D-inspired game that procedurally generated a whole dungeon to explore.

That is super cool!

If you have a procedurally-generated dungeon, you can actually build something that surprises you, if the underlying set of possibilities is large enough.

My first computer program, around 1975, was a BASIC program that generated D&D characters. My second was one that rolled up rooms for dungeons. It generated a lot of “detail” and did all the pencil-work for me; I’d just tear off a few dozen rooms before we started a session, and check them off as their contents were discovered. Come to think of it, I used that as implied spaces – there were static rooms that had pre-planned important stuff, and then there was the default, which was random.