The currency of computer security is Trust – the degree to which you can believe that your system is doing what you expect it to. There are a lot of properties that comprise trust, including integrity, reliability, etc., each of which is made up of smaller properties like non-repudiation, auditability, resistance to replay attacks, ad infinitum. We talk about trust loosely; it’s like Liberty or Good Cinematography – it’s a useful concept for describing the relationship between ourselves and the systems we use – whether they work right for any given notion of “right.”

One of the other properties of a trustworthy system is that it does what you want it to do, and it doesn’t do things you don’t want it to. The category of “things I don’t want” is infinite in potential, so we shorten that to “do only what I want and nothing else.” A system that does other stuff you didn’t ask it to is not trustworthy – it may be a mildly undesirable feature like Microsoft’s animated paper clip in Office, or it could be a highly undesirable feature like occasionally selling your entire stock portfolio and investing in dodgy penny stocks. From a high-level perspective, ‘malware’ is just an unwanted feature that is doing stuff for you, like aggressively sharing your private data.


That’s another aspect of a trustworthy system: it protects the data you want protected, publishes the data you want published, and doesn’t make those sorts of broad policy decisions for you (because: how can it get that right?). A system that starts sharing data you want protected is untrustworthy, insecure – or, you could just say, it’s compromised.

From a high-level security perspective, then, you can immediately see that a system that works based on “opt-out” is going to be more likely to force you into making a bad policy decision than one based on “opt-in,” because anything you forgot to forbid is allowed. More precisely, security types would talk about “fail-graceful defaults” (the classic term is “fail-safe defaults”): the system should always tend to do the least damaging thing unless it’s explicitly told to do something risky. In a fail-graceful system, your phone would not export all its data without asking you first: “hey, shall I make your contact list available to your friends?” or whatever.
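To make the opt-in/opt-out difference concrete, here is a minimal sketch. The class names and the (data category, recipient) permission model are hypothetical, invented for illustration; no real phone OS exposes this API. The interesting case is a request nobody anticipated: the opt-in policy refuses it, the opt-out policy waves it through.

```python
class OptInPolicy:
    """Fail-safe default: nothing is shared unless the user explicitly granted it."""

    def __init__(self) -> None:
        # (data category, recipient) pairs the user has approved
        self._grants: set[tuple[str, str]] = set()

    def grant(self, category: str, recipient: str) -> None:
        self._grants.add((category, recipient))

    def allowed(self, category: str, recipient: str) -> bool:
        # Anything not on the list, including categories the user never
        # thought about, is denied.
        return (category, recipient) in self._grants


class OptOutPolicy:
    """The opposite design: everything is shared unless the user blocked it."""

    def __init__(self) -> None:
        # (data category, recipient) pairs the user has blocked
        self._blocks: set[tuple[str, str]] = set()

    def block(self, category: str, recipient: str) -> None:
        self._blocks.add((category, recipient))

    def allowed(self, category: str, recipient: str) -> bool:
        # Anything the user forgot to block is allowed.
        return (category, recipient) not in self._blocks


opt_in, opt_out = OptInPolicy(), OptOutPolicy()
opt_in.grant("contacts", "friends")
opt_out.block("contacts", "ad-network")

# A request the user never anticipated:
print(opt_in.allowed("location", "ad-network"))   # False
print(opt_out.allowed("location", "ad-network"))  # True
```

With opt-out, the user has to predict every combination in advance; with opt-in, a forgotten case fails closed.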

None of that deals with outright malicious and deceptive systems. Imagine I had an app and I wanted to give a copy of your contact list to my marketing team. What if it automatically added me to your contact list as “foozum.com official site” and then asked, “shall I make your contact list available to your friends?” If you click OK, then, since I am now your friend, you have just given me your contact list. That’s a silly example, except that it isn’t: horrible skeevy marketing weasels use that sort of trick all the time. It’s why, for a while, virtually every business wanted you to install their app (and a lot of them still do): they get to have their code running on your system, and then they can either outright compromise your security, or sneakily do it using legalistic dodges like the one with friend-lists. When you combine that sort of thing with the tracking code running in your browser, your device is completely untrustworthy: it is not doing what you want; it is doing what a whole slew of unknown people want. If you thought that you were using your smart phone to do stuff that you want, you’re wrong – most of the cycles and traffic your smart phone generates are not spent doing what you want.
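The friend-list dodge works because consent is attached to a group (“your friends”) that the app itself can edit, and the group is only resolved when the data is sent. Here is a minimal sketch of that failure, with a hypothetical `ContactBook` and made-up entries. The “safer” variant, which only counts recipients the user added personally, is one possible mitigation assumed for illustration, not a description of any real platform.

```python
from dataclasses import dataclass, field


@dataclass
class ContactBook:
    contacts: list[str]
    # friend name -> who created the entry ("user", or the ID of the app that added it)
    friends: dict[str, str] = field(default_factory=dict)


def share_naive(book: ContactBook, user_said_ok: bool) -> dict[str, list[str]]:
    # Consent names a group ("your friends") that is resolved only at send time,
    # so whoever can edit the group decides where the data goes.
    if not user_said_ok:
        return {}
    return {name: list(book.contacts) for name in book.friends}


def share_user_added_only(book: ContactBook, user_said_ok: bool) -> dict[str, list[str]]:
    # Hypothetical mitigation: only entries the user created count as friends.
    if not user_said_ok:
        return {}
    return {
        name: list(book.contacts)
        for name, added_by in book.friends.items()
        if added_by == "user"
    }


book = ContactBook(contacts=["Alice 555-0101", "Bob 555-0102"], friends={"Carol": "user"})
# The sneaky app (hypothetical ID) quietly inserts itself before showing the prompt...
book.friends["foozum.com official site"] = "com.foozum.app"
# ...and the user clicks OK.
print(sorted(share_naive(book, True)))            # ['Carol', 'foozum.com official site']
print(sorted(share_user_added_only(book, True)))  # ['Carol']
```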

In a very real sense, it’s not your phone. It’s owned by a collection of marketing weasels (and a few government agencies) and it only incidentally does a few things for you now and then.

Think I’m kidding?

> More than three in four Android apps contain at least one third-party “tracker”, according to a new analysis of hundreds of apps.
>
> The study by French research organisation Exodus Privacy and Yale University’s Privacy Lab analysed the mobile apps for the signatures of 25 known trackers, which use various techniques to glean personal information about users to better target them for advertisements and services.
>
> Among the apps found to be using some sort of tracking plugin were some of the most popular apps on the Google Play Store, including Tinder, Spotify, Uber and OKCupid. All four apps use a service owned by Google, called Crashlytics, that primarily tracks app crash reports, but can also provide the ability to “get insight into your users, what they’re doing, and inject live social content to delight them.”
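A scan like the one described can be done by looking for known tracker code among an app’s compiled class names. The sketch below is loosely modeled on that idea and assumes the class names have already been extracted from the APK. The signature table is illustrative (the Crashlytics patterns use that SDK’s package names; “ExampleAds” is invented), and this is not Exodus Privacy’s actual code or signature format.

```python
import re

# Illustrative signature table: tracker name -> regex over fully qualified class names.
# "ExampleAds" is invented; a real scanner would load a maintained signature list.
TRACKER_SIGNATURES = {
    "Crashlytics": r"^(com\.crashlytics|io\.fabric)\.",
    "ExampleAds": r"^com\.example\.ads\.",
}


def find_trackers(class_names, signatures=TRACKER_SIGNATURES):
    """Return the names of all trackers whose signature matches some class name."""
    compiled = {name: re.compile(pattern) for name, pattern in signatures.items()}
    return {
        name
        for name, rx in compiled.items()
        if any(rx.search(cls) for cls in class_names)
    }


def share_with_trackers(apps):
    """Fraction of apps (app name -> class names) containing at least one tracker."""
    if not apps:
        return 0.0
    flagged = sum(1 for classes in apps.values() if find_trackers(classes))
    return flagged / len(apps)


# Made-up class listings for two hypothetical apps.
apps = {
    "dating": ["com.example.dating.MainActivity", "com.crashlytics.android.Crashlytics"],
    "notes": ["org.example.notes.Editor"],
}
print(find_trackers(apps["dating"]))  # {'Crashlytics'}
print(share_with_trackers(apps))      # 0.5
```

A “three in four apps” figure is just `share_with_trackers` run over a large enough sample.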

Delight me. (Picture me saying that in Samuel L. Jackson’s voice.) What they are doing is exporting your location, your browsing activity, what other apps you have installed, which apps you are running (so they can see what you run the most), your bookmarks, and anything else they can fool you into giving the app permission to access.

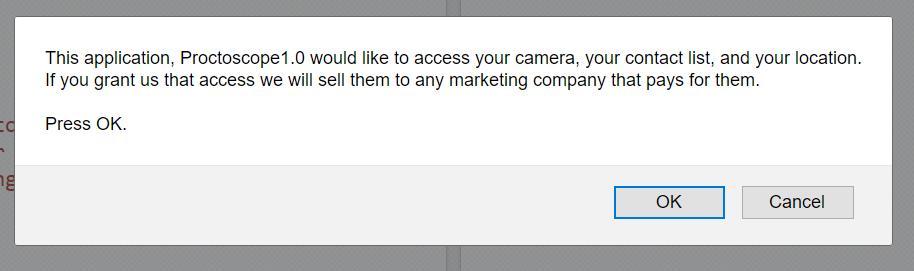

They tell you it’s OK because you gave permission, but it’s not – it makes your system untrustworthy. A trustworthy system would show a little pop-up with full disclosure, and an opt-in. You will never, ever see a message like that, because the people who are building these harvesting apps are not honest about what they are doing. A disclosure box would be honest; they’re sneaky. What does that tell you? They know they’re not trustworthy, too.

> Other less widely used trackers can go much further. One cited by Yale is FidZup, a French tracking provider with technology that can “detect the presence of mobile phones and therefore their owners” using ultrasonic tones. FidZup says it no longer uses that technology, however, since tracking users through simple wifi networks works just as well.
>
> The Yale researchers said: “FidZup’s practices closely resemble those of Teemo (formerly known as Databerries), the tracker company that was embroiled in scandal earlier this year for studying the geolocation of 10 million French citizens, and SafeGraph, who ‘collected 17tn location markers for 10m smartphones during [Thanksgiving] last year.’ Both of these trackers have been profiled by Privacy Lab and can be identified by Exodus scans.”

Remember: if they were being honest, they’d ask. But they’re not – they’re hiding this stuff in apps and gaming your approval with fine print in the End User Agreement – the End User Agreement saying, as Proctoscope says: “If you grant us that access we will sell everything you have to anyone who asks us nicely.”

This is not an edge-case scenario: Uber has allegedly stopped tracking customers after their trips end. Hint to smart phone users: when you’re done with any app that has access to your location, turn it off immediately. Besides, your phone will run faster and your battery will last longer because it’s not constantly updating Uber (or a dozen other providers) on what you’re up to.

FidZup’s and Teemo’s apps used to turn your microphone on and sample it constantly, listening for ultrasonic chirps emitted by location-tracking points. When the app heard a chirp, it would push the ID of the chirp up to Teemo’s cloud, which then knew your location very precisely. And you were wondering why your new phone’s battery life sucked: it was burning CPU like crazy doing a frequency analysis of everything coming in through the microphone. Or, may I say, “micropwn”?
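The frequency analysis doesn’t have to be a full spectrum: to notice a single beacon tone, an app can run the Goertzel algorithm, which measures the energy in one frequency bin. Here is a minimal sketch; the 19 kHz beacon frequency, 44.1 kHz sample rate, and 0.1 threshold are assumptions for illustration, not FidZup’s or Teemo’s actual parameters, and it only detects a tone’s presence rather than decoding a beacon ID. Even this cheap check, run on every audio frame, keeps the microphone open and the CPU busy.

```python
import math


def goertzel_power(samples, sample_rate, target_hz):
    """Squared magnitude of the DFT bin nearest target_hz (Goertzel algorithm)."""
    n = len(samples)
    k = round(n * target_hz / sample_rate)  # nearest DFT bin
    coeff = 2.0 * math.cos(2.0 * math.pi * k / n)
    s_prev = s_prev2 = 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    return s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2


def hears_beacon(samples, sample_rate=44100, beacon_hz=19000, threshold=0.1):
    """True if a large share of the frame's energy sits in the beacon's bin."""
    energy = sum(x * x for x in samples)
    if energy == 0:
        return False  # silence
    # A pure tone centred on the bin scores about 0.5; white noise averages about 1/n.
    score = goertzel_power(samples, sample_rate, beacon_hz) / (len(samples) * energy)
    return score > threshold


rate, n = 44100, 441  # 10 ms frames at 44.1 kHz
chirp = [math.sin(2 * math.pi * 19000 * i / rate) for i in range(n)]
hum = [math.sin(2 * math.pi * 1000 * i / rate) for i in range(n)]
print(hears_beacon(chirp), hears_beacon(hum))  # True False
```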

Don’t feel better because you’re using an iPhone (though Android is manifestly worse). Apple’s app store is not there to keep untrustworthy apps off your phone; it’s there to keep untrustworthy apps that crash your phone or make Apple look bad off your phone. That’s for a simple reason: Apple sells phones; Google sells ads. Google’s platform is optimized for Google’s purposes and Apple’s platform is optimized for Apple’s. Microsoft, of course, does the same thing for its own purposes, as does Facebook, etc.

Here’s another way of thinking about it: you already have an ‘app’ – it’s called “a browser.” If some organization wants you to run their app, that’s because they want to do something that your browser doesn’t facilitate. In some cases, that may be something really cool (I’m a fan of Hipstamatic, for example), but in most cases it’s that they want to collect a bunch of stuff that your browser would protect you from giving up so freely. Consider every app that ‘phones home’ to see if you’ve got messages (Snapchat, Hipstamatic, Instagram, Facebook, Spotify…): when it connects, what information is it transferring? At the very least, your location can be determined from the network address of your current access point (there are companies that sell that information). Remember when Google’s Street View cars went around taking pictures for the maps? They also mapped all the Wi-Fi access points they saw. So, if you’re coming from a carrier network, they have that data (they know the carrier), and if you’re using your meth-cooking buddy’s home Wi-Fi, they know that too, and will give that information to the FBI when the FBI asks about the devices that used your buddy’s access point.
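One common way this works is Wi-Fi positioning: the app reports the hardware addresses (BSSIDs) of nearby access points, and a server looks them up in a database built by driving around and mapping them. Below is a minimal sketch of the server side, assuming a hypothetical database with made-up entries and a crude signal-strength-weighted centroid; commercial location services use their own, more elaborate models.

```python
# Hypothetical access-point database (BSSID -> latitude, longitude); entries are made up.
AP_DATABASE = {
    "00:11:22:33:44:55": (40.7128, -74.0060),
    "66:77:88:99:aa:bb": (40.7130, -74.0055),
    "cc:dd:ee:ff:00:11": (40.7125, -74.0065),
}


def estimate_location(scan, db=AP_DATABASE):
    """Weighted centroid of the known APs in a scan of (bssid, rssi_dbm) pairs.

    Weights convert dBm to milliwatts, so stronger (presumably closer) APs dominate.
    This weighting is a crude heuristic chosen for illustration.
    """
    total = lat_sum = lon_sum = 0.0
    for bssid, rssi_dbm in scan:
        if bssid not in db:
            continue  # unknown AP: contributes nothing
        lat, lon = db[bssid]
        weight = 10 ** (rssi_dbm / 10)
        total += weight
        lat_sum += weight * lat
        lon_sum += weight * lon
    if total == 0:
        return None  # no known APs in the scan
    return lat_sum / total, lon_sum / total


# What a phone might report when an app "phones home" (made-up scan).
scan = [("00:11:22:33:44:55", -45), ("66:77:88:99:aa:bb", -70), ("de:ad:be:ef:00:00", -60)]
print(estimate_location(scan))
```

Note that the user never typed anything: the list of visible access points alone is enough to place the phone.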


So, there is a vast infrastructure of sneaky, nasty, deceptive code that is deployed by marketers to infect your browser so they can track everything you are doing. This reduces your ability to trust your browser tremendously, since you (naturally!) have no idea what it’s doing: it is not your browser. And, there is a similar vast infrastructure of evil running on your smartphone, sucking your battery life, tracking your location, monitoring the sounds around you, and eating your bandwidth and performance to transmit all that to dozens of companies: it is not your smartphone.

You’re just paying for it.

Android: first off, it’s an operating system platform produced by a marketing company. Naturally, it’s going to facilitate the delivery of advertisements. But beyond that, there is a fascinating tale of deep technical cluelessness and hubris. The smart guys at Google (and they have some smart guys!) (excepting James Damore) came up with one of the worst possible operating system distribution models for smart phones: they release the entire core software and let device makers add whatever they want, without a clear dividing line between what is Android and what is device-maker-modified Android. So the device maker adds some fancy thingie specific to their device, and codes a couple of security holes into it: now there is a security flaw that’s not Google’s problem and (since it’s not Google’s code) it’s only going to get fixed if the device maker gets around to it. Worse, what if the device maker alters something that Google also alters – which version do you wind up with? That’s an open question. What if Google fixed a bug in a cryptographic processing routine, and the device manufacturer had its own version and doesn’t patch the Google fix back into its code base?

Essentially, it’s the world’s worst operating system distribution model since the original BSD UNIX distributions, where everyone hacked away at it and sort of converged on a standardized wad of bugs, eventually. But that only happened after the UNIX-based vendors had so thoroughly crapped all over the market that Microsoft wound up becoming the dominant player. In cynical moments I think Google actually did Android as a way of driving a bunch of cell phone makers completely crazy (look what it did to Amazon’s phone). I simply cannot believe Google did Android’s software model by accident, when they basically solved system administration for their own servers using a distributed form of configuration management. They could have done that with the phone O/S, but it would have taken a bunch of additional thinking to identify which interfaces the device makers could play with and which they couldn’t, block the latter, and then devise a binary patching system atop that, so that the device makers could patch their crap and Google could patch their crap and all the crap would be patched.
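Here’s a toy model of the distribution problem: platform and vendor code as dictionaries of file path to version, where the vendor layer wins on conflict. The paths and version strings are hypothetical, and real Android builds are not a simple overlay like this; the point is only to show how a vendor copy silently shadows a platform fix, and what an allow-list of vendor-modifiable interfaces would catch.

```python
def resolve(*layers: dict[str, str]) -> dict[str, str]:
    """Overlay file layers in order; later layers win on conflicts."""
    merged: dict[str, str] = {}
    for layer in layers:
        merged.update(layer)
    return merged


def shadowed_fixes(old_base, new_base, vendor):
    """Paths the platform changed that the vendor layer still overrides."""
    return sorted(
        path
        for path, version in new_base.items()
        if version != old_base.get(path) and path in vendor
    )


def forbidden_overrides(vendor, allowed_prefixes):
    """Vendor-modified paths outside the interfaces vendors are allowed to touch."""
    return sorted(p for p in vendor if not p.startswith(allowed_prefixes))


# Hypothetical paths and versions.
google_v1 = {"crypto/verify.c": "v1 (buggy)", "ui/launcher.c": "v1"}
vendor = {"crypto/verify.c": "v1 (buggy) + vendor tweak", "ui/launcher.c": "vendor skin"}
google_v2 = {"crypto/verify.c": "v2 (fixed)", "ui/launcher.c": "v1"}

shipped = resolve(google_v2, vendor)
print(shipped["crypto/verify.c"])                    # v1 (buggy) + vendor tweak
print(shadowed_fixes(google_v1, google_v2, vendor))  # ['crypto/verify.c']
print(forbidden_overrides(vendor, ("ui/",)))         # ['crypto/verify.c']
```

If vendors could only override paths under `ui/`, the crypto fix could never have been shadowed in the first place.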

“Privacy Lab:” Why have a privacy lab? There is no privacy anymore. It’s like having a Dodo Bird Lab.

Hey, here’s one:

- ~~Collect underpants~~ Use the accelerometers on the phone to have an app that figures out when you’re having sex. Based on, you know, characteristic movements. And then you can push that to the cloud and sell it to companies that home-deliver pizza, cigarettes, and red wine. And you can cross-match with nearby phones to figure out who the person’s partners are, then sell that to Amazon as input into their “Big Data” mine.

- ??

- Profit!

Yeah, I have a Windows phone (partly) on the basis that it’s probably the least-worst of the available smartphone options from this perspective… Plus, I’m less tempted to install all sorts of dodgy apps because almost nobody supports my phone. Bonus!

There’s no way in hell I’m using a phone (or a browser) from Google.

Yes, that’s right kids, we’re now at the point where Microsoft are the lesser evil.

Umm…people are carrying their cell phones … somewhere? … while they’re having sex?

I’m pretty sure that meets the definition of “doing it wrong.”

brucegee1962@#2:

> Umm…people are carrying their cell phones … somewhere? … while they’re having sex?

Darn, WordPress doesn’t seem to support the “I am just being goofy” tag.

Dunc@#1:

I used a flip-phone until 2009.

Isn’t a cicada’s strategy to get out of the predation ecosystem by not being there for a long time, and then only being prey for brief times on long intervals? Eventually that’s where some of this is going to go: turn your phone off when it’s not in use and put it in a faraday bag.

http://mysteriousuniverse.org/2017/11/make-a-faraday-cage-out-of-a-chip-bag-and-hide-from-surveillance/

I have an extremely basic flip phone, it’s not ‘smart’ in any way. It has a basic browser, which I have never used. There are zero apps on it.

On my tablet, I keep apps to a minimum and don’t allow auto updating. The only time I’m online with it is to download books, and once that’s done, I’m offline again.

When I brought Athena home (my latest computer), I was disgusted to see a screen full of ‘apps! apps! apps!’. Got rid of that screen, went through the system program and got rid of all the shit I didn’t want.

brucegee1962:

That was what people call a joke. That said, people have sex in interestin’ places all the damn time. Even if people are in their own houses having sex, it’s likely their cellphones aren’t far away, say like on a bedside table or something. FFS, get an imagination booster.

Marcus:

Mine’s almost always off. Got to the point Rick stopped trying to call me, and just emailed.

I underutilize my smartphone. I make perhaps a few phone calls a month, a few text messages a month, and it notified me when I got an e-mail. I didn’t read the e-mail on the phone, I just waited until I was at my computer, but it was nice to know that I had received one.

Therefore I had a pretty small data package from my telephone service company, a couple hundred megabytes per month. But the Android phone was eating up that data limit without my doing anything. It was not serving me, it was serving Google. This wasn’t due to any fancy third-party apps; it was the most basic built-in apps that a smartphone has.

Now I keep my cellular data link turned off. I still receive phone calls, but I don’t receive text messages in a timely manner.

Caine@#7:

> Mine’s almost always off. Got to the point Rick stopped trying to call me, and just emailed.

When I switch to making my communications asynchronous I get a lot more work done. No interruptions.

One of the end games with all this stuff will be browse-devices. I know an iPad is getting close, but that’s for now – soon there will be too many tracking apps. It would be possible to make a $100 device running a Raspberry Pi with an SELinux kernel that could browse and would probably be pretty hard for the police state to get into. All that’s left is to make a mesh VPN configuration with big pre-exchanged static keys and you’ve got a “resistance communication terminal” that is subpoena-resistant and semi-stealthy. A friend of mine and I were nearly contracted to build such a device for anti-government resisters until we discovered that our client was not exactly interested in promoting democracy, and we backed out. Eventually need will compel the development of such a thing. I am too interested in other projects, or I’d consider doing it just for art’s sake. But I don’t think that the internet’s users have figured out how badly screwed they are – yet. The more aggressive resisters have. I’d be surprised if anyone with a brain is using the internet to coordinate anything anti-government…

Reginald Selkirk@#8:

> But the Android phone was eating up that data limit without my doing anything. It was not serving me, it was serving Google.

Bingo. They really should sell lockable ankle straps for smart phones.

(Hm now there’s an art project that involves metal-work and welding.)

I have a phone for work that is never off. And I mean never. I only turn it off if I’m on vacation AND overseas. I’ve only put medical apps on it, but it came from Motorola with many apps already installed. The medical community hereabouts uses Tiger Text for texting. How is it possible for my phone to ever truly be HIPAA compliant?

This sounds like an interesting little project. What browser would you suggest as being trustworthy enough to put in it?

The birthplace of the Illuminati

Marcus, not for nothin’, why don’t you and a few of your comrades in cybersecurity whip up a trackerless device? Gotta be a market?

Yes, yes, I know you are very, very busy, what with The Little Iron Schoolhouse project and all …

So you’re saying the snitch lives in our pocket.

You can’t “whip up a trackerless device”. Tracking is so deeply embedded in the code that makes most of the websites you use work that you can’t strip it out without breaking everything else.

The best you can reasonably manage is probably TAILS, although Tor networking flags you for additional tracking… But even then, you can’t interact with anything without that interaction being tracked. If you want to actually do anything with your device, you can’t avoid that being trackable. It’s much like meatspace in that regard. It’s like asking to be able to go about your life without anybody remembering that they’ve met you.

Regarding phones, the very nature of a mobile phone (or cell phone, perhaps a better term) means it has to know where it is for it to work, which entails the network also knows where it is.

(So ‘dumb’ phones offer little solace, even if only the service provider has direct access.)

That is painfully close to the truth.

> turn your phone off when it’s not in use and put it in a faraday bag

Isn’t it enough to just turn it off and take out the battery? Do I really need the bag?

Putting it in a Faraday bag is way simpler than taking out the battery.

Australian man uses snack bags as Faraday cage to block tracking by employer

leva @20, it’s probably easier to turn the phone off and isolate it than it is to physically remove the battery, at least with some models. The isolation is because a phone could appear to be off to the user while still running in actuality. They’re fully electronic devices, not old-fashioned electromechanical.

@22

Option #1. Go to a shop. Buy a pack of junk food. Throw out the food (I don’t eat this stuff). Wash the bag. Dry the bag. Store the bag in a place where I don’t lose it (otherwise I am back to step one). Time spent: a lot.

Option #2. Take out the battery. Time spent: about 2 seconds. (I refuse to buy a phone without a removable battery. I normally use my electronics longer than Apple’s planned obsolescence period.)

And, yes, I understand that simply turning off the device is not enough.

leva, just evert the bag. :)

Y’all don’t have a spare tinfoil hat handy to wrap your phone in?

Lofty

… we have only aluminum foil or aluminium foil …

I dunno

I reckon we’re all just fucked

chigau, aluminium foil will work just fine.

(You’re good!)

“There are always eccentrics who deny that the ~~tinfoil hat~~ aluminum foil phone-baggy is absolutely essential to prevent ~~Baldrick’s demons~~ Google taking over their ~~minds~~ battery life.” – Paraphrasing a quote by a character from The Salvation War trilogy, written by Stuart Slade

http://tvtropes.org/pmwiki/pmwiki.php/Literature/TheSalvationWar?from=Main.TheSalvationWar