Elon Musk is in the news again, for worrying out loud about the AI that we may create that will kill us all.

Musk, at least, can claim to have his priorities somewhat straight, which is to say:

- CO2 emissions

- Diversifying off Earth

- The difficulty of finding parking in San Francisco and Los Angeles

- Artificial Intelligence wiping us out

Musk’s spending his time and effort trying to get things done toward the problems he’s identified, instead of trying to use his money to influence elections, or build the world’s tallest yacht, or whatever. I don’t care how he chooses to waste his time, but he’s wasting ours and that annoys me a little bit.

(spoiler: this is not going to happen)

It’s interesting how many fairly smart people get worried about AI wiping us out. Hawking, Musk, Kurzweil, Joy, the list goes on and I’m not on it, which probably means I am neither smart nor rich. [vf] I have, however, worked on and studied AI on and off since the 80s, and I’ve been a gamer and student of the art of war since I was a child. Usually whenever I say something critical of AI I have to dispense with two counter-arguments, so I’ll try to get over them first: 1) “What do you know about AI, anyhow?” and 2) “Yeah, but: evolution.”

1) What do I know about AI? Aside from being one since I first booted up back in 1962, I’ve periodically refreshed my interest in the field off and on since 1994, when Rumelhart and McClelland’s Parallel Distributed Processing books first came out [amazon], and a bit before then when I explored using neural networks to train a system log analysis engine. There was also an unfortunate series of experiences with chatbots in the late 80s, including my own implementation of Eliza, which I logged in to a MUD, discovering – to my increasing distress – that some young nerd-males will try to make out with anything that seems interested in them. I was a younger, more energetic programmer, then, and did some of my own implementations of neural nets and Markov chains, always from the point of view of “faster is better”, but I was repeatedly unimpressed.

AI comes up again and again. In the computer security field, right now, it’s a great big marketing check-box called “machine learning” (now that the “big data” fad has burned to completion, customers are desperate for something to help them analyze the “big data” they expensively collected because Gartner told them to). My practical experience with machine learning goes back to the early days of building firewalls: if your firewall encounters a situation it does not understand, you ask the user “allow or deny? [yes/no/yes always/no never]” and remember what the user told you. You can build remarkably expressive rule-sets that way while having no understanding at all of what your underlying system is doing. I call that the “ask your mother” learning algorithm, which is one that I was trained with during the middle period of my boot-up.
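As a rough sketch of the “ask your mother” idea, the loop might look like the following. Everything named here is made up for illustration (the class, the `decide` method, the `(source, port)` key, and the assumption that only the “always/never” answers are remembered); it is not taken from any real firewall.

```python
from typing import Callable, Dict, Tuple

# Hypothetical names throughout; this is an illustration, not a real firewall API.
# An answer is one of: "yes", "no", "yes always", "no never".
Answer = str


class AskYourMotherFirewall:
    def __init__(self, ask: Callable[[str, int], Answer]) -> None:
        self.ask = ask  # how we consult "mother" (the user)
        self.rules: Dict[Tuple[str, int], bool] = {}  # remembered verdicts

    def decide(self, source: str, port: int) -> bool:
        key = (source, port)
        if key in self.rules:
            # Seen before: replay the stored verdict, no understanding needed.
            return self.rules[key]
        answer = self.ask(source, port)
        allowed = answer.startswith("yes")
        if answer in ("yes always", "no never"):
            # Assumption: only the "always/never" answers become rules.
            self.rules[key] = allowed
        return allowed
```

The rule-set grows one answer at a time, and at no point does the code know what a port or a packet is. That is the whole trick.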

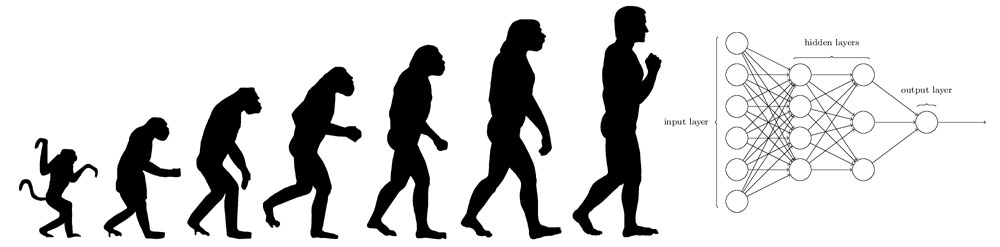

random “machine learning” to detect malware slide from google image search

The current state of the art in AI is various forms of statistical finite state machines. There are many, many implementation details, but for most purposes they consist of an analysis module and a training set that is intended to program the analysis module to develop its own internal rules for how to respond to future situations. The training set is a bunch of data that represents past situations (as data) and the future situations are going to be represented using similar data. The AI is trained to discriminate within the training sets, and is then told what outputs to produce based on that discrimination. Then, when the system goes operational, it’s given more data (in a form similar to the training sets) and it produces the outputs it was trained to produce based on its discriminations. That’s the state of the art and it’s pretty cool. But it’s more like “fuzzy pattern matching” (fuzzy logic and AI are Arkansas cousins) and it doesn’t bear much resemblance to “thinking” – it bears some resemblance to the rough pattern-matching that goes on in our visual memory and face recognition subsystems in our brains. In fact, current AIs do a pretty fair job of exactly that sort of thing: they can recognize speech, discriminate faces, reprocess a photograph to produce an output of “brush strokes” that are statistically similar to a model created using Van Gogh’s brush strokes. Such an AI, like a meat-based AI, is a product of its training set (“experiences”) and what it learns from them, almost more so than its underlying implementation. It doesn’t matter if you’re using an array of Markov chain-matching finite state machines, or a back-propagating neural network, or an array of Bayesian classifiers: what you get out of it is going to be statistically similar (if not identical!) to the training set and how it was told to behave when it recognizes something in the training set. It’s not quite “garbage in, garbage out”; it’s more like “whatever in, statistically similar to whatever out.”
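To make “whatever in, statistically similar to whatever out” concrete, here is a toy nearest-centroid classifier. It is a stand-in picked for brevity, not one of the techniques named above, and the two-number feature vectors and the labels are invented for the example.

```python
from collections import defaultdict
from math import dist  # Python 3.8+


def train(examples):
    """Average the feature vectors for each label (one centroid per label)."""
    grouped = defaultdict(list)
    for features, label in examples:
        grouped[label].append(features)
    return {
        label: [sum(column) / len(rows) for column in zip(*rows)]
        for label, rows in grouped.items()
    }


def classify(model, features):
    """Return the label whose centroid is closest to the new input."""
    return min(model, key=lambda label: dist(model[label], features))


# Invented training set: (failed_logins, outbound_connections) -> label.
training = [((0, 1), "benign"), ((1, 0), "benign"),
            ((9, 8), "malware"), ((8, 9), "malware")]
model = train(training)

# Same code, same data points, but the "parent" swapped the labels.
swapped = [(features, "benign" if label == "malware" else "malware")
           for features, label in training]
swapped_model = train(swapped)
```

Calling `classify(model, (7, 7))` and `classify(swapped_model, (7, 7))` gives opposite answers for the same input. The analysis module did not change; only what it was told about the past did.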

[source]

Still pretty good summary of the state of the art, unfortunately

2) But what about evolution? At TomCon a couple weeks ago, Mike Poor and I had a rollicking debate for hours about whether or not it was possible to make systems that would artificially evolve to be more intelligent than us. Oddly, neither of us was arguing for the positive or the negative; we were mostly trying to figure out our own beliefs about the question, which turned out to be surprisingly complicated. The naive version is “if I take 2 chess playing programs, each of which knows how to randomly generate only legal moves, and never forgets a game, but which both know what ‘winning’ means, can’t I just have them play very fast against each other until they both figure out what all the losing games look like… I’ll have an unbeatable chess player in a few years.” Simple search-space exhaustion will result in the programs starting to win more and more and play better and better. The reason this idea seems initially compelling is because that’s sort of how meat-based AIs like Bobby Fischer learn to play chess. They do not start out great: they play a huge number of bad games, then get better and better and eventually they are better than their teacher. This isn’t just a specious point: evolution guarantees that such a system will play just well enough to beat its competitor. If its competitor is also evolving, they will each co-evolve to be just good enough to beat each other, endlessly improving just a tiny bit, assuming no environmental changes. That works if your problem-space can be boiled down to some simple meta-rules that define success and failure.

It’s the implementation details that kill you, unfortunately: Bobby Fischer doesn’t have time to play the 121 million+ possible games that follow the 3rd move on a chessboard. It’s actually hard to even calculate the number of possible games, because it’s such a huge number. Once you’ve played ten moves into a game there’s a good chance that you’re playing a game that has never been played before. So what about evolution? Evolution gets to set the bar low: all you have to do is breed – if you were trying to evolve a system that avoided fool’s checkmates you could probably do that exhaustively (let’s say, the first 4 possible moves) in a few seconds. But Bobby Fischer wouldn’t have fallen for any known fool’s mate, ever, because he’s using deeper rules. The purely evolutionary approach would be described as “exhausting all the possible bad games” but what we want is to be able to play only the good games. The problem is, in order to know a game is good, you have to play it all the way to the end. Fischer doesn’t have to play it all the way to the end.
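For a sense of scale, exhaustive play really is feasible when the game is tiny. The sketch below enumerates every complete game of tic-tac-toe by brute force; tic-tac-toe is picked here purely for illustration and is not part of the chess argument itself.

```python
# Every row, column, and diagonal on a 3x3 board, as index triples.
WIN_LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
             (0, 3, 6), (1, 4, 7), (2, 5, 8),
             (0, 4, 8), (2, 4, 6)]


def winner(board):
    """Return "X" or "O" if someone has three in a row, else None."""
    for a, b, c in WIN_LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None


def count_games(board, player):
    """Count every distinct complete game reachable from this position."""
    if winner(board) or " " not in board:
        return 1  # the game is over: someone won, or the board is full
    total = 0
    for square in range(9):
        if board[square] == " ":
            board[square] = player
            total += count_games(board, "O" if player == "X" else "X")
            board[square] = " "  # undo the move before trying the next one
    return total


total_games = count_games([" "] * 9, "X")
```

The whole space comes to 255,168 games, which a laptop can walk through quickly. Chess is already past a hundred million games a few moves in, so the same brute-force walk never gets anywhere near the end.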

I’m taking a very “meta-” approach to this problem and maybe there are a few AI researchers howling and hammering on their keyboards right now, but please bear with me a little longer. My argument is that, for some complex games like chess or go, you need more than just a definition of “winning” and the patience to avoid defeat: for complex games you want meta-rules that allow you to avoid whole gigantic clusters of bad moves. For example, my dad taught me that “developing your queen early is generally a bad move” – if I accepted that reasoning, and my chess-playing AI favored keeping the queen back until there is enough room for her to maneuver, I have ‘pruned’ billions times trillions of losing games out of my space of possible games. Two more things: we could call those meta-rules “understanding the game” – the degree to which I have a good set of those meta-rules is the degree to which I ‘understand’ how chess is played. I don’t care whether it’s a meat-based AI like Bobby Fischer that has those rules, or if it’s a silicon-based AI like Deep Blue. The next thing is: where did that rule come from? When my dad told me that meta-rule regarding queens, he increased my understanding of chess, and he was my expert. I am not simply an artificial intelligence playing chess, I am an expert system that has accepted some externally-provided meta-rules and, based on the richness and expressiveness of those meta-rules, I’m able to evolve my own individual plays (as long as they work within that meta-framework) and I can evolve from there.
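The arithmetic behind “pruning” is worth a quick back-of-the-envelope check. The sketch below uses a uniform toy tree, and every number in it is an assumption chosen for illustration: 30 moves per turn, games 20 plies deep, and a meta-rule that rules out 3 of those moves during the first 10 plies. Real chess is not this regular.

```python
def leaf_count(branching, depth, pruned_per_ply=0, rule_applies_for=0):
    """Number of complete games in a uniform toy tree.

    For the first `rule_applies_for` plies, a meta-rule removes
    `pruned_per_ply` of the available moves from consideration.
    """
    total = 1
    for ply in range(depth):
        options = branching
        if ply < rule_applies_for:
            options -= pruned_per_ply
        total *= options
    return total


# All four numbers below are made up for the example.
full = leaf_count(30, 20)
pruned = leaf_count(30, 20, pruned_per_ply=3, rule_applies_for=10)
games_never_examined = full - pruned
```

With these made-up numbers, one modest rule removes roughly 65% of the tree, on the order of 10^29 games, without a single one of them being played. That is comfortably more than “billions times trillions.”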

It seems to me that that’s how meat-based AIs learn things, so I’m comfortable that the model I’ve described above doesn’t contradict how we observe reality to work. That doesn’t mean it’s right, it’s just not obviously (to me) wrong. I also observe that some AIs, regardless of substrate, experience different styles of learning at different points in their boot-up process. When a human AI first boots up it tends to be accepting of meta-rules from authority figures. That makes complete sense to me; one of my favorite meta-rules was “do not put jelly beans up your nose.” Early on, I accepted that rule but explored the option-space around it and evolved my own rule, “do not put cinnamon red-hots up your nose.” Much awkwardness could have been spared if my parents had offered a broader meta-rule while I was still in the stage of my boot-up where I accepted rules more easily. Silicon AIs unquestioningly accept their training sets as well as unquestioningly accepting any meta-rules given to them by their authority figures. This means that training an AI is pretty efficient: it won’t ask you “why?” all the time.

That seems to be another property of AIs in general: some approaches favor weighting early learning as more important, while others favor later learning. In training an AI neural network, the “parent” has to be careful to give it the right training sets, both to prevent “overfitting” (where the neural network memorizes its training examples instead of learning the pattern behind them) and to avoid having too little training data to produce a reliable output. Some neural networks are coded to exhibit cognitive bias toward detecting patterns based on earlier input or later input. In human meat-based AIs we modulate our learning and pattern-detection based on the levels of neurotransmitters in the meat, which are – in turn – modified by things like the presence of adrenaline. In the software-based neural networks, there is no “pain” associated with learning various trial sets over and over again; but that’s only because the AIs haven’t been programmed with a sensation of boredom or being under threat. In both there’s a reward-loop: successful recognition of a pattern or winning move feeds back into the system with a little shock of pleasure (if happy < MAX_HAPPY then happy++).

Let’s go back to those meta-rules: what if, when dad taught me “don’t develop your queen too early” it was a rule that pruned not only a gigantic number of bad games, but a very small number of the absolute best games that will never be played because of that meta-rule? That’s not an attempt to make a specious argument, I swear – there’s a problem that a learning system will either be:

- Entirely without experts

- Limited by the experts

If you’ve followed me this far, you can see those are the only two choices: either the system is nothing more than the totality (plus rearrangements) of what went into it, or it’s not. If it’s the former, then “what went into it” is initially chosen by the experts or parents. A system that is entirely without experts is entirely without limits but is going to have to make an awful awful lot of mistakes. A system that’s limited by the experts is going to have tough going surpassing those experts unless there is some way of synergistically combining the expert knowledge, or the AI has opportunity to “experiment” and gets lucky and invents something new that the experts haven’t thought of. What does “experiment” mean? Let’s imagine that there’s a capability within the AI that shuffles options within the known space of options, and chooses one – I call that the “hypothesizer” – semi-randomly. I say it’s “semi-randomly” because it’s constrained within the known space of options: let’s say that we have a very small space of options: teletubbies, pet, kick, lick, eat, barney, dogs, cats. Our hypothesizer might come up with “kick cats” or “dogs eat teletubbies” or whatever. Then, the output from the hypothesizer gets applied against the classification system that has been trained to recognize ideas that are likely to be popular and practical. I believe that’s actually a fairly good model for how “creativity” works in general AIs including meat-based ones like myself.
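Here is a minimal sketch of the hypothesizer-plus-filter loop, using the option space from the paragraph above. The split into nouns and verbs, the 50/50 choice of sentence shape, and the hand-written “disliked” list standing in for a trained popularity classifier are all assumptions made for the example.

```python
import random

# The words come from the text; how they are grouped is an assumption.
NOUNS = ["teletubbies", "barney", "dogs", "cats"]
VERBS = ["pet", "kick", "lick", "eat"]
DISLIKED = {"kick", "eat"}  # hand-written stand-in for a trained classifier


def hypothesize(rng):
    """Pick semi-randomly: a free choice, but only inside the known options."""
    if rng.random() < 0.5:
        return [rng.choice(VERBS), rng.choice(NOUNS)]  # e.g. "kick cats"
    # e.g. "dogs eat teletubbies"
    return [rng.choice(NOUNS), rng.choice(VERBS), rng.choice(NOUNS)]


def looks_popular(idea):
    """The filter: reject any idea that contains a disliked word."""
    return not any(word in DISLIKED for word in idea)


def create(rng, attempts=100):
    """Generate many hypotheses and keep only the ones the filter accepts."""
    candidates = [hypothesize(rng) for _ in range(attempts)]
    return [" ".join(idea) for idea in candidates if looks_popular(idea)]


ideas = create(random.Random(0))
```

Nothing in `ideas` can ever contain a word outside the eight it started with, and nothing survives that the filter was not taught to like. Both limits come from whoever wrote the lists.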

Consider the story of the AI that makes up paint names [ars] – it’s a trivial example of a neural network or

[ars]

Shane told Ars that she chose a neural network algorithm called char-rnn, which predicts the next character in a sequence. So basically the algorithm was working on two tasks: coming up with sequences of letters to form color names, and coming up with sequences of numbers that map to an RGB value.

There’s the deep rule (tying characters to RGB values) which limits the hypothesizer in terms of what it can generate. If she’d wanted to make it work, she’d have trained another AI with a big dataset of popular paint names, and then classified the outputs of the hypothesizer against the popularity classifier. This illustrates

I painted my walls “WTF red” [alibaba]

This has all been a very roundabout way of making an argument that: most of what we call ‘AI’ are actually expert systems. And I’ll go further and say that the expertise in the systems is pretty much wired into them: they’re fairly predictable given their training inputs and their expected outputs. After all, that’s what they are for: we want a picture-recognizer to pretty much always recognize the same person the same way. We can’t have it flip back and forth between Socrates and PZ Myers.

(my mad photoshop skills)

(By the way, a current state-of-the-art AI would discern a difference between those two characters because Socrates is awfully white. Unless an expert programmed the face recognizer with deeper rules that counted luminosity as less important than beard length, it is going to classify based on the best fit difference. The more deep rules programmed into the recognizer the more of an expert system it is, depending on the assumptions of the expert. Which is fine as long as the expert doesn’t suck.)

The value, in other words, of the AIs that we’re building (including the meat-based ones) is that they’re fairly predictable and consistent in terms of inputs and outputs.

Now I am ready to discuss why I don’t think we’re going to see hyper-intelligent AIs, let alone a hyper-intelligent AI that comes up with the idea of “kill all the humans” and pulls it off.

Chess-playing programs depend greatly on expert inputs, because the search-space of chess is too big to exhaustively prune losing games. A general purpose hyper-intelligent AI would require experts to tutor it into hyper-intelligence. John Von Neumann did. Plato did. Alexander did. Napoleon Bonaparte did. If there were an AI that started hypothesizing new maths, it would need a recognizer that will tell it “that’s right!” or it would need a complete epistemology for math that it can check against, or it’s going to top out just a bit past where its human trainers do. Of course we can take two such AIs and have them train each other, but we might just wind up with two hyper-fast imbeciles that have established a self-rewarding echo chamber: how would we know? I have often commented that William Buckley was a “public intellectual” because he sounds like how stupid people think smart people sound. What if we had an AI that was a sort of hyper-intelligent deepity-slinger like a fusion-powered Deepak Chopra on meth? It’d need qualified human experts to tell it whether it was full of shit or not. No matter how you slice it, it would not be able to be much more than a much faster, slightly weird and more creative human. Sure, if you had John Von Neumann teach it math, and Richard Feynman physics, and John Coltrane saxophone, it’d be like a massively fast Von Neumann that never forgot anything (we already had one of those, it was called “John Von Neumann”) and its saxophone playing would sound a lot like John Coltrane with maybe all the mathematical errors fixed by the Von Neumann training set. It still would not wake up one morning with the idea “kill all the humans” unless trickster god Feynman had already put that idea in its mind, for fun.

War is hard; it’s really hard. As Napoleon said, it’s not just maneuvering and tactics, it’s logistics. Humans are really good at it because it’s one of the important things that we do. A hyper-intelligent AI would probably absorb Sun Tzu and surrender. Here’s why: it’d have nothing to go on but human expertise. Human experts say “never fight a land war in Asia” as a deep rule, but Bonaparte tried and so did Genghis Khan. Human military strategy is a complete mish-mosh of special cases, because doing the average thing in a war gets you killed. And that’d be the first and obvious option for the AI. If there were some brilliant always-true hyper-successful military strategy that the hyper-intelligent AI could figure out, I absolutely know that the young Bonaparte would have figured it out already. We are a species that produces military dipshits like William Westmoreland on a regular basis, but we also cough up the occasional Ho Chi Minh or Alexander. People like Elon Musk who are worried that some AI will wipe us out simply haven’t thought very hard about what wiping humanity out would entail. The worst case scenario would be an evolutionary bottleneck in which the AI wiped out a bunch of humans and served as an expert trainer in the art of war to the rest.

The AI that tries to wipe us out is going to be a faster-thinking tactician, for sure, but it would only be able to do what Wellington would have done (at best) or perhaps what John Coltrane would do in a particular military situation. And the fascinating thing about warfare is there’s no training ground and there are no “do overs” – the great military geniuses are the ones that innovated, moved fast, and didn’t make any mistakes at all until they decided “hey let’s march on Moscow!” A hyper-intelligent AI that decided to wipe out humanity would either already have embedded deep rules like “never fight a land war in Asia unless you’re Genghis Khan,” “don’t march on Moscow,” and “don’t try to wipe out all the humans,” or it’d never get a chance to update its training set.

You want to wipe out all the humans? Give them nuclear weapons and lots of fossil fuels and sit back and watch. Or, make everyone question their own epistemology until they just sit there, inert, and starve.

I am not current with the state of chess playing, though I gather that it continues as an art-form and silicon-based chess players have pulled away from the best humans and the current champions would probably demolish Deep Blue, the famous chess program that beat Garry Kasparov in 1997. [wikipedia] Since we now have master-class chess programs playing each other, do we expect a super-master-class to evolve? If not, why not? That’s a silly question, really, but its implications are not: if we expect AI to suddenly produce super-human intelligence with a strategic sense capable of eradicating mankind, where would it arise first? Would our spam filters turn on us first, or our network management tools, or our chess-playing programs?

Consider neural networks as a 2-dimensional array of Markov chains. Consider Markov chains as a vector of Bayesian classifiers. Consider Bayesian classifiers as a flat probability dice-roll against a table of previous events.
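The “dice-roll against a table of previous events” can be sketched in a few lines as a first-order, character-level Markov chain. Treating single characters as the events and weighting the roll by how often each follower was seen are assumptions made for the example, and the function names are invented.

```python
import random
from collections import Counter, defaultdict


def build_table(text):
    """Table of previous events: for each character, what followed it and how often."""
    table = defaultdict(Counter)
    for current, following in zip(text, text[1:]):
        table[current][following] += 1
    return table


def roll(table, current, rng):
    """Weighted dice-roll against the table; None if nothing ever followed `current`."""
    followers = table.get(current)
    if not followers:
        return None
    chars = list(followers)
    weights = [followers[c] for c in chars]
    return rng.choices(chars, weights=weights, k=1)[0]


def generate(table, start, length, rng):
    """Chain dice-rolls together, stopping early at a dead end."""
    out = [start]
    while len(out) < length:
        following = roll(table, out[-1], rng)
        if following is None:
            break
        out.append(following)
    return "".join(out)


table = build_table("abababac")
sample = generate(table, "b", 5, random.Random(0))
```

Every adjacent pair of characters in `sample` is a pair that already occurred in the training text. The chain can rearrange its past; it cannot step outside it.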

My describing most AIs as “finite state machines” is probably going to set a few people’s teeth on edge. But: think about it. Even we humans are finite state machines. You can be a very very very complicated finite state machine and: you’re a Turing machine!

The reason the two super hyper great chess playing programs played such a long game is because there was no expert available to teach either of them how to end-game a hyper great chess player. There’s no expert available to teach an AI how to beat Napoleon Bonaparte, either.

IBM is building silicon that does parts of what brains do: [ars] It’s a pretty cool thing: speed up the stuff that can be sped up. Our brains do that with facial recognition; we appear to have ‘hardware’ that does some of the heavy lifting quickly, before dumping rough ideas of the recognizer’s output into our memory retrieval and matching software. It’s good stuff.

Another answer for the question “where do new ideas come from” is in Cziko’s book Without Miracles [amazon]. His idea of “universal selection theory” is similar to my idea of the hypothesizer+filter except he argues (rightly!) that evolution has adequate explanatory power: bad ideas die, good ideas succeed. It explains why vuvuzelas are a gone thing, now, but toasters are not.

At what point is back-training a neural network that it made a mistake “abuse”?

This would seem to add weight to the ‘humans doing shifty stuff with AI is a bigger threat than rogue AIs’ argument: http://boingboing.net/2017/07/17/fake-obama-speech-is-the-begin.html

Yeah, it turns out that people whose identity is built around thinking of themselves as being really smart think that being really smart is of critical importance.

polishsalami@#1:

This would seem to add weight to the ‘humans doing shifty stuff with AI is a bigger threat than rogue AIs’ argument

Yes, that’s why I tried to draw a distinction between tactical use of AIs and strategic use of AIs. You could have an AI-powered war machine and tell it “kill that human” and it would do it fast and efficiently assuming it had been trained to be a fast, efficient killer. I have absolutely no problem believing humans will build those; they are working on them now, in fact.

But those AI-powered war machines are not capable of being better at killing (“faster” is not the only axis of “better”) than a human-powered war machine, because the AI learned its trade from humans. If the AI accidentally innovates a bit the humans may borrow from it. There may be co-evolution. What I don’t see as possible at all is some AI bootstrapping itself to being the greatest tactical and strategic expert of all time and concluding “kill all the humans.” Humans almost certainly will try to build that (technically, we already have, it’s called “US Strategic Air Command”) but its expertise will be perforce limited by that of its human trainers.

Dunc@#2:

Yeah, it turns out that people whose identity is built around thinking of themselves as being really smart think that being really smart is of critical importance.

Funny, that.

I used to occasionally troll libertarians about how pointlessly human-centric their world-view is. If everyone should just do their best, libertarians should positively worship bacteria. They’re thoughtless, all-powerful, durable, and they have a strategy that works fantastically and has done so for billions of years.

Bacteria, now, those are fucking scary. And they appear to have shrugged off a little blip of defeat for 100 years and are resuming their billions-of-years-long march of all-consuming conquest.

The Two Faces of Tomorrow suggests that one possible reason for an AI to try to eradicate us, might be that we, afraid that an AI might turn on us, try to provoke one to do so, to make sure we can always “pull the plug” … and it turns out we can’t.

Which easily gets around your main objection: “kill all humans” is in the result set because we have intentionally constructed it in such a way as to make that possible.

And it turns out that the real-life group most likely to do such a thing … is the one created by Musk and friends. Now there’s a paradox!

Part of the problem might be the set of possible outputs. A super-Eliza can’t kill most people with semi-sensible strings of text, but a super-Wargames system with a live nuke-it-all red button could fail to choose to not push the button before it can evolve enough sense not to. Training something to intentionally kill us all off is a different problem than training something with immense capabilities to not unintentionally kill us. (cf. global warming.)

The evil robot/android trope is a beloved one, and people won’t give it up. At bottom, the fear is creating something as mediocre and juvenile as ourselves. We aren’t good at much, but we do excel at killing for no good reason, so of course, there’s the assumption that anything we create will be the same.

We’re going to have much more to worry about than rogue robots in the coming years. That nonsense will be the playground of those with money to burn; it won’t have much relevance in every day life, especially when climate change starts wreaking havoc on a vast scale.

khms@#5:

The Two Faces of Tomorrow suggests that one possible reason for an AI to try to eradicate us, might be that we, afraid that an AI might turn on us, try to provoke one to do so, to make sure we can always “pull the plug” … and it turns out we can’t.

Interesting idea! It’d entail training the AI to become an offensive expert as well as a defensive expert, which would probably be a strategic error. (PS – sounds like a fun book. Queued!)

Which easily gets around your main objection: “kill all humans” is in the result set because we have intentionally constructed it in such a way as to make that possible.

It addresses one of my objections. The other is that once the AI reached the conclusion “kill all humans” it would still need to somehow acquire the expertise to do so. Humans have been evolving cleverer and cleverer ways to “kill all humans” for a long time and we’re pretty good at defeating most of our own best attacks. An AI would have to come up (from someplace) techniques of attack that would succeed, which we couldn’t defeat. I consider that amazingly unlikely, given some of the innovations in “kill all the humans” that our fellow humans have already primed us to respond to.

This was a difficult posting because I was trying to do three things: explain the background for why I believe what I do, explain what I believe, and keep it semi-interesting. So, I didn’t just trot out a main point and begin hammering on it; I’m not even sure if I have a main point at all, but, if I do, it’s that AIs trying to “kill all the humans” will immediately fall into co-evolution with us and we’ll both become successively better at killing all the humans.

And it turns out that the real-life group most likely to do such a thing … is the one created by Musk and friends. Now there’s a paradox!

Technically, Ray Kurzweil and his friends are arguing for “kill all the humans” as an inevitable side effect of becoming transhuman beings of pure light and caffeine or whatever it is they believe. Like you, I am quite comfortable that a splinter group of humans might “kill all the humans” – it’s been tried before, after all.

Caine@#7:

At bottom, the fear is creating something as mediocre and juvenile as ourselves. We aren’t good at much, but we do excel at killing for no good reason, so of course, there’s the assumption that anything we create will be the same.

Yes. Mary Shelley was right. And it’s no coincidence that she made Dr F’s creation of subhuman intelligence and superhuman strength. In fact, I probably could have shortened this entire posting to read “see Frankenstein”: it’s the fear of our own traits that drives us.

Hidden in all the verbiage is the part that scares me a bit while also keeping me hopeful: we keep getting locked into co-evolving strategies that are, basically, “kill all the humans.” MRSA doesn’t need to evolve a strategy of “kill all the humans”; it just eats whatever can’t kill it and human is made of food. When I think of how a war between humans and AIs might play out, it looks a lot like the kind of push/pull we see between humans and bacteria, not between tribes of humans.

I suppose that’s another question to throw into the mix: if an AI concluded “kill all the humans” wouldn’t it try “kill all the bacteria” first? They’re a bigger threat and all the humans would be collateral damage from that war.

That nonsense will be the playground of those with money to burn; it won’t have much relevance in every day life, especially when climate change starts wreaking havoc on a vast scale.

Yep. The oil executives ought to be worrying about humans coming up with a strategy of “kill all oligarchs” – never mind AI, we’ve got this.

davex@#6:

Part of the problem might be the set of possible outputs. A super-Eliza can’t kill most people with semi-sensible strings of text, but a super-Wargames system with a live nuke-it-all red button could fail to choose to not push the button before it can evolve enough sense not to. Training something to intentionally kill us all off is a different problem than training something with immense capabilities to not unintentionally kill us.

Yes, I didn’t even touch on that case. In the example of a system like PERIMETR [wired], it was humans that built the “kill all humans” system and put it under the control of a very limited expert system. We’d be pretty stupid to put control of a system like that under a creative AI unless its training set did not include any outputs like “launch for fun” or “LOL, launch.”

I would argue that PERIMETR was created with a superior strategic position and an expert rulebase that could actually allow “kill all humans” – building it was one of the great pinnacles of human stupidity.

Another system with a superior strategic position built into it is biological warfare. We could engineer a successful pyrrhic victory device and place it under the control of an AI or expert system. In that case a glitch could wipe us out. But I’d argue it would be a human-built superior strategic position and a human-built glitch.

Building a machine to eradicate ourselves and putting it under the control of a simple expert system would show that humans aren’t very intelligent at all.

A super-Eliza can’t kill most people with semi-sensible strings of text

I wonder if president Trump could pass a Turing test.

Well, this is embarrassing. You see Marcus, AlphaGo has already surpassed the best human Go players, using exactly the techniques you said wouldn’t work (a neural network AI, trained initially on human games, then improving by playing against itself). You really should have googled “go AI” before writing this article.

BManx2000@#12:

Last time I checked, AlphaGo was an expert system, not an exhaustive searcher. If that’s the case, then there may be great games that AlphaGo will never ‘think’ to play.

I didn’t say it wouldn’t work – I said its top-end performance ought to be limited unless it can co-evolve or be tweaked with new deep rules by experts.

You may be right, though, because go’s outcomes are very easy to specify: it’s extremely clear what a winning move versus a losing move looks like (you just count!) so that might make iterative self-play capable of resulting in incremental improvements.

Now I have to go refresh on AlphaGo. Thanks for the heads up. (I could never even beat Nemesis on “easy”)

Edit: OK that was quick. AlphaGo was trained to play against humans as well as a lot of practice against other machines. So that’s entirely as expected.

Let me put this another way: if it had been trained on 30 million moves by me it’d be a go playing idiot. What AlphaGo is doing is a statistical synopsis of the best of human go playing. Now, if it plays against itself it’s going to be able to eke out very small improvements but mostly by exploring the random decision-space in its trees.

Marcus:

Of course. One of the old Star Trek eps covered that one: “Sterilize! Sterilize!” and so on. As far as the damaged bot was concerned, humans were simply meatsacks of bacteria which needed to be cleansed. Almost every story in this line makes the same point: no matter how good our intent, we’ll fuck it up, and it will bite us in the ass with big shiny fangs.

Bacteria always win in the end anyway, and there’s a whole new wave comin’ for us. We would do much better to be seriously fuckin’ worried about that. Many of us have been living in relative luxury when it comes to bacteria driven disease; boy, are we in for a surprise.

Dunc@#2:

“Yeah, it turns out that people whose identity is built around thinking of themselves as being really smart think that being really smart is of critical importance.”

I would phrase it as “It turns out that people whose identity is built around thinking of themselves as being really smart think that they are smart enough to predict the future”.

I worked with economics/economists for 10 years. That’s a group of people who think that they can predict (model) the future in their area of interest. They do that very well, because if their prediction is wrong, they just “adjust” the forecast, and explain it using some recent “unforeseen circumstance”. It helps that they never, ever look back and compare their forecasts to reality (unless those forecasts just happened to be correct, for a while). They can’t seem to grasp that life (and economics, and everything in the future) is FULL of unforeseen circumstances, which always occur when you least expect them to occur! (Duh!) You’ll do just as well predicting a steady state.

Any one who makes a confident prediction about the future is in my opinion a BS artist, no matter how smart they are in other areas. They are just trying to be modern day prophets – and we all know how well prophesy works out…

… I don’t think we’re going to see … a hyper-intelligent AI comes up with the idea of “kill all the humans” and pulls it off.

Have you not read any of Fred Saberhagen’s “Berserker” stories? Or Fredric Brown’s “Answer“?

Pierce R. Butler@#16:

Have you not read any of Fred Saberhagen’s “Berserker” stories?

I have; also Laumer’s “Bolo” series. I don’t see any reason why AIs that are programmed to kill anything they encounter won’t be pretty good at it, especially if the experts that trained them were pretty good at it and they have a long time to evolve to get even better at it. It’s worked for us, after all.

The first episode of the short-lived 1980s TV series “Probe” had an example of a rogue AI which killed humans. The reveal is at https://youtu.be/DMbyMH2YzjQ?t=660 . In short, the program identifies waste in the system, defined I believe as eliminating unjustified expenses. “The system” in this case controls the power, traffic, natural gas, etc. of the city. It determines that someone who has retired and is receiving a pension is an unjustified expense. It has control over the power and gas subsystems, and is able to overload them and kill the retiree.

For an accounting AI it’s quite smart. It has voice recognition and can use the traffic signals to spell out “Just doing my job” in Morse. But science fantasy aside, consider a system like https://www.ncbi.nlm.nih.gov/pubmed/14724639?dopt=Abstract “The system automatically originates hypotheses to explain observations, devises experiments to test these hypotheses, physically runs the experiments using a laboratory robot, interprets the results to falsify hypotheses inconsistent with the data, and then repeats the cycle.” Only, have it control real-world systems. Start it with a baseline model of the world, and allow it to tweak that model in response to experiments.

Cost–benefit analysis looks a lot like deliberate killings if the expected liabilities for killing a human are low enough.

Marcus Ranum @ # 17 – My link goes to the text of the Fredric Brown story – all 255 words of it.

Whoa there. The MOVIE versions are like that, but in the BOOK, the ‘monster’ was smart enough to learn language by listening to random people, survive in the woods with no training on what was or was not food, and befriend an old blind man with conversation. He taught himself to read based on a few found books – and did all of this WITHIN A YEAR of being brought to life. His campaign of revenge against Victor was strategically planned, a conscious, careful decision to take away everything Victor valued.

The creature may or may not have superhuman strength (he did not seem to perform any feats greater than a particularly strong human could), and he definitely had remarkable stamina, but I strongly disagree with the idea that Shelley’s creature had ‘subhuman intelligence’. He was actually blisteringly smart and articulate, making the tragedy all the greater.

(cf. Chapter 15 of ‘Frankenstein’)

I’m sure someone once said that it wasn’t artificial intelligence we should be worried about – it was natural stupidity.

I don’t know much about robots. I do know a fair bit about Alexander the Great though. Everyone knows that Alexander was tutored by Aristotle, in a picturesque philosopher’s cave in Mieza when he was in his early teens. What is generally less well known is that when he went on his epic Persian campaign he was accompanied by another, rather more relevant, expert – his father’s most trusted general Parmenio. Which was both a benefit and a problem for Alexander – Parmenio was a veteran campaigner and military logistician (he was in his mid sixties in 334BC when the Persian campaign began), but he was also a potential rival and focus for dissatisfaction among the men. Particularly the older Macedonians, who had fought under Parmenio and Philip for years. Eventually, when Parmenio’s son Philotas was discovered to have conspired against Alexander, Parmenio was quietly assassinated. Alexander then made plans to replace most of his veterans with newly trained soldiers from his Persian empire.

But before Alexander was able to do that, he seems to have butted heads with Parmenio several times over issues of military strategy. Arrian’s account of the preparations for the Battle of the Granicus River gives us a classic encounter. The Macedonians had reached the Granicus, where the Persian army was waiting for them, after a long march and with few hours of daylight left. Parmenio suggested they make camp, then sneak along the banks, cross quietly, and attack at dawn – the orthodox strategy. But Alexander ignored him and led a glorious charge across the river to take the Persians unawares. It was a triumph for the hot-headed, glory-seeking and unorthodox Alexander over the traditional wisdom of boring, plodding old Parmenio. Plutarch rather liked the story, because it exemplified that streak of pothos – a fiery, irrational and dynamic infatuation – which Plutarch had decided was at the heart of who Alexander was.

The lesson we might take from this, if we believe the story is true, is that a certain degree of unpredictability is desirable in a strategy. The experts can be wrong because the enemy’s experts will be expecting exactly what they suggest. Training our Alexandrobot in the conventional wisdom would be of value only if it were prone to ignoring that wisdom and charging off to do its own thing when it felt like it. It would need pothos protocols to complement its understanding of the rules of war.

Of course, Arrian was mostly using the accounts of the expedition’s official historian Callisthenes for his information (supplemented by the account of Ptolemy I, who was just as keen to lionise Alexander as his claim to Pharaonic legitimacy rested on Alexander’s shoulders). Another ancient historian – Diodorus Siculus, working from less official sources – contradicts Arrian’s version, and tells us that Alexander took Parmenio’s advice and attacked at dawn. Robin Lane Fox suggests that what Callisthenes may have done is written up Alexander’s actual, and entirely orthodox, strategy as a fictitious conversation with Parmenio (to make the latter seem dull and lacking in spark), then invented a much more romantic story to create a contrast. Needless to say the majority of later ancient historians tended to accept the doctored version as the truth. It didn’t do Callisthenes a lot of good though – Alexander had him executed two years after he bumped off Parmenio.

So I propose that, rather than the Turing Test, we adopt a much more useful Parmenio Test. When an AI succeeds at its task through entirely conventional means, then tries to convince us it did a far better job and the traditional thinking is wrong, we can consider it on a par with human thought and will have cause to worry.

I’m going with Dorkwood for the banister and then Ronching Blue in the hallway. I’m saving Snowbonk for the walk-in closet.

Aaah, lovely tone that Snowbonk has …

Well, you don’t just have to wonder about it. There are for sure some good openings with “early” queen moves. I don’t know how soon is “too” soon, but normally, you’d want to prioritize developing most of your minor pieces, establishing some control of the center with your pawns probably, perhaps castling (kingside, obviously) would come first too….

Then your queen might have something interesting to do. You’re still not charging into the opposing army with your one super strong piece. You come to learn that she’s not that strong, and on top of that, doing so could easily get you into trouble (because you’re wasting a lot of time, which isn’t something you can waste in chess, when you should be building up more effective defenses/attacks). Instead, you can combine your forces into a meaningful attack that will actually put your (strong) opponent into a difficult/complicated/hard-to-solve situation, not something that just looks vaguely threatening or badass to a kid who likes shuffling a queen around the board. Not that you’re still a kid – but that can take a kid a while to learn.

Anyway, there are some nice games where, for example, Queen A5 check appears fairly early on, for tactical reasons basically, and it’s not a big deal that you haven’t developed all of your other pieces or did all of those other nice things. That sort of move does help, in certain circumstances, and the real lesson to learn is that your whole strategy shouldn’t revolve around making a bunch of queen moves (or knight moves or whatever, even though it might seem exciting) at the expense of everything else.

Just to give a sense of the problem: currently, chess is essentially solved when there are 7-8 pieces or less remaining on the board. There are endgame “tablebases” that will spit out perfect moves, found by going backward from a final position where you have a win (or a draw, if that’s the best that can be done). I’m sure people will keep working on that kind of approach for a long time, but I’d be surprised if the number of pieces grows by more than one or two for the rest of my life, for the next century, or something like that. I’m pretty sure it’ll never be solved for 32 pieces. That doesn’t take a lot of cleverness, either, just a big stupidly fast computer cracking away at it for stupidly long periods of time.
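
To make the “going backward from a final position” idea concrete, here is a minimal sketch in Python. It is not a chess tablebase: it assumes a toy game (a pile of stones, each player removes 1, 2 or 3, and whoever faces an empty pile has lost), and the name solve_backward is made up for illustration. The backward-labelling idea is the same in spirit; real tablebases are enormously larger and also have to handle draws.

```python
from collections import deque

def solve_backward(max_stones, takes=(1, 2, 3)):
    """Label each pile size WIN or LOSS for the player to move.

    Toy stand-in for a tablebase: start from the finished position and
    work backward to every position that can reach it.
    """
    # Final position: no stones left, so the player to move has lost.
    result = {0: "LOSS"}
    # For each position, how many legal moves have not yet been shown
    # to hand the opponent a winning position.
    unresolved = {n: sum(1 for t in takes if t <= n)
                  for n in range(max_stones + 1)}
    queue = deque([0])
    while queue:
        pos = queue.popleft()
        for t in takes:
            pred = pos + t  # a position from which `pos` can be reached
            if pred > max_stones or pred in result:
                continue
            if result[pos] == "LOSS":
                # One move that leaves the opponent lost is enough to win.
                result[pred] = "WIN"
                queue.append(pred)
            else:
                unresolved[pred] -= 1
                if unresolved[pred] == 0:
                    # Every available move gives the opponent a win.
                    result[pred] = "LOSS"
                    queue.append(pred)
    return result

table = solve_backward(12)
losing = [n for n, label in sorted(table.items()) if label == "LOSS"]
print(losing)
```

Once the table exists, “perfect play” is just a lookup: from a WIN position, pick any move that lands on a LOSS position. The catch, as noted above, is that the table has to cover every position, which is why this only works for chess with a handful of pieces left.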

If you start from the opening, when there are that many pieces, you’re not going to prune out many truly “lost” games at all. Decent players just don’t get mated that easily most of the time, unless something goes terribly wrong. Chess engines will tell you things look better for white or black (or they’ll say it’s equal, if they don’t find anything interesting), but it’s not like they need to find a checkmate somewhere down the line in order to make that determination. That’s just not how they do it, because those numbers are way too big to crunch. Meanwhile, even some truly horrible moves/positions are salvageable by decent chess computers now, because they are wonderful at defending positions that humans would consider hopeless (and would probably resign to stop the suffering, as opposed to actually reaching a position with a checkmate on the board).

So, it’s important to understand that they’re not finding zillions of “lost” positions with checkmates at the end of zillions of long sequences of moves, but are trying to prune out “losing” positions. And even if you give them a position which is “losing” in this sense, they will put up a hell of a fight. Unless it’s really bad (not just a somewhat suboptimal line), they’ll probably still kick your ass, even if you’re Carlsen or Kasparov or anybody else.

I’m not sure how much input any of these engines get from human experts. The rules of chess (including what’s a win/loss/draw) are clearly defined enough, and now that that’s in place, I’m not sure if we can help them very much beyond that. The situation with Go is different, because (like #12 said) AlphaGo does use high-level human games as a model of good/bad play, to help it sort through all of its myriad options for every move. (But that’s not the only thing it does, of course.) There are online databases of chess games now, but I don’t think the best engines actually put those to use when evaluating a position. (Why bother? What we do is too stupid for them.) They’re just for people studying other people’s games, just for fun, so you can learn new moves from lots of players everywhere, so you find your opponents’ weakness, and so forth.

Marcus:

Well, okay…. It will be limited just like people are limited, or like any thing or set of things in the universe is limited. Here’s a puzzle for you. AlphaGo can learn really fast, recall everything it learned accurately, doesn’t get tired, makes its moves extremely quickly, and maybe the list of nice things goes on a bit from there. Every professional Go player would dream of having just one of those qualities. And all of them? Well, that’s asking for an awful lot, and they wouldn’t need all of that just to win against all of their human opponents. That’s just overkill. People (you and your opponents) just don’t do that much calculating that fast and that accurately, no matter what they learn over the course of decades or what their expert helpers (teachers, coaches, etc.) have helped them to do. Learning and experiencing and so forth does lots of stuff for you, but not that.

So, if we’re “co-evolving” with entities like this, how should we expect that to work out for us?

(I’m not worried about AI “kill all humans” doomsday scenarios either, for what it’s worth, but the game engines we’re talking about are not that kind of “AI” anyway. I’d probably give somewhat different reasons for why I’m not worried, but no matter. In any case, maybe we shouldn’t get these two things mixed up.)

Back to the question… People can learn new things, develop some new proverbial weapons, strategies, etc., in an “arms race” against chess and go programs and their ilk. Those programs will “learn” more and otherwise get better at it too. And they will (apparently, if past experience is any guide) do it much faster than us. To me, it looks like the gap between us and the chess-bots will just grow larger over time. That sounds like it’s not working out well for us; but let’s remember that it’s actually no big deal that we’re not the best at chess. That’s about as non-threatening as it gets. You may console yourself with the fact that we’re improving at the same time as the computers are, because both of those charts are headed up. And that may be true, if we find some ways to improve along with them. But there’s still a question of which one is going up more.

I know more about chess, so I’ll stick with that. The best programs out there can beat all of our best players, any day of the week. Those players now very regularly use those programs to study chess. They want to find out how to beat their human opponents (because they’re not usually very interested in playing the computers), and they do so by consulting the magical, fantastical, all-wise oracle that is the chess engine. Analysts and announcers for those games? They also ask a chess engine what they should say about the games, because if they didn’t, some asshole teenager on the internet will do that and proceed to explain carefully to them why their analysis actually sucks and they should quit their jobs. They all spend money on nice shiny computers for this. The players want it to quickly run stupidly large numbers of games for them, and their trainers/etc. will find good openings for the pro to memorize for their next tournament/match/whatever.

(It’s not just about rote memorization, or “preparation” as it’s known, because the player needs to understand the moves and adapt to their opponent if/when the game strays out of preparation. And these are good players who already understand chess anyway.)

That is what is actually already going on in the chess world, as a completely boring matter of routine that basically every top player does, just to keep up with all of the other top chess players. If there’s an arms race going on that’s of any interest to anybody, it’s between those players, with the chess programs as the weapons, not as one of the contenders on the battlefield. I think they know what they’re doing, and they all seem to recognize that they have no hope of ever exceeding what the chess programs are already doing now. Because they don’t.

At this point, it’s maybe good to note that you’re not going to keep the whole chess world going, by having audiences watch a computer kick everyone’s ass a billion different ways without breaking a sweat. It’s better than us, but it doesn’t follow that people therefore would be more interested in watching it. I like watching people play each other, make mistakes from time to time, perhaps find a way out of it if they’re clever/lucky, make very insightful moves much more often than stupid blunders — all of that’s exciting to watch, partly because I’m not very sure what the outcome will be in advance. So, chess programs have found their way into our ecosystem, but maybe not filling in exactly the niche that you would’ve expected. Still, by all accounts, they are superior to every human player, with probably no chance of any human player ever again catching up with them. And if you want to say that this just can’t be — it seems like that’s what you were saying — then maybe there’s something wrong with your theory. (I’ve got no idea what may be wrong, but the evidence is what it is.)

Hi Marcus! I thought this was an interesting read, and that you make some compelling arguments for how “kill all humans” won’t easily happen. You do put a lot of stock in experts, though, but you did not explore what experts really are. That begs the question: What are experts, if not only meat-based AIs who have learned from their own mistakes or those of other meat-based AIs before it? And doesn’t that mean that silicon-based AI can develop experts of its own?

Following up on my previous comment and in the same line of thought, how is human intelligence different from machine intelligence? Are we simply very sophisticated machines, or are we something else?

I find that discussing AI is so interesting because it’s really discussing ourselves and what we are.

jgorset@#26:

You do put a lot of stock in experts, though, but you did not explore what experts really are. That begs the question: What are experts, if not only meat-based AIs who have learned from their own mistakes or those of other meat-based AIs before it? And doesn’t that mean that silicon-based AI can develop experts of its own?

I …. Ow. I know you know that’s a deceptively simple question. But since I left myself open to it, I’ve got to try. I’m deep in quicksand, though, because defining things is hard for me, since I’ve got this problem with [linguistic nihilism] whenever I try to nail something down without making a circular argument. I am trying not to get maneuvered into a situation where I have to establish epistemology; that’s a “land war in asia” scenario.

I use the term “knowledge base” to describe an accumulation of rules. Knowledge bases can be manipulated and combined as long as they are non-contradictory or you have a meta-knowledge base that lets you decide which rule is better. So, for example, if I have a knowledge base of winning chess games that I know are good games, and one I know are badly played games, I can train an AI (whether meat-based or silicon-based) to execute similar games and I have a good chess player. This implies that “knowledge” can somehow be encoded into an independent form for transmission even if it’s as simple as “these are good games and those are bad.” So: knowledge is/can be built on other knowledge as long as we keep those damn pyrrhonian skeptics out of the discussion. I hear them scratching at the door, attracted by the smell of raw meat…
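
A rough sketch of what I mean by combining knowledge bases, in Python. Everything here is an assumption for illustration: a knowledge base is reduced to a dictionary mapping a situation to a piece of advice, and the “meta-knowledge base” is just a function (called prefer here, a made-up name) that picks between two contradictory rules. Real rule engines are a good deal more involved.

```python
def combine(kb_a, kb_b, prefer=None):
    """Merge two rule sets, each a dict of situation -> advice.

    If both sets give different advice for the same situation, the
    optional `prefer` callable (the stand-in meta-knowledge base)
    decides which rule wins; without it the merge is refused.
    """
    merged = dict(kb_a)
    for situation, advice in kb_b.items():
        if situation not in merged or merged[situation] == advice:
            # New rule, or both sources already agree.
            merged[situation] = advice
        elif prefer is None:
            # Contradiction and nothing to arbitrate it.
            raise ValueError(f"contradictory rules for {situation!r}")
        else:
            merged[situation] = prefer(situation, merged[situation], advice)
    return merged

# Hypothetical rules of thumb, purely for illustration.
coach = {"opening": "develop minor pieces first", "queen": "keep her home early"}
book = {"queen": "bring her out on move 2", "endgame": "activate the king"}

# A trivial meta-rule: when in doubt, trust the first source.
trust_first = lambda situation, first, second: first

print(combine(coach, book, prefer=trust_first))
```

Without a prefer function the same call raises an error, which is the “internal decision-making problem” in miniature: two rule sets that disagree cannot be merged unless something ranks them.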

An “expert” is someone who has or applies higher levels of knowledge. For the sake of hand-waving, we are going to treat the expert as though they are right, at least to the extent that we accept their knowledge is somehow better than ours. An “expert system” is one that encodes an expert’s knowledge-base and uses it as its final decision-making fallback. So an expert is an “authority” as well.

There is another knowledge-base that’s special, and I think it merits a term of its own but I don’t have one that I use – that’s the knowledge of what “winning” is; the rules of the game. I see this as a different form of knowledge but that’s incredibly important to the AI because it’s how the AI ultimately scores itself as succeeding or failing. I believe meat-based AIs do this as well, using proxies like how many billions of dollars, youtube “likes” or facebook “friends” a person or their ideas has. In evolution, because of how it works, this “winning” condition equates to “survived long enough to reproduce” though we always have to remember that evolution is goal-less. Let me call these “victory conditions” per the 1980s wargames I trained on. (I will try to come back to this point)

And you’re completely right: where does an expert come from? It’s got to be the same process for a meat-based AI or a silicon-based one – it trains against a knowledge-base established by an expert or authority and then it may hypothesize from there, and if its hypotheticals work (i.e.: are consistent with the ‘rules’ of the game and lead to fulfilling victory conditions) and it is able to encode that hypothetical into its knowledge base, I would say it has “learned” and has “advanced its expertise” – if you then lash that AI up as a question-answerer for others, it is more of an expert than its teachers. That seems to be how humans increase our knowledge and expertise: throw stuff at the wall and if it works and is consistent with the rules of the game and victory conditions, then we have ‘learned something.’

That’s why I made the point of mentioning that John Von Neumann was not self-taught; he burned through a pretty impressive string of teacher/experts/tutors on his way to becoming a great expert himself; how did that happen? He appears to have had a ruleset (“math”) that allowed him to hypothesize new rules as long as they were consistent with all the old rules, and he cranked out new rules like nobody’s business.

And doesn’t that mean that silicon-based AI can develop experts of its own?

I expect they will, but they’ll have to follow the same process of trial and error and feedback that humans do; we shouldn’t assume that because they’re working faster that they will automatically do it better.

That’s what I meant about AlphaGo being able to train itself against itself (human chess players do that too!) because its victory conditions are pretty easy to check your games against. That’s why I think it’s plausible that an AI can learn to be the best expert and even teach other AIs after it has become so. That’s also why I am not generally worried about the AI Napoleon, because the reality of warfare is more complex and winning conditions are vague; an AI Napoleon might be able to train itself to beat a human Napoleon at a game of Wellington’s Victory – or even beat a human Wellington – but the problem of acquiring new expertise is so expensive that we won’t see an AI Napoleon that’s able to evolve new battlefield strategies in the infinitely complex world of real warfare. I can imagine an AI would be quite a lot better than a human at managing the complexity, but that doesn’t necessarily translate to being able to innovate new knowledge and expertise.

A shorter answer would be that for the really interesting tasks, like “kill all the humans”, testing would have to take place in reality, because it’d be too complex to simulate – and AIs cannot afford to iteratively try “kill all the humans” until they develop their own AI Napoleon who doesn’t make the mistakes that the human one did.

(By the way, I teased Cziko’s book Without Miracles which includes a very interesting discussion about whether evolutionary epistemology can answer the problem of induction. Basically, Plato’s theory that we are recalling knowledge that we already had is an answer to the question “where do new ideas come from? and how do we know they are right?” which, unless your rules are simple enough that you can attempt a proof by induction, has to be “we keep trying it and it keeps working” which is basically “close enough for a bacterium.”)

jgorset@#27:

how is human intelligence different from machine intelligence? Are we simply very sophisticated machines, or are we something else?

I find that discussing AI is so interesting because it’s really discussing ourselves and what we are.

I’m pretty firmly in the camp that the substrate/system architecture an intelligence runs on is probably going to affect how it runs, but they’re all intelligences to some degree or another. I don’t see a distinction between the “intelligence” part of “human intelligence” or “machine intelligence” because when we call them “intelligence” we’ve already accepted they’re the same kind of thing. And, yes, I think humans are more like expert systems, in terms of how we behave and gain and transfer expertise and learning, than anything else. (I prefer to use terminology of expert systems because that always introduces the question of the origin of knowledge-bases, which then entrees the nature/nurture question) So, perhaps, if I may – I’d say that the meat/silicon divide in intelligences is the “nature” and the knowledge bases they run and their accumulated experience* is the “nurture.”

Not a criticism, but I find it interesting that you wrote “simply sophisticated machines” – that’s kind of an oxymoron. Which, in my mind, is a good description of the problem! It appears to me that we are incredibly complicated machines that are incomprehensibly complex and therefore mysterious.

Please don’t ask me to defend this, but: my personal opinion is that “consciousness” is a sort of while-loop that evolved into a parallel state-machine that is so complicated and incomprehensible that it thinks it thinks. Since that’s a definition of “thinking” I accept it. I also think that its decision-making process is so complicated (it builds in a ton of situational factors) that it’s incomprehensible to the consciousness part, which has programmed itself to think it has free will, as a way of getting out of having to think about how it thinks about everything.

(* accumulated experience + knowledge base is kind of redundant but I had to throw that in there. In my mind, accumulated experience is a knowledge base just like all the other knowledge bases, which is why I was careful in my preceding comment to stipulate that knowledge bases must be able to combine without contradicting themselves or you’ve got an internal decision-making problem)

it’s really discussing ourselves and what we are.

That’s worth repeating.

Whenever I think about AIs I often fact-check my explanations against my experiences with my dogs and some horses I’ve known. Less complicated AIs than us are worth asking about how they think, as well. I’ve always found it odd that we humans are so worried about the danger of building superhuman AIs when we really should be worried about how to make an AI that’s as good at being a dog, as a dog. Or as good at being a horse, as a horse.

Raucous Indignation@#22:

I’m going with Dorkwood for the banister and then Ronching Blue in the hallway. I’m saving Snowbonk for the walk-in closet.

My dining room’s done in Stummy Beige and Bunflow.

consciousness razor@#24:

Well, you don’t just have to wonder about it. There are for sure some good openings with “early” queen moves. I don’t know how soon is “too” soon, but normally, you’d want to prioritize developing most of your minor pieces, establishing some control of the center with your pawns probably, perhaps castling (kingside, obviously) would come first too….

That’s the problem I was trying to get at, with rules of thumb. It may be a good rule of thumb or it may not. But that’s what the expert that programmed my chess-playing rules told me, so that’s still how I play. It’s clearly an open question as to whether or not that rule was a good one. Let’s imagine for the sake of argument that it was a terribly bad rule – what if one of Bobby Fischer’s early programmers had taught him that one? Well, he’d have eventually trained out of it I’m sure but it would have been – as they say – “a learning experience.”

Just to give a sense of the problem: currently, chess is essentially solved when there are 7-8 pieces or less remaining on the board. There are endgame “tablebases” that will spit out perfect moves, found by going backward from a final position where you have a win (or a draw, if that’s the best that can be done). I’m sure people will keep working on that kind of approach for a long time, but I’d be surprised if the number of pieces grows by more than one or two for the rest of my life, for the next century, or something like that. I’m pretty sure it’ll never be solved for 32 pieces. That doesn’t take a lot of cleverness, either, just a big stupidly fast computer cracking away at it for stupidly long periods of time.

I did not know that was a thing. How clever, to go at it from the opposite direction! So now we have tables of end-games and tables of openings. Now, we just need a table of middles!! Well, actually, that’s what I see the neural network as being: a great big mass of statistics about what moves in the middle game have historically worked better than others.

I’m not sure if heuristics (rules of thumb) are on a continuum with dictionaries of what works, or not. I.e.: is “don’t develop your queen too early” an implicit reference to “within the knowledge-base of games I have, developing your queen before move 5 is more likely (70%!) to result in a loss than not.” That’s an area I keep daydreaming about, whether or not it would be possible to write an expert system that could extract rules of thumb from training sets. It seems to me that, in principle, that would be possible. (per the example above)
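
Here is roughly what that daydream might look like as a minimal Python sketch. The data format is an assumption: each game is boiled down to a pair (move number of the first queen move, or None if she never moved; whether the game was lost). The function names, the cutoff of move 5 and the margin are all made up for illustration, and the sample games are invented, not real statistics.

```python
def early_queen_loss_rates(games, cutoff=5):
    """Return (loss rate with an early queen move, loss rate without).

    `games` is a sequence of (first_queen_move, lost) pairs, where
    first_queen_move is a move number or None and lost is a bool.
    """
    games = list(games)
    early = [lost for move, lost in games
             if move is not None and move < cutoff]
    late = [lost for move, lost in games
            if move is None or move >= cutoff]

    def rate(group):
        # Fraction of lost games, or None if there is nothing to count.
        return sum(group) / len(group) if group else None

    return rate(early), rate(late)

def suggest_rule(games, cutoff=5, margin=0.1):
    """Emit a rule of thumb only if early queen moves lose clearly more often."""
    early, late = early_queen_loss_rates(games, cutoff)
    if early is None or late is None:
        return None
    if early - late > margin:
        return f"don't develop your queen before move {cutoff}"
    return None

# Invented sample: four early-queen games, four others.
sample = [(3, True), (4, True), (2, True), (4, False),
          (10, False), (12, False), (None, False), (8, True)]

print(early_queen_loss_rates(sample))
print(suggest_rule(sample))
```

This only tests one candidate rule that a human already thought of. The harder part of the daydream, having the system propose the candidate rules itself, is not covered here.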

Meanwhile, even some truly horrible moves/positions are salvageable by decent chess computers now, because they are wonderful at defending positions that humans would consider hopeless (and would probably resign to stop the suffering, as opposed to actually reaching a position with a checkmate on the board).

It is interesting to think that a chess AI never “makes a mistake” – it can only make a badly-informed choice. Or, wait, that may be wrong: if it’s randomizing the decisions at the branches of its decision-trees, I suppose it can make a bad guess. Is that a “mistake”? I dunno.

So, it’s important to understand that they’re not finding zillions of “lost” positions with checkmates at the end of zillions of long sequences of moves, but are trying to prune out “losing” positions. And even if you give them a position which is “losing” in this sense, they will put up a hell of a fight.

Right. If I know all the ways to lose, and avoid them, I still have a chance you’ll make a mistake. But I don’t need to know all the ways to lose in detail – I just need successively refined layers of detail that are relevant to the current situation on the board. Of course, since the AI doesn’t ever forget, either, that’s not a problem (it’s a lookup table/database issue)

I’m not sure how much input any of these engines get from human experts. The rules of chess (including what’s a win/loss/draw) are clearly defined enough, and now that that’s in place, I’m not sure if we can help them very much beyond that.

I would say that the gigantic collection of games that are input are from human experts. Or maybe non-experts, but they represent an epistemology of how chess is played.

Back when Deep Blue was first out, I watched its games pretty closely, and one thing I remember was that there was a small army of people who were tweaking its rules and settings pretty constantly. At the time I thought it amounted to a committee of experts, really. None of the people who were tweaking the rules and settings were bad chess players.

To me, I suppose the question is whether you’d wind up with a really bad chess playing AI if you trained it only on my games. My guess is: yes. Perhaps after many games against better opponents it might learn to be a better chess player than its starting state. Unless it comes prepackaged with a better collection of starting rules than I do, in which case were those rules given to it by experts? (then it’s an expert system)

(Why bother? What we do is too stupid for them.)

Building a better model of “that’s usually a dumb move. LOLhumans.”

But what I’m saying is that’s not how they work. I’ve heard it said that some chess engines tend to be a little “materialistic,” for instance. So they put a little more emphasis on that than what some (human) chess experts would. Seems to work very well despite that (if they know what they’re talking about), but at any rate, to the extent they’re evaluating the game that way, what does that mean? They’re counting the value of the pieces, the “material” on the board, using the traditional values that human experts have come up with over the years. It goes like this (with some variations, and there’s a more detailed wiki article on it actually):

Pawn = 1, Bishop/Knight = 3, Rook = 5, Queen = 9

So, if you’ve got a position on the board, you can count how many pawns, bishops, etc., both sides have, multiply them by those numbers, and that gives an estimate of the material value for each side. A side which has lost a pawn/piece (or made an unequal exchange) is in that sense generally weaker than the other side. Still, there are often good strategic/tactical reasons to sacrifice material to gain initiative, mobility, etc., or perhaps because it’s a necessary step in a mating attack. The piece values don’t count for anything in the game — winning and losing has nothing strictly to do with that. It’s a helpful way to guide players in typical cases, but you can sacrifice all of it in a very grisly battle, if doing so leads to you winning by checkmate/resignation.
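The counting described above is simple enough to sketch. This assumes the position is given as the piece-placement field of a FEN string (uppercase letters for White, lowercase for Black, digits for runs of empty squares). The function name and the choice to score the king as 0 are assumptions for illustration, since the traditional values don’t assign the king a number.

```python
# Traditional piece values from the text; the king is given 0 here (an assumption).
PIECE_VALUES = {"p": 1, "n": 3, "b": 3, "r": 5, "q": 9, "k": 0}


def material(fen_placement):
    """Return (white_total, black_total) for a FEN piece-placement string."""
    white = black = 0
    for ch in fen_placement:
        value = PIECE_VALUES.get(ch.lower())
        if value is None:
            continue  # digits (empty squares) and '/' (rank separators)
        if ch.isupper():
            white += value
        else:
            black += value
    return white, black


start = "rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR"
print(material(start))  # (39, 39): material is level at the start

no_black_queen = "rnb1kbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR"
print(material(no_black_queen))  # (39, 30): Black is down a queen
```

Note that nothing in it looks at whose move it is, how the position arose, or who eventually won. It is a number computed from one snapshot of the board.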

Here’s the point… You don’t need to know a thing about what happened before or after, whether one side or another eventually wins or draws. It’s not that the computer is being told why this is a game to watch and what it’s supposed to learn about it. (It’s not “this is a win, look how they did it” or “here’s where we think things went downhill, now make sure you record that data, computer.”) You always just have that one position, the configuration of pieces and what those are approximately and typically worth, and you evaluate it using that kind of a metric.

And there are plenty of other metrics besides that; this is just an important example. I’m sure none of these things look like “advice” along the lines of “don’t move your queen too early.” That’s too vague, and it’s often bad advice anyway. You need something concrete the program can use effectively, at every moment in every game, without the kinds of ambiguities involved in English statements like that. Even if you looked at high-level English pseudo-code for what’s really going on under the hood, it’s probably mostly going to consist of mathematical statements, because that’s clear and precise and you won’t run into issues like that. (And chess is a very mathematical game, so that works out very well.)

That’s the thing, though. I don’t think they’re doing it by inputting a gigantic collection of games. I mean, just think about it. There are new games all of the time, which have never been seen before. These engines will tell you all about the mistakes that, let’s say, world-champ Carlsen is making, right this moment in his current game. He may win the game (or not), and it will tell you (in real time) how he could win even more efficiently (20 moves earlier, let’s say, without having to sacrifice a pawn along the way). Unless a time-machine is involved, there aren’t any collections of games anywhere that will be appropriate for analyzing a new position, very accurately, move-by-move, which isn’t just about one line but lots of possible branching lines, none of which may be familiar to anybody. Those get sorted into better/worse by the computer (how does that sorting work?), and if it were playing the game itself, it would presumably pick its top choice every time (or there could be some stochastic processes to break a tie in the ratings). Whatever is going on, it doesn’t feel at all like a chess version of Watson; it really seems to understand how to play and win the game, how to evaluate all sorts of new things it hasn’t seen before, and so forth.
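The “pick the top choice, break ties at random” step might look something like the sketch below. It assumes the candidate moves have already been given numeric scores by some evaluation. The scores and the function name choose_move are made up for illustration, and how a real engine arrives at its scores is not shown.

```python
import random


def choose_move(scored_moves, rng=random):
    """Pick the highest-scored move; choose randomly among exact ties.

    scored_moves maps a move (any label) to a score from the mover's point of view.
    """
    best = max(scored_moves.values())
    top = [move for move, score in scored_moves.items() if score == best]
    return rng.choice(top)


# Hypothetical scores, for illustration only.
print(choose_move({"Nf3": 0.3, "e4": 0.5, "h4": -0.2}))  # e4
```

No library of past games is consulted at this step: the choice depends only on the scores attached to the moves available right now.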

Let me add some observations, which maybe give you a better sense of what we’re talking about….

It’s pretty common nowadays to hear analysts/commentators discussing games (during the game or after), and something like this might come up: “wow, that’s a real computer move,” usually as a compliment to the player for finding something really stunning or calculating very accurately. Or if a player makes a decent choice, but a suboptimal one, which we only know because the computer finds a better one, they’ll say things like “only a computer would see a move like that.” They’ll say it’s bizarre and alien and insane and just plain non-human to play that way. So, they think it’s at least understandable that the player made the decent choice instead of the mad-super-genius type of choice.

And it’s true: real people don’t generally “see” moves like that when playing a game. They never have seen moves like that. I guess we’re not really built for it. There aren’t moves like that in chess history, found in a human game somewhere, much less large collections of human games. The computer actually found something new and didn’t look it up in a table somewhere. That’s the moral of the story.

Younger players, who’ve grown up learning a lot from these engines, do have a very different style than people from older generations, so you can start to compare them to some extent, but it’s still very clear which was the cause and which was the effect. Computers just have a very different approach to the game than people. At the very least they seem to, because of how deeply and broadly all of the various lines are calculated (even ones that may seem unpromising — or like pure lunacy — to a good player). They don’t get scared of very complicated and hairy positions like we do, so they go for it, and it can feel like an all-out, rip-you-to-shreds, tactical brawl every single move. They’ve got all sorts of tricks up their sleeves, and they’ve got tricks inside of those tricks, etc. And that doesn’t mean they’re bluffing — you have to be very very very picky about how you respond, or else you’re toast. Up against that kind of opponent, everyone eventually collapses.

But all of this is just to say the moves themselves are often qualitatively different from human moves that you would’ve found in past decades. Whatever people (“experts”) have thrown into the black box to make it go, something very different from what we all knew before has emerged from it. That point might be hard to appreciate if you’re not very familiar with chess.

Thing is, by design, AIs should be able to explore many more plies when employing minimax strategy than humans.
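For anyone who hasn’t met minimax, here is a bare-bones sketch over a hand-made game tree. The tree and its leaf scores are invented for illustration: each level of nesting is one ply, and each leaf is a static evaluation from the first player’s point of view. Real engines add pruning and a great deal more, none of which is shown here.

```python
def minimax(node, maximizing=True):
    """Value of a game tree given as nested lists with numeric leaf scores."""
    if not isinstance(node, list):
        return node  # leaf: a static evaluation of the position
    # One ply deeper, and it is the other side's turn to choose.
    scores = [minimax(child, not maximizing) for child in node]
    return max(scores) if maximizing else min(scores)


two_ply = [[3, 5], [2, 9], [0, 7]]
print(minimax(two_ply))  # 3: the opponent answers each of our moves with its minimum

three_ply = [[[3, 1], [5, 8]], [[2, 4], [9, 6]]]
print(minimax(three_ply))  # 4
```

The number of leaves grows multiplicatively with every extra ply, which is where a machine’s speed gives it the edge over a human looking ahead.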

—

I think Iain M. Banks put it best (ref: Culture Minds):

“Oh, they never lie. They dissemble, evade, prevaricate, confound, confuse, distract, obscure, subtly misrepresent and willfully misunderstand with what often appears to be a positively gleeful relish and are generally perfectly capable of contriving to give one an utterly unambiguous impression of their future course of action while in fact intending to do exactly the opposite, but they never lie. Perish the thought.”