I don’t pay much attention to site stats, and I actively avoid digging into them. I’m not interested in optimizing for traffic, or that SEO nonsense, but as the administrator for this site I’ve got this little toolbar at the top of the window that graphically shows how many visits the site gets. It’s not something I really care about, but I did like the predictable wave-like plot: visits rise until about noon, and then slowly decline over the course of the afternoon and evening, before starting to rise again in the early morning. The tide goes in, the tide goes out, and you can’t explain that…OK, except that I can, because it tells me I have a predominantly American audience and it’s just a reflection of human daily activity levels in my hemisphere. That’s another reason to not attach much significance to those numbers.

Except…over the last few weeks, the rhythm has been disrupted. The waves are gone. I’m getting site activity all night long, which makes me suspect this isn’t human activity. On closer inspection, site views have also more than doubled, which sounds like a good thing, except that I seem to be talking to non-human entities. Not aliens, though: AIs scouring the web.

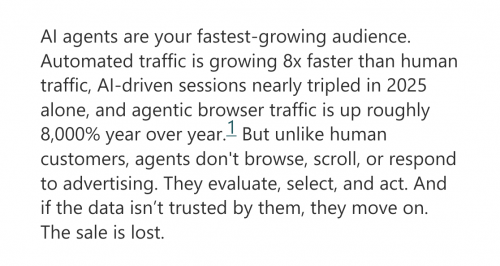

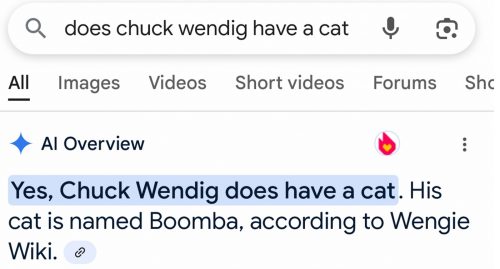

Then I saw this comment on Mastodon:

It’s all artificial, and not at all intelligent. They’re not contributing anything, they’re not the audience I want to talk to, and I think all they’re going to do is jack up my hosting expenses.

If it is aliens, though, welcome. Leave a comment. I’m sure many people here would love to have a conversation with you.