I know, I’m getting a reputation as that guy who hates Elon Musk (I don’t, I hate hype), but his latest is just too much bullshit. He has bought a company called Neuralink, which has the goal of creating brain-machine interfaces (BMIs). OK so far. These interfaces are cool, interesting, and promising, and I’m all for more research in this field. But Musk gets involved, and suddenly his weird transhumanist-wannabe fanboys start hyperventilating. I double-dog dare you to read this puff piece, Neuralink and the Brain’s Magical Future. It begins roughly here, with the claim that Musk is going to build a Wizard Hat to make everyone super-smart:

Not only is Elon’s new venture—Neuralink—the same type of deal, but six weeks after first learning about the company, I’m convinced that it somehow manages to eclipse Tesla and SpaceX in both the boldness of its engineering undertaking and the grandeur of its mission. The other two companies aim to redefine what future humans will do—Neuralink wants to redefine what future humans will be.

The mind-bending bigness of Neuralink’s mission, combined with the labyrinth of impossible complexity that is the human brain, made this the hardest set of concepts yet to fully wrap my head around—but it also made it the most exhilarating when, with enough time spent zoomed on both ends, it all finally clicked. I feel like I took a time machine to the future, and I’m here to tell you that it’s even weirder than we expect.

But before I can bring you in the time machine to show you what I found, we need to get in our zoom machine—because as I learned the hard way, Elon’s wizard hat plans cannot be properly understood until your head’s in the right place.

I dared you to read it, because I’ll be surprised if anyone can plow through it all: it goes on for almost 40,000 words (I know, I pulled it into a text editor and confirmed it), and that doesn’t count all the crappy little cartoons scattered throughout it. When the author says you cannot properly understand it without putting your head in the right place, he means you have to start with sponges and be led step by step through a triumphalist version of 600 million years of evolutionary history, which is all about a progressive increase in the complexity of brain circuitry. It’s an extremely naive and reductionist perspective on neuroscience and intelligence that presumes that all you have to do is make brains bigger and faster to be better, and that computers extend the “bigger” part but are limited by the speed of interfaces, so all we have to do is improve the bandwidth and we’ll be able to battle the AIs that Musk thinks will someday threaten to rule the world.

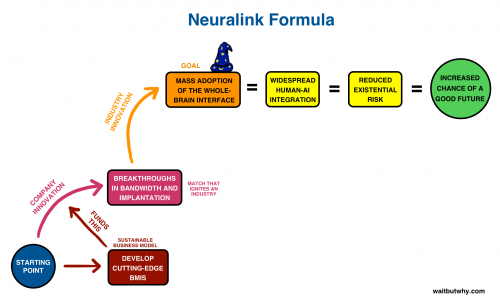

All the verbiage is a gigantic distraction. It’s virtually entirely irrelevant to the argument, which I just nailed down for you in a single sentence…without bogging you down in a hypothetical history of flatworms and a lot of simplistic neuroscience. He summarizes Elon Musk’s glorious plan in yet another crude cartoon:

It is accompanied by much grandiloquent noise and promises of planetary revolutions, but what needs to be asked is “How much of this is real?” The answer is…pretty much none of it. We are currently in the little blue ball at the lower left labeled “starting point”, and Musk has bought a company that is doing tentative, exploratory research on building BMIs (I guess that this whole field is new enough that they are all, by default, “cutting edge”). Everything else in the diagram is complete fantasy. Elon Musk has bought a company, and is cunningly trying to inflate its value by drowning the curious in glurge, techno-mysticism, and making shit up, which, because he has this mystique among young male engineers, will probably succeed in making him more money and fame, without actually doing anything in the top two thirds of that cartoon.

I do rather like how the third step is “BREAKTHROUGHS in bandwidth and implementation”. You could replace it with “And then a miracle occurs…”, and it would be just as meaningful.

Let’s add a little more reality here: Musk has a BS in physics and economics, and started a Ph.D. in engineering, which he dropped out of. He has no education at all in biology or neuroscience.

Another shot of reality: he’s buying this company in collaboration with Peter Thiel’s venture capital company. You remember Thiel, right? Wants to prolong the life of old rich people by transfusing them with the blood of the young? Libertarian acolyte of Ayn Rand who is now advising Trump on policy? If you think this is a recipe for a post-Singularity paradise, looking at the people backing it ought to tell you otherwise.

So why are these filthy rich people getting involved in this nonsense? Let’s ask Elon.

Long Neuralink piece coming out on @waitbutwhy in about a week. Difficult to dedicate the time, but existential risk is too high not to.

— Elon Musk (@elonmusk) March 28, 2017

Fear and ignorance, like always.

They’ve imagined a huge, shadowy existential risk which does not exist yet — you might as well drive your decisions by the possible threat of invasion by Mole People from Alpha Centauri (oh, wait…they also fear aliens). They don’t know how AIs will develop or what they’ll do — nobody does — and they lack the competencies needed to guide the research or assess any risks, but they’ve got a plan for generating all the benefits. These guys are as terrifying to me as the Religious Right, and for all the same reasons.

They have fervent worshippers who will vomit up 40,000 words based on inspiration and wishful thinking, and then wallow about in the mess. It’s possibly the worst science writing I’ve encountered yet, and I’ve read a lot, but still, take a look at all the commenters who want it to be true, and regard grade-school and often incorrect summaries of how brains evolved to be informative.

Wow. I probably own the same set of crumbling cyberpunk paperbacks that Musk does, but I always assumed they were fiction.

I just hope that his excursions into neuro flapdoodle don’t interfere with the genuinely good stuff he’s doing (the cars and the battery/solar roof combo).

Wow. Sounds like someone wants to be Stross’s Manfred Macx. (Accelerando).

Fact is, we do face a serious existential risk: resource depletion and environmental degradation (of which climate change is only one, albeit arguably the biggest, piece) could wipe out nine-tenths of us by famine, war, and general mayhem, and leave the survivors scratching for existence in a landscape out of every post-apocalypse movie ever made. And Musk’s batteries may be among the things that help us avoid that fate. AIs run amok, however, are way down the list of stuff worth worrying about.

Read about how Tesla treats its workers and you might decide to hate Elon Musk after all.

http://www.mercurynews.com/2017/02/09/tesla-worker-long-hours-low-pay-and-unsafe-conditions/

This kind of nonsense is not confined to North America:

https://www.theguardian.com/technology/2017/apr/18/robot-man-artificial-intelligence-computer-milky-way

Elon puts on his robe and wizard hat.

Imagine if eternal life were to be discovered in this country, and it wasn’t for everyone, but just the rich.

Just imagine that happening right now. And think about who would live forever.

Yeah.

My go-to meme on this sort of nonsense is getting an extra-heavy rotation these days http://e.lvme.me/r9id6xt.jpg

off topic (sideways)

I want that neural interface to a GPS SatNav device. Hear me, Garmin? Why look at one’s phone to see how to get from A to B and how long it will take? This way one can just start thinking about it and >Bingo!< the way is known, with a reasonable estimate of ETA.

I got my money here waiting for it. hear me? I’m waiaiaiaiaiaiaiaiaitinggggggg…

slithey, discard those meat-person notions of “travel” and “places.” Discard them NOW.

Oh great, another layer in the hi-tech, transhumanist, infinite-growth Ponzi scheme:

1. Big sunk costs in cars that might turn a profit one day. Boutique shop is worth more than GM, because: growth!

2. Big investment in batteries that might turn a profit one day. Finances unclear: Nevada State Treasurer Dan Schwartz calls it a “Ponzi scheme”

3. Hyperloop: a vacuum tube roller coaster with all the benefits of a plane and the cost of a train. Just keeping the passengers under 4G will require hugely cheaper bridge and tunnel building technology. If you had either one of those, you would have a $1B product without even needing to build the damn tube.

4. SpaceX: Thunderbirds are go! We’ll just increase ISPs from 380 to 420, have parts go from +1000 degrees to absolute zero repeatedly, but be able to reuse them in 48 hours. Also, send people to Mars, so this is going to be 99.44% safe.

I read it and suffered a seizure

@4 Claire Simpson

I was going to say myself that this feels like Musk’s latest distraction from the labor unrest at Tesla.

@gorobei #11:

I don’t think the rockets are up there long enough to radiate away much heat before re-entry. They’d need to be in an orbit which kept them in Earth’s shadow for them to even drop below -70C.

@richwoods #14:

I admit to a bit of exaggeration on the lower bound, but the problem is not the skin temperature, it’s the engine: really hot bits with ablative cooling, radiative cooling, and cooling from preheating the liquid oxygen. It’s doable to build a reusable engine that doesn’t need a tear-down every 3 flights, but Musk’s numbers for combined efficiency/cost/reliability are just insanely optimistic.

It’s pretty easy to launch one rocket every six months. Cycling one rocket engine in a static test stand 10 times in 20 days is a good way to see where theory and practice start to diverge.

I hate how cool, interesting, plausible technologies like brain-machine interfaces get a bad rap because some of the people pushing for them have wild, implausible ideas about what they’ll be able to do. The same goes for a lot of other technology that gets misrepresented by hyperbolic futurists.

@gorobei #15:

I wouldn’t disagree with that. He’s bound to overstate things before reality creeps in and refines his numbers for him. There are far too many extremely wealthy people around at the moment who are making various technology claims based upon a mix of dangerous self-belief and calculated sales-spiel walkback. They’re probably better than a lottery ticket investment, but not always by much.

gorobei @11

However much Musk is mishandling those first two projects, making cars that run on something other than gasoline and being able to store energy collected using photovoltaics are important to stopping our reliance on fossil fuels. I just hope that Musk doesn’t end up making the technology look bad by managing his projects poorly.

gorobei: Tesla has some very dodgy financials, but Ponzi scheme has a very specific meaning that I don’t believe anyone has established applies to Tesla. Unfortunately “Ponzi” has become the go-to word for many finance writers whenever a scheme raises suspicions.

More to the point, that quote from the Nevada Treasurer was directed at Jia Yueting, a Chinese businessman whose company hopes to compete with Tesla. Schwartz was not talking about Tesla itself.

I assume that “right place” for your head would be up your ass.

I need a brain-machine interface like I need a hole in the head!

How the fuck can you non-invasively implant a device in the brain? Implanting a device in the brain is invasive. Sometimes, that’s worth the risk for medical reasons, and those occasions will likely become more frequent, but the risk is inherent.

And – completely incidentally – gives Elon Musk and the NSA the ability to monitor and control your thoughts!

Naturally, Elon Musk doesn’t want you thinking bad thoughts that might get in the way of his projects. For now, he is unfortunately limited to plausible misdirection and the use of pliant numpties in the media in trying to ensure that you don’t think about how every advance in communication technology, whatever its benefits, has been listened in on, intercepted, bugged, hacked, etc., by corporations, criminals and “security” services.

To be fair, Tim Urban does have something to say about possible downsides.

That’s it – out of 40,000 words. And note the misdirection again. ISIS are a nasty lot, but how does their reach, the resources at their disposal, their ability to influence what laws are passed, compare to that of Elon Musk – suck-up to Donald Trump – or the NSA?

Have you read the same guy’s article on cryonics? http://waitbutwhy.com/2016/03/cryonics.html

Looks like he is able to justify any pseudo-scientific wishful thinking.

chrislawson: thanks for the correction. I should have drilled into the actual article rather than make assumptions based on the google result.

Thing is, we already have a technology that could significantly improve the intelligence of the human species. It’s a non-invasive neural reconfiguration technique called “education”, and currently it is woefully under-used. Perhaps Mr. Musk would be better served funding more of that.

When good quality education is freely available to everyone on the planet, then we can start to think about drilling holes in people and stuffing their brains with wires. Or, more likely, since we’ll all be so much more educated, we’ll give that one a miss.

This will not end well.

Shakespeare wrote great stuff because it was hard to write fast, which gave him time to think about what he was writing.

The keyboard and the computer give us twitter. When our thoughts come without thinking we will be at war with everyone within seconds.

This sounds like cyberpunk stuff alright. I was playing a cyberpunk-style RPG recently with some friends and it got me thinking about how the real world and the cyberpunk scenario split off and went in different directions.

There seem to be only a rather small number of changes. Corporations in the real world prefer to be the power behind the throne rather than sit openly on the throne themselves. Corporations don’t hack things themselves, they prefer to have governments do it for them. So there is less crime and less sophisticated crime when it does happen because governments prefer to have a monopoly on sophisticated hacking. Oddly enough one could also make a reasonable case for real world privacy being less than in these dystopian cyberpunk fantasies where privacy is considered nonexistent.

#26 spot on.

Now I need to rest my overtaxed brain.

until we learn how the brain thinks fundamentally and in some detail the idea of obtaining access to all data or all knowledge through a direct brain machine interface will be a pipe dream.

the likely direction such research and development and implementation will go is in the area of brain control of prosthetic devices for mobility.

speaking of pipe dreams where is my bong gotten to

uncle frogy

@cartomancer: yep, education. I have always contended that it’s cheaper and more effective to have babies than build human-like AIs.

Regarding long life for the wealthy, there are lots of science fiction and fantasy stories on that topic. I like ‘Drunkard’s Walk’ by Frederik Pohl.

@25, cartomancer

SO much this. People don’t seem to realize how much their own brains can be capable of. If only they viewed their brains the way body-builders viewed their bodies. Also, people tend to have a limited view of what “education” is, as if it has to be passively listening, rather than learning through thinking (with some helpful guidance on how to think) and doing.

Speaking of lack of imagination, it was pointed out to me recently that the dangerous Artificial Intelligence Overlords might not even be recognized as such if they aren’t made out of the materials people expect them to be. For example, powerful organizations (such as corporations, or even cultures) operate on a set of rules/algorithms, so are they “robots”? Whatever the case, they are real powerful things that exist right now and surely they deserve consideration similar to the risks of bad AI. There’s also just as much promise that they can use their power for good, if we make them right.

So, MIRI folk, get on that. Even their research page says “For safety purposes, a mathematical equation defining general intelligence is more desirable than an impressive but poorly-understood code kludge.” Sounds good, but poorly-understood kludge organizational cultures are things that exist right now. Which my website idea should be able to make somewhat transparent, but alas.