I thought Xians were supposed to celebrate Easter with colored eggs and church services and a nice family dinner. I was wrong. They celebrate it with threats of bombings and cursing and mocking religions. Good to know.

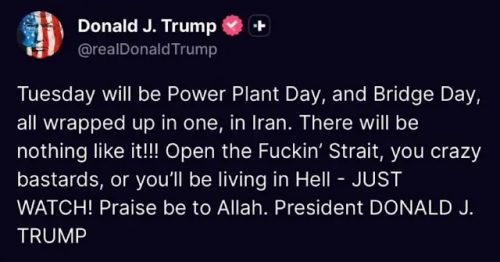

Tuesday will be Power Plant Day, and Bridge Day, all wrapped up in one, in Iran. There will be nothing like it!!! Open the Fuckin’ Strait, you crazy bastards, or you’ll be living in Hell – JUST WATCH! Praise be to Allah. President DONALD J. TRUMP

I think I’ll pass on the whole fuckin’ holiday.