Script down below, if you’d rather just read it.

One of the messages I’m trying to get across in this series is that science is not some abstract ideal that virtuously aspires to the truth. It’s embedded in culture, carried out by human beings steeped in culture, and there’s no arguing that it modifies culture. Yet somehow there is a common attitude that science is something outside of culture, that it’s a shining mirror reflecting the nature of an external reality. This is not to deny that there is an external reality (I’m a materialist who denies the supernatural, so I’m quite supportive of the idea of science as a tool to study nature), but we have to be constantly on guard against the kind of glib positivism that turns science into dogma.

The thing is that good science is conscious of this problem of forgetting that we are swimming in human nature. Here’s a quote from Richard Feynman, a very popular quote that I’ve used myself.

Science is a way of trying not to fool yourself. The principle is that you must not fool yourself, and you are the easiest person to fool.

It’s a great message because it’s saying that science is a process, not a result, and that one of the key elements of that process is to correct for ubiquitous bias. Even brilliant physicists are subject to bias, and one of the purposes of science is to provide a kind of checklist of things to do and not to do to prevent you from screwing up.

When you travel, you probably have a kind of mental checklist: don’t forget your toothbrush, clean underwear, your ID. Same with science: don’t forget to have a control, question your interpretations, call up that person who disagrees with your theory and run your observations past them. Only longer. It is implicit in the conduct of science that you are a flawed ape struggling against the limitations of your mind and your own prior beliefs…your cultural presuppositions.

This has been known since the beginning of what we call science. Let’s pin that to a specific date: 1620, and Francis Bacon’s Novum Organum. This is generally considered to be the great work that defined a scientific method and provided a framework for research, with an emphasis on inductive reasoning from empirical evidence. But also, he includes a great many cautions: he warns readers of the dangers that arise from our inbuilt biases.

He explains that just being human is a limitation on our perceptions and imaginations — there is far more to the universe than what we can see or touch or dream about.

He explains that as individuals, we are shaped by our education and our experience, that we live in a framework of ideas that we gathered to ourselves and that color our vision.

He explains that the models and theories we use to understand the world are all also flawed, and we have to be wary of whether we are blinded by our preconceptions to observations outside the scope of our suppositions.

The message is that we are not objective observers standing outside that which we are describing, but are also part of a system of knowledge that affects our understanding, and that science is a protocol to help you catch out your unwarranted assumptions.

There is another flaw, one that Bacon called “the most troublesome of all”, and that’s what I’m going to talk about today. That is the problem of language. I’m sure we’ve all struggled with this:

For it is by discourse that men associate, and words are imposed according to the apprehension of the vulgar. And therefore the ill and unfit choice of words wonderfully obstructs the understanding. Nor do the definitions or explanations wherewith in some things learned men are wont to guard and defend themselves, by any means set the matter right. But words plainly force and overrule the understanding, and throw all into confusion, and lead men away into numberless empty controversies and idle fancies.

And, really, Bacon was on a tear over this problem, going on at length in his aphorisms. I really wonder what he’d say if he could witness modern political discourse or, worst of all, the internet. He’s really in high dudgeon about how words get abused by “learned men”.

Whence it comes to pass that the high and formal discussions of learned men end oftentimes in disputes about words and names; with which (according to the use and wisdom of the mathematicians) it would be more prudent to begin, and so by means of definitions reduce them to order. Yet even definitions cannot cure this evil in dealing with natural and material things; since the definitions themselves consist of words, and those words beget others: so that it is necessary to recur to individual instances, and those in due series and order…

Please note: he’s not addressing the malicious use of language to lie and mislead here. He’s pointing out how even people of good faith, trying their best to communicate, can be stymied by the need to use definitions to explain their words, and those definitions are made of words, and you’re all going to be buggered by human nature, personal bias, and the fashionable prejudices of the time. He’s not at all pessimistic, though. A subtitle to his book includes a quote from Daniel 12:4: “many shall run to and fro, and knowledge shall be increased.” His point is that we have to be conscious of these obstacles all the time, and strive to overcome them, in order to progress.

That Feynman quote I gave at the beginning? It’s the same sentiment. It’s hard work to overcome our human influences and limitations.

So today I’m going to talk about one of those words that force and overrule the understanding, and throw all into confusion. I could talk about the word “theory”, which we all know is confused by the apprehension of the vulgar, but I’m going to pick something more challenging.

The word of the day is “genetics”.

I’m not saying genetics is wrong at all, but what’s incorrect is the public’s understanding of genetics — that they get taught a few basic concepts, they think they know it all, and then it’s off to the races, with misconceptions building on misconceptions until what I see pundits and YouTube “experts” promoting has only a tangential relationship to what real geneticists say.

So let’s start at the beginning. Here’s a potted explanation of genetics as Mendel would have understood it, and which, unfortunately, is about as far as most people have gone. So, really, the public knowledge of genetics is arrested at about the level of 1865, with a smattering of poorly understood buzzwords, like “DNA” or “genes”, shoveled on top.

This is probably what most of you learned in high school. Mendel identified a small number of traits in the common pea plant, like round seeds vs wrinkled seeds, yellow seeds vs green seeds, etc. He crossed plants with these traits, and worked out the rules of inheritance. The factors behind these traits (we call them genes nowadays) are paired, and some forms are dominant over recessive forms.

These words should all sound familiar to you. Don’t worry, there will not be a test.

What this means operationally is that if you have a plant that carries both the round trait and the wrinkled trait, it will only express the dominant trait in the phenotype, which happens to be round.
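As a rough illustration of that rule, here is a minimal Python sketch of a cross between two plants that each carry both forms. The allele labels `R` (round) and `r` (wrinkled) and the function names are assumptions made up for this example; they are not Mendel's notation.

```python
from collections import Counter
from itertools import product


def cross(parent1, parent2):
    """Count offspring genotypes over all equally likely gamete pairings."""
    # Sorting puts the uppercase (dominant) label first, so "rR" becomes "Rr".
    return Counter("".join(sorted(a + b)) for a, b in product(parent1, parent2))


def phenotype(genotype, dominant="R"):
    """Complete dominance: one copy of the dominant allele is enough."""
    return "round" if dominant in genotype else "wrinkled"


offspring = cross("Rr", "Rr")  # both parents carry round and wrinkled

phenotypes = Counter()
for genotype, count in offspring.items():
    phenotypes[phenotype(genotype)] += count
```

Enumerating the four equally likely pairings gives one `RR`, two `Rr` and one `rr`, which is the familiar 3:1 ratio of round to wrinkled seeds.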

And that’s pretty much it, all that most people remember of grade school genetics, and often that’s all that they remember of college genetics. It’s too bad, because it is all conveniently oversimplified. Again, not wrong, but misleadingly trivial, allowing lots of people to think they know genetics when all they’ve got is a grasp of a cartoon.

Part of the reason for that is Mendel’s good luck (or perhaps, selective reporting). All 7 of these genes have exactly 2 forms, or alleles, each, which is a nice simplification, but has a lot of people thinking that’s universally true. None of his 7 genes exhibit phenomena like linkage, or sex-linkage, or codominance, or variable penetrance, or any of the other complications that we see over and over again.

Some days I think it’s too bad that one of the simplest examples of diploid genetics is this basic, go-to teaching example, because it leads to a kind of overconfident cockiness in students who master it. On the other hand, I’ve seen so many students struggle with even this simplistic scheme, and I can’t quite imagine that jumping straight into complexity would help matters.

You see, I teach genetics. One of the things I see over and over again is students who have mastered the simple Mendelian model — they’re smart young men and women, they caught on fast in high school — and they’ve got this concept down cold. There are two kinds of alleles, dominant and recessive, and only two. There is round vs wrinkled, or yellow vs green, or tall vs short, everything fits into this convenient paradigm.

It’s the tyranny of the binary. We humans, or at least we humans in Western culture, tend to reduce everything to simple choices: there are males and females, black people and white people, Republicans and Democrats, good and bad, delicious and yucky. And look here: Gregor Mendel confirmed the existence of simple dichotomies with Science!

Except it’s not true. As soon as you step outside the seductively simple Mendelian model, it falls apart.

But we constantly reinforce it. I can fall into what I call the Big A/Little A trap when teaching, myself. You’re trying to explain a simple cross on the blackboard, so you set up a convention: big A is the dominant allele, little a is the recessive allele, and now let’s show how a cross between these works. And the students readily grasp it. There’s capital and lowercase, a binary. There’s nothing in between or different. Simple, and supported by a typographic limitation.

Except it’s not, again. Why not bold A, italic A, serif A, script A, comic sans A, every font in the computer A, and then different colors: pink A, blue A, yellow A? And then the combinations: purple italic Times New Roman A, underlined. The catch is that these are hard to draw on a chalkboard, and they make the simple problems more complicated, and sometimes you want to ease them in, even at the cost of getting some students caught in an invalid paradigm — you just hope to yank them out later.

In case you’re wondering, real geneticists are fully aware of the complexity, and know that a single gene may have thousands of alleles, not just two, and the usual convention is to attach a superscript to the gene name. Sometimes that superscript is messy and cryptic, but it does allow for an infinite number of descriptors for variants.
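To see how quickly the big A/little a picture stops being adequate, here is a small sketch that counts the distinct diploid genotypes possible at a single locus once there are more than two alleles. The allele labels (`A1`, `A2`, ...) are placeholders, not real allele names, and the sketch assumes an ordinary diploid locus with two copies per individual.

```python
from itertools import combinations_with_replacement
from math import comb


def diploid_genotypes(alleles):
    """List every unordered pair of alleles, including homozygotes."""
    return list(combinations_with_replacement(alleles, 2))


def genotype_count(n_alleles):
    """n homozygotes plus C(n, 2) heterozygotes, i.e. n(n + 1) / 2."""
    return n_alleles + comb(n_alleles, 2)


two = diploid_genotypes(["A1", "A2"])               # the textbook case
four = diploid_genotypes(["A1", "A2", "A3", "A4"])  # a modest allelic series
```

With two alleles there are 3 genotypes; with four there are 10; with a thousand, `genotype_count(1000)` gives 500500. The capital/lowercase convention has no room for any of that.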

Still, I have literally seen students break down and weep when faced with a problem involving more than two alleles. They’re not stupid, they’ve just learned a conceptual model that works fine for simple problems, but that totally breaks down once you move beyond Mendel. The really clever ones try to rationalize it by saying that in diploid organisms there can’t be more than two alleles at a single locus, and then I have to gently crush their excuses by bringing up tandem duplications, copy number variants, and translocations. One of the hard things about genetics is that we have so many ways to destroy everything you learned in grade school. One of the disappointing things about the public understanding of genetics is that so many people have never gotten beyond big A/little A, dominant and recessive, and Punnett squares and therefore get everything wrong.

Speaking of Punnett squares…I have a love/hate relationship with them. They’re great for helping students visualize the most basic, basic stuff of a cross, but they’re useless after about the first week of a genetics course, the bit where you’re doing a simple review of elementary ideas. Beyond that, they fail hard.

It’s heart-breaking to be grading an exam and come across a student who, faced with a simple problem that you can answer algebraically in your head in about 30 seconds if you use the right method, instead tried to force it into a 7×7 Punnett square, and clearly spent the whole hour trying to plug and chug through a mechanical solution.
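The post doesn't say what the exam problem was, so the following is only a sketch of the general idea, using an assumed example: a cross of two triple heterozygotes, with the three genes assorting independently and showing complete dominance. The gene labels and function names are hypothetical. The "algebraic" route multiplies per-gene probabilities; the Punnett-square route fills in every cell.

```python
from fractions import Fraction
from itertools import product


def p_dominant(parent1, parent2):
    """One gene: probability the offspring shows the dominant phenotype."""
    pairs = list(product(parent1, parent2))
    hits = sum(1 for a, b in pairs if a.isupper() or b.isupper())
    return Fraction(hits, len(pairs))


def product_rule(genes):
    """Multiply per-gene probabilities (valid only for independent genes)."""
    result = Fraction(1)
    for parent1, parent2 in genes:
        result *= p_dominant(parent1, parent2)
    return result


def punnett_square(genes):
    """Brute force: build every gamete, then check every cell of the square."""
    gametes1 = list(product(*(p1 for p1, _ in genes)))
    gametes2 = list(product(*(p2 for _, p2 in genes)))
    cells = list(product(gametes1, gametes2))
    hits = sum(
        1
        for g1, g2 in cells
        if all(a.isupper() or b.isupper() for a, b in zip(g1, g2))
    )
    return Fraction(hits, len(cells)), len(cells)


trihybrid = [("Aa", "Aa"), ("Bb", "Bb"), ("Cc", "Cc")]
quick = product_rule(trihybrid)          # (3/4) ** 3 = 27/64
slow, n_cells = punnett_square(trihybrid)  # same answer, 64 cells later
```

Both routes give 27/64, but the first is three multiplications and the second is an 8×8 grid. The shortcut depends on the independence assumption, which is exactly the kind of concept the mechanical method lets you avoid thinking about.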

This is the tyranny of method. You’ve got a procedure, and instead of thinking about the concepts, you fall back on rote repetition to solve the problem. It’s a very human thing to do.

I feel a lot of sympathy for my math colleagues. Whenever educators come up with a new way of doing math, the jokes follow fast and furious.

But I understand completely what they’re doing. They’re trying to break habitual thinking and force people to consider the underlying concepts. It’s a good thing. Mindless repetition of old strategies is a trap that’ll wreck you as soon as a new context arises, so flexibility and comprehension are more important than just tapping numbers into a calculator.

But back to genetics. Here’s another problem with good old Mendel: he wasn’t wrong, but his examples are very sticky — memorable, easy to understand, and carrying the illusion of universality. Everything begins to look like a pea plant, because it’s such a powerful concept.

And thus was launched an era of reductionist genetics, which in many ways, we’re still in. The strategy was straightforward and successful — and that’s important, this kind of genetics works and gets stuff done — in which you break the genome in some way, or look for spontaneous mutations, that produce strong, discrete, discontinuous phenotypic variants. These exist, so it’s not wrong, and it was a good way to bootstrap genetics, but it also colors our perception of how genes work.

Let me give you an example.

Shortly after Mendel’s work was rediscovered in the early 20th century, Thomas Hunt Morgan began his own search for the rules of inheritance in his favorite organism, the fruit fly Drosophila. His first step was to look for variations, and he found one: among all of his milling hordes of red-eyed flies, he found one with white eyes, which he then crossed to his other red-eyed flies. As expected from Mendelian genetics, the trait disappeared in the first generation — it was recessive — and reappeared when he crossed first-gen flies to each other.

This was great stuff. It simultaneously confirmed Mendel’s rules, and extended them, because it turned out this white-eyed variant was sex-linked, a phenomenon that Mendel had not seen, so that it was expressed with a different frequency in males than in females, just like color-blindness in humans. Morgan worked out the rules for sex-linked inheritance.
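Here is a minimal sketch of why a sex-linked trait shows up at different frequencies in males and females. It assumes the standard picture: the gene sits on the X chromosome, females carry two copies, and males carry one copy plus a Y that has none. The labels `+` (red, dominant) and `w` (white) and the function name are choices made for this example.

```python
from collections import Counter
from itertools import product


def x_linked_cross(mother, father_x):
    """Tally (sex, eye color) over the four equally likely offspring classes.

    mother:   her two X-linked alleles, e.g. ("+", "w")
    father_x: the single allele on his X chromosome
    """
    outcomes = Counter()
    for maternal, paternal in product(mother, (father_x, "Y")):
        if paternal == "Y":
            # Sons get their only X from the mother.
            sex, alleles = "male", (maternal,)
        else:
            sex, alleles = "female", (maternal, paternal)
        color = "red" if "+" in alleles else "white"
        outcomes[(sex, color)] += 1
    return outcomes


# White-eyed male crossed to a true-breeding red-eyed female.
f1 = x_linked_cross(("+", "+"), "w")

# First-generation flies crossed to each other: carrier female, red male.
f2 = x_linked_cross(("+", "w"), "+")
```

In this sketch every first-generation fly is red-eyed. In the second generation the overall ratio is still 3 red to 1 white, but all of the white-eyed flies are male: half the sons are white-eyed and none of the daughters are.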

Furthermore, he kept going, collecting more and more Drosophila variants, building up a library of flies, and doing many more crosses; he had far more than 7 traits to play with. And along the way he figured out that Mendel’s 2nd law, independent assortment (the idea that any two traits segregate independently into the gametes), wasn’t always true. He discovered the phenomenon of linkage, which allowed him to make maps of the virtual locations of genes on chromosomes.
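The arithmetic behind that kind of map is simple enough to sketch. The usual convention is that 1% recombinant offspring between two genes corresponds to one map unit (a centimorgan, named for Morgan). The offspring counts below are invented purely for illustration, and the function names are hypothetical.

```python
def recombination_frequency(parental, recombinant):
    """Fraction of offspring carrying a new combination of the two traits."""
    return recombinant / (parental + recombinant)


def map_units(parental, recombinant):
    """Map distance in centimorgans: percent recombinant offspring."""
    return 100 * recombinant / (parental + recombinant)


# Hypothetical test cross: 830 offspring look like one parent or the other,
# 170 show a reshuffled combination of the two traits.
distance = map_units(parental=830, recombinant=170)
```

Independent assortment would predict about 50% recombinants. A figure well below that, like the 17% in this made-up example, is the signature of linkage, and comparing such figures between pairs of genes is what lets you order them along a chromosome. This simple proportion is only reliable for fairly short distances, since multiple crossovers between distant genes go uncounted.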

Morgan deserved the Nobel prize he would win for this work. The principle of mapping is a prelude to all the genome sequencing stuff we do nowadays — just the idea that genes had physical locations on a chromosome was a great insight, and this was accomplished at a time before anyone knew what a gene was or did, and when there was only a vague inkling that DNA played a role in heredity.

None of this mapping is wrong, but it does have unintended and invalid implications. A modern geneticist might be fully aware of all the other information that has been pared away to make a simplified presentation of the complexity of the full story here, but throw this down in front of a public school graduate, or a college freshman, or the President of the United States, and they see something far different. They see confirmation of the idea that their identity is fixed in the genome. Race and class are encoded here. This is an astrologer’s map of the stars, or a palmist’s chart of lines on your hands. The same impetus that drives people to consult phrenology diagrams leads them to misinterpret these data. They don’t understand it, but they like the certainty of a determined nature.

So we get swarms of “a gene for X” opinions. There is a gay gene. There is a gene for intelligence. There are genes for nail biting, cigarette brand preferences, and what kind of car you drive. Don’t believe me? CBS News said so!

Jim Lewis and Jim Springer were identical twins raised apart from the age of 4 weeks. When the twins were finally reunited at the age of 39 in 1979, they discovered they both suffered from tension headaches, were prone to nail biting, smoked Salem cigarettes, drove the same type of car and even vacationed at the same beach in Florida.

The culprit for the odd similarities? Genes.

I wonder if there is a gene for credulity. I would also love to see where the map of Florida is encoded in the genome.

Here’s a cartoon of the situation that’s perpetuated by misinterpreting evidence, like Morgan’s gene map.

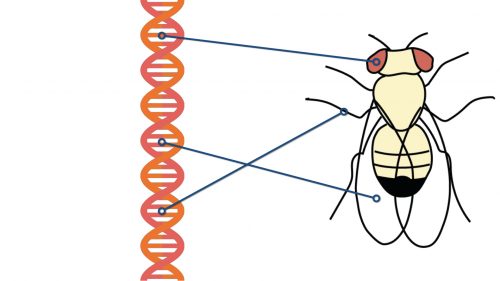

On the left, we have a strand of DNA, with genes on it. On the right, a fly. When we start our mapping exercise, we find connections between mutations to the DNA and changes in the phenotype, and we start cataloging them. So, for instance, we find a gene that affects eye color. We find a gene that affects bristles on one of the legs. We find a third gene that affects the shape of the wings.

All is well. We’re gathering data. We can naively think that as we get more and more, we’ll be able to fill in all the connections and find a one-to-one mapping of genes to discrete traits. We’re not going to succeed. And geneticists figured this out in the earliest years of genetic analysis.

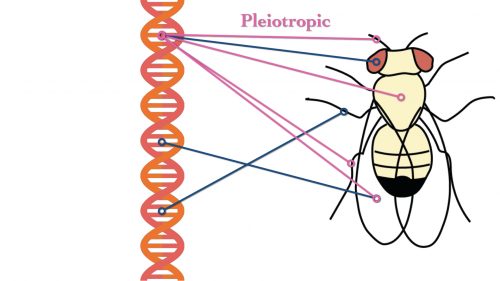

One thing that happened as they started building up this list of genes is that immediately they discovered that multiple genes contribute to each trait — that multiple interlinked factors work together to build each feature.

Traits are polygenic. That simply means that multiple genes contribute to each one, so now in my cartoon I have to draw multiple connections from DNA to any one attribute, such as eye color.

Let me get specific. What does that white gene that Thomas Morgan discovered actually do? As it turns out, the pigments of the fly eye are not encoded by the white gene. They’re actually two molecules, drosopterin (a bright red pigment) and xanthommatin (a brown pigment), that aren’t encoded in the genome at all, although the enzymes that synthesize them are. What this means is that of necessity you can’t explain something as seemingly simple as the color of a fly’s eye by pointing to a gene: there’s a battery of them working together in the biosynthetic pathways, and there are also genes that are support players.

Like white.

White doesn’t directly create eye color — it’s a transport protein. It’s responsible for pumping the precursors to the biosynthetic pathways that make the color. A mutation in this gene is comparable to breaking down the truck that delivers supplies to a factory.

You know, it’s kind of weird how something as seemingly simple as eye color in a fly requires an intricate array of genes and proteins and cells working in concert, yet some people still try to claim that intelligence is the product of a gene.

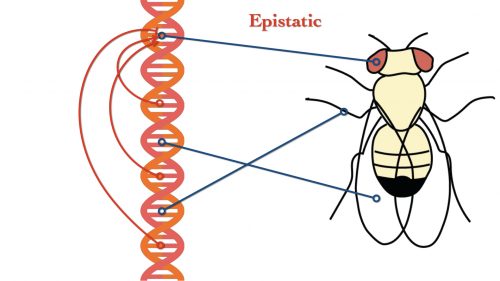

So multiple genes contribute to each trait. But we’re not done. It also turns out that each gene contributes to multiple traits.

This is a phenomenon called pleiotropy. If we look at just white again, we can see that it plays a role in many phenomena, not just eye color, but may affect processes all over the fly. Geneticists are comfortable with that, too. If you visit flybase.org and look up the gene ontology for white, there are all kinds of surprising connections.

For example…

The phenotypes of these alleles manifest in: male reproductive system; gonad; excretory system; head capsule; testis sheath; frons; rhabdomeric photoreceptor cell; portion of tissue; larva; epithelium; main segment of Malpighian tubule.

So in addition to regulating the transport of precursors to eye pigments, white also plays a role in the reproductive system, the fly equivalent of kidneys, and the frons, which is the insect equivalent of the forehead. It’s also involved in memory and orientation with respect to gravity. This is not unusual! For example, another gene, even-skipped, was first recognized for its role in segmental patterning in the very early fly embryo, but it also plays a later role in neuroblast patterning and axon pathfinding. Since every cell has a copy of every gene, it should not be surprising that evolution will find novel uses for every tool in the toolbox.

And speaking of even-skipped, one of the reasons it’s expressed in a lot of tissues at different times is that it is a transcription factor. That means its role in the cell is to regulate other genes — it has epistatic interactions with genes.

Another significant kind of interaction is epistasis — the suppression or activation of genes that are not alleles of one another. So gene A binds to a control element of gene B, and can turn it on or off. There are many ways genes ‘talk’ to one another, generating another level of control of gene expression. Whether a particular gene will be expressed in a cell or cell type or even organism is going to depend on what other genes are being expressed, and on signals from the environment.

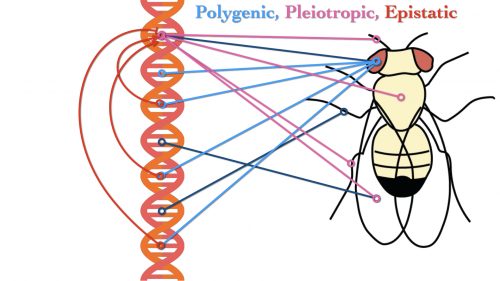

What we’re dealing with is a truly tangled web. When we combine these different mechanisms in translating genotype into phenotype, it ought to be obvious that the old model of mapping genes to traits one-to-one is totally invalid, yet still we get these absurd breathless declarations that science has found a gene for whatever phenomenon has caught the fancy of some uninformed ideologue. There is no “gene for intelligence”. There is no “gene for sexual preference”. There is no “gene for courage”, or “business”, or “politics”. Complex behaviors cannot be reduced to the behavior of a single protein.

I have to pound one more nail into the coffin of simplistic genetic determinism. I mentioned how traits are polygenic; we’re now thinking that is an inadequate term, and it’s more likely that everything is omnigenic.

Genome-wide association studies, where the contribution of multiple genes to a trait is measured, had been leaning in this direction for a long time. There are diseases that are caused by a single defective gene, cystic fibrosis for example. But other complex traits and syndromes seemed to be the product of, perhaps, one gene making a major contribution, and dozens of others contributing to a lesser degree to it. And as more details were worked out, the percent contribution of what was thought to be the primary causal gene declined, and the contribution from an increasing number of secondary and tertiary genes increased. Eventually it got to the point that pretty much every gene in the genome was implicated to a greater or lesser extent.

“…gene regulatory networks are sufficiently interconnected such that all genes expressed in disease-relevant cells are liable to affect the functions of core disease-related genes and that most heritability can be explained by effects on genes outside core pathways.”

This is not to say that some genes can’t have a stronger effect on the condition; rather, it is saying that most of the heritable variation may not be coming from those few strong genes, but from a cloud of less obvious genes that are inherited in a complex fashion.

What does this mean? Is there no predictability to genetics? Not quite. You’re pretty much guaranteed to be born a human, to usually have two arms and two legs, to be an omnivore who consumes proteins and carbohydrates and fats, to need love and to yearn for connections to other people to varying degrees. The stuff that’s shared with all other members of your species is, you could say, hardwired in.

But all the stuff that makes you distinct from other human beings? Part of that is genetic, of course, but it’s a genetics that’s so complex and multivariate that it is impossible to predict, even after the fact. An even larger part of those individual differences is the product of environment and history. The ironic part of all this is that those of us who understand the complexity of inheritance and development are accused of being simpletons, blank-slaters, who ignore the evidence, while the jejune reductionists are the people who really neglect the bulk of the story to favor simplistic deterministic versions of reality.

That’s enough for today. Next week I plan to go gunning for those who mangle another rich scientific concept, evolution. And I don’t mean creationists: let’s rip into evolutionary psychology.

A few links:

Baconian aphorisms: http://fly.hiwaay.net/~paul/bacon/organum/aphorisms1.html

https://www.cbsnews.com/news/twin-brothers-separated-at-birth-reveal-striking-genetic-similarities/

Theory Suggests That All Genes Affect Every Complex Trait

https://www.quantamagazine.org/omnigenic-model-suggests-that-all-genes-affect-every-complex-trait-20180620/

Good stuff PZ.

Nice straw man there PZ. What they’re claiming is exactly what you’re describing – multiple genes contributing to a phenotype.

Your introduction is really powerful! It could also be used in another direction, to explain what’s wrong with philosophy and religion.

Philosophy and religion more resemble a game of Calvinball where the goal is precisely to fool yourself. And others. I like to think Wittgenstein pointed this out, but I could be wrong.

Hi everybody. I saw the Atheist Experience on YouTube and Matt.D said that if I came on here, there would be people happy to answer any questions I might have, regarding god. Is this the right place for that please?

Donald TrumpFan @ #4

Your questions about god(s) can be answered here, but you are off topic for this post. I would wait for a more appropriate post.

If, however, you are looking for the Atheist Experience blog it is located here: https://freethoughtblogs.com/axp/

donald trumpfan @4: “Hi everybody. I saw the Atheist Experience on YouTube and Matt.D said that if I came on here, there would be people happy to answer any questions I might have, regarding god. Is this the right place for that please?”

This particular blogpost probably isn’t a great place to discuss god, as it’s about a common public misapprehension of science. But sure, there’s plenty of blogposts here that you might profitably post questions about god in response to.

An excellent treatise. Wondering if you may also tackle cognitive neuroscience? Specifically, the reductionist argument that if you fully understand the function of the entire connectome, you’ll understand the neural basis of mind. I’m fully committed to the materialist notion that the mind is the result of the activity of the brain. But I have yet to see a comprehensive theory that truly explains the neural basis of consciousness. Similar to your critique of simplistic genetic explanations, the same can be said for “neuroscientific” explanations. I too was guilty of accepting Francis Crick’s dogma that “it’s all just a pack of neurons”. Then I listened to Noam Chomsky who mentioned that there is an entire wing at an MIT library dedicated to studying the nervous system of insects. And no matter how hard we try to generate the honeybee connectome, that will never elucidate its varied complex behaviors. Just as simplistic genetic reductionism won’t succeed, neither will neural reductionism.

Most interesting to me is the educational question: what sequence do you follow when teaching?

It’s a big part of my mental workout when I’m writing my syllabus in intro Physics each year. Textbooks almost always follow a specific chain of topics, which crudely follows a constructivist path through simplified history: first constant acceleration (Galileo) then forces (Newton) then eventually momentum and energy, then rotations, etc.

I see the same patterns in my students: often they have seen the first part of this sequence before, and thus struggle to fit everything into it. They are resistant to the latter material since the first is so familiar.

One textbook (Mazur) opened my eyes to turning this on its head: introduce the things most important to physicists first: start with momentum and energy, since they represent symmetry and conservation rather than cause-and-effect. For experienced students, it means they don’t fall into habits. For new students, it emphasizes the important things up front.

I can’t help but wonder if there’s a shared conversation to be had here. Is there a way we can throw off the shackles of ‘how we were taught’ or the simplified historical?

Some people I know do historical, but add all the complications (similar to Morgan, above) by showing all the hairy problems with the original work. This is great, but is really history-of-science, not science – if you go at the same speed as the forefathers, you’ll never get further than they did.

I think the right answer is the other way, but I’m in the minority…

Thank you so much for this! I am struggling to teach myself the inheritance patterns in chicken plumage color. I was running across words not in my normal vocabulary, like “hemizygous” and “autosomal” which is great. You explain things a little bit differently which was really helpful. The complexity of it all just makes it that much more fascinating.

Thank you to larpar @ 5 and to cubist @ 6 … I’d love to even understand what you guys are on about, I read some posts and made my own little quiz … Ten points if I get a correct answer … One point if I even understand the question … lol :o) take care

You are missing the point. Yes, modern IQ-gene advocates have been forced back to polygenic theories by the brute force of GWAS, but what they are claiming to describe is — multiple genes contributing to a phenotype in an identifiable and predictable way. Which is a lot like PZ’s (admitted, by PZ) simplification and not very much like yours. Nice straw answer?

A friend recently described to me an article about multiple sets of “identical”(-looking) twins whose DNA tests showed multiple major variations – that is, “fraternal” twins with a coincidental physical resemblance. (Sorry, she gave no specifics and is now out of reach regarding details.)

If true, a big boatload of twin psycho-/socio- studies will need dumping/re-doing.

Hmm – a preliminary search indicates that this had reached Scientific American ten years ago. IOW: what our esteemed host just said about unlearning the simple version.

We need a new teaching metaphor.

How about we have a robotic production line. It will assemble a car.

The genes are instructions about building the production line, not the car itself. One gene might govern how materials are sourced. Another may take roughly appropriate materials from that supply to assemble a car component or another assembly device. Each gene is likely to have cascading effects throughout the system.

Cars mate. Their gene sets mix and the child is a new assembly line that will make a new car.

I’m sort of surprised nobody fixated on this part:

It seems to support the “spiritual” and religion, but what it really means is dark matter (whatever it is) and black holes and everything else that we can’t necessarily see with our own eyes, but know to exist from other observations.

I used to be more simplistic in my understanding, believing in things like Gattaca deterministic genetics. But, if we ever get to the point that we can create designer babies with the intelligence, strength, and other attributes we desire, it will still be something of a crapshoot whether they actually achieve some of the more esoteric things like “intelligence” because there is so much of the “nurture” aspect to it as well.

@Pierce R. Butler:

Just as a point of nomenclature, the SciAm article you link to does not suggest that these are actually fraternal twins (that is, twins from two distinct eggs fertilized by two distinct sperm), but rather that surprising differences can arise in individuals who both originally came from the same fertilized egg. The last sentence states: “Maybe we shouldn’t call them identical twins,” Harvard’s Bieber says. “We should call them ‘one-egg twins.'” (probably recalling the technical term, the Greek “monozygotic”)

Around the same time that article came out there was a PBS show (Nova, maybe?) about monozygotic twins. One example I recall involved a pair in which one twin seemed to be pretty severely autistic (mostly nonverbal, behavioral problems), while the other was normal. Let me see if I can dig that up . . .

Preview of Ghost In Your Genes (from 2007) (there seems to be a full version on YT, but with the visual and audio changes of a ripped copy by someone trying to avoid DMCA takedown)

Full transcript of the episode.

I can see now that they were mostly talking about epigenetics, not DNA that was actually different. My mistake.

After digging some more, I recall that the term of art is “somatic mutation”: as each cell divides during development and growth, it can mutate slightly or severely from the original. A single individual might have an early somatic mutation that causes great differences down the line between the tissues that carry the mutation and those that do not.

Here’s a lay discussion of a study of such mutations in monozygotic twins, which might be what you’re recalling hearing about.

Followup to #15 – the actual technical papers:

• Phenotypically Concordant and Discordant Monozygotic Twins Display Different DNA Copy-Number-Variation Profiles.

• Somatic point mutations occurring early in development: a monozygotic twin study.

@ragdish 7

I can give you my impressions for what they’re worth.

<blockquote>…if you fully understand the function of the entire connectome, you’ll understand the neural basis of mind.</blockquote>

The connectome only tells you which genome-produced elements interact with which partners. The connectome is part of and maintains the cells and tissue that generate consciousness, which I see as a phenomenon of networks of central pattern generators touching base with and interacting with streams of incoming sensory information and carrying out actions based on the content of those streams.

Getting a basic understanding of neuroanatomy and the functions and relationships among parts of neuroanatomy is as important as the specific identity of the little tiny things that maintain their function and relationships.

The data involving what happens when something breaks is particularly useful in figuring out what it does.

https://en.m.wikipedia.org/wiki/Central_pattern_generator

Incoming sensory data (exteroception) from sensory portals.

https://en.m.wikipedia.org/wiki/Sense

…and from you (interoception).

https://en.m.wikipedia.org/wiki/Interoception

(Your sense of self has its own primary cortex in the insular cortex).

There isn’t one but we can say what it would have to look like given the architecture and what neurons/brain nuclei do. We’re at the stage where we can tease out the difference between a Social Behavior Network and a component termed a social decision making network.

https://www.ncbi.nlm.nih.gov/m/pubmed/29053770/

Since I have Tourette’s syndrome, that’s given me some insight into the details. I have my own problems with the language involving “disorder” and “pathology”, as being a human sensitive to social behavior has advantages as well as disadvantages; there are many kinds of humans in a developmental context.

Do you have an example of the generation of such a connectome, and of an attempt to predict behavior from it, so I can accurately picture this?

Did FTB’s formatting change? blockquote doesn’t seem to do what it used to when I preview.

Or maybe blockquote doesn’t show up in the preview?