I’m sure you’ve all been waiting to hear what Kim Kardashian has been up to. Apparently, she has landed a leading role on a new television series. Honestly, I didn’t need to read the review to know I have no interest in watching it, but I did learn something new: Kardashian is a moon landing denier! I shouldn’t be surprised, since she was married to Kanye West, but I’m supposed to keep track of loony conspiracy theories, and I missed that one.

Fortunately, NASA shut her down this time.

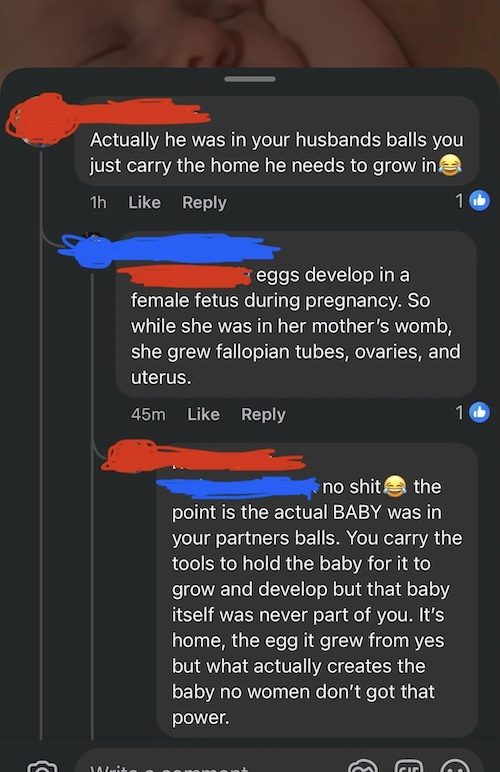

But it’s one thing to purchase a billionaire’s (breathable and stylish, hand to God!) product and another to buy into the skewed version of reality they’re promoting. We can laugh at the conspiracy-minded lunacy Kardashian touted on a recent episode of “The Kardashians,” but the fact that NASA had to publicly and officially refute what she said tells us plenty about the times in which we’re living. NASA does a lot more than plant flags on lunar surfaces. It undertakes vital scientific research that is at risk of being defunded under an administration more devoted to bathroom renovations than functional progress.

But I have something to add to the legend of Kim Kardashian. NASA may have rebuffed her, but guess who wants her to join his “research team”?

Is anyone surprised? She has negative research qualifications, but she is loaded with empty PR potential, which is all dear Avi wants. Sign her up!