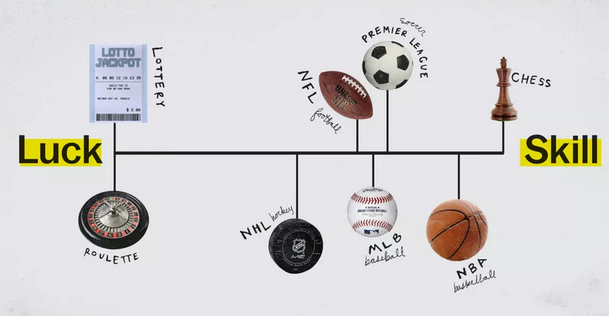

I find the concept of luck vs skill in games to be fascinating, because the common intuitions are just so wrong. The common intuition is that some games involve more luck, and some games involve more skill. On the extreme end of luck, we have the lottery; on the extreme end of skill, we have chess. The orthodox view was best expressed by a Vox article/video, which included the following image:

The Vox image also shows several sports, and the position of each sport is based on the statistical analysis of Michael Mauboussin. The details of the analysis aren’t explicitly described, but it’s basically analyzing the national tournaments for each sport, and estimating how much of the variance in outcome is explained by luck or by skill.

Mauboussin did not analyze chess. Vox added chess in themselves, pulling a claim out of their ass. Without doing any analysis, I can guarantee that if you applied the same statistical analysis to chess, you would not find that chess was 100% skill. The analysis will only show that a game is pure skill if the same people consistently win all their games. I quickly checked the US Chess Championship winners, and while some names show up repeatedly, it is not 100% consistent, and therefore would not be deemed a pure skill game by this analysis.

So what gives? Is the statistical analysis bogus, or is the claim that chess is 100% skill bogus? Trick question. Both of them are bogus.

Proposition 1: Explanation of variance is a poor way to model skill vs luck.

Let’s step back and think about this philosophically. Skill and luck are concepts that we have prior to any knowledge of statistics. When people use statistics to create measures of luck and skill, that’s just a mathematical model for a preexisting idea. Sometimes a model can show us something surprising and counterintuitive. But sometimes a model is just failing to accurately represent the thing it’s supposed to represent.

One of the surprising conclusions of Mauboussin’s statistical model is that luck and skill are not properties of the game itself, but properties of the tournament and its players.[1] Suppose I created a tournament where I play basketball against one of the professionals. I would just lose every single game. The analysis would show that the outcomes are 100% explained by the skill difference between us. And now suppose we created a tournament where two equally skilled players play games against each other. By “equally skilled”, I mean that in the matchup each one wins 50% of the time. The statistical analysis would show that the outcomes are 100% explained by chance. After all, a difference in skill cannot explain anything when the difference in skill is zero.
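To make the two tournaments concrete, here is a minimal sketch of a variance decomposition. Since the details of Mauboussin’s analysis aren’t spelled out, this is my assumption about its general shape, not his actual method: each player gets a fixed “true” win probability, their record is treated as a binomial draw around it, and the hypothetical function `luck_share` reports what fraction of the variance in observed win rates comes from the draw rather than from differences between players.

```python
from statistics import pvariance

def luck_share(true_win_probs, games_per_player):
    """Fraction of variance in observed win rates attributable to luck.

    Assumption of this sketch: each record is an independent binomial
    draw around the player's fixed true win probability.
    """
    # Variance between players' true win probabilities: the "skill" part.
    skill_var = pvariance(true_win_probs)
    # Average binomial variance of a single record: the "luck" part.
    luck_var = sum(
        p * (1 - p) / games_per_player for p in true_win_probs
    ) / len(true_win_probs)
    total = skill_var + luck_var
    if total == 0:
        raise ValueError("no variance to explain")
    return luck_var / total

# Me against a professional: I always lose, they always win.
mismatch = luck_share([0.0, 1.0], games_per_player=20)
# Two equally matched players.
even = luck_share([0.5, 0.5], games_per_player=20)
print(mismatch, even)
```

The same game yields a luck share of 0 in the first tournament and 1 in the second, purely because the player pool changed.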

Which brings me to the second surprising conclusion: a game between two equally skilled players is a game without skill?

We have two options: bite the bullet and accept these counterintuitive conclusions, or else admit that the model is inaccurate or incomplete.

…Or we can take Vox’s route, uncritically accepting the model, while contradicting ourselves by describing chess as a game of pure skill. Within Mauboussin’s model, it is not merely wrong to claim that chess (detached from any tournament) is pure skill, it is nonsensical.

Proposition 2: Luck and skill are distinct properties.

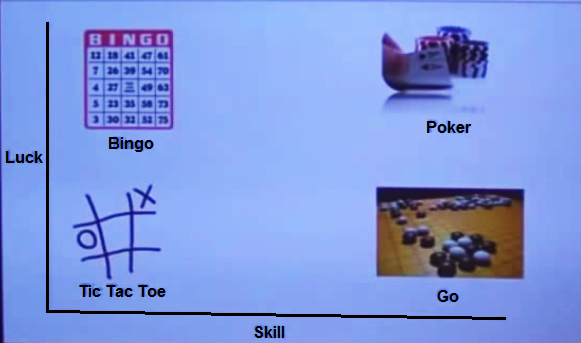

I earnestly believe that one of the world’s premier philosophers on luck vs skill in games also happens to be the creator of Magic: The Gathering. Richard Garfield has talked about luck vs skill in many places, most notably an article in 1997 (!) and a talk in 2012 (and here’s another talk with better audio). The main point he makes is that luck and skill are two distinct axes that can be used to describe a game. You can distinguish between high luck high skill games from low luck low skill games.

Screenshot from Garfield’s talk. I overlaid text and lines to make it more readable.

Garfield describes a thought-experiment-game called Rando-chess. In Rando-chess, you play a game of chess, and then you roll a die. If you roll a 1, then the person who lost the chess game wins, otherwise the winner of the chess game wins. This obviously involves more luck than chess, but does it involve less skill? The strategy of Rando-chess is identical to that of chess, and it takes an identical amount of time and effort to master. One’s skill may have less impact on who wins or loses, but it seems inaccurate to say that the game involves less skill.
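A quick sketch of how much the die compresses the impact of skill. The function name `rando_chess_win_prob` is mine, and I’m ignoring draws for simplicity, which Garfield’s description doesn’t address.

```python
def rando_chess_win_prob(chess_win_prob, flip_prob=1 / 6):
    """Chance of winning Rando-chess, given the chance of winning the chess game.

    Assumption: the chess game never ends in a draw.
    """
    # You win if you won the chess game and the die does not flip the result,
    # or if you lost the chess game and the die does flip it.
    return chess_win_prob * (1 - flip_prob) + (1 - chess_win_prob) * flip_prob

for p in (0.5, 0.75, 0.9, 1.0):
    print(f"chess {p:.2f} -> Rando-chess {rando_chess_win_prob(p):.3f}")
```

Even a player who never loses at chess wins only five Rando-chess games in six, yet nothing about how they should play has changed.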

In fact, it may be best to detach the concept of skill from the concept of winning entirely. Take a game like Minecraft, which has no end point and no winners, but still makes use of skills. Or take a non-game like mathematics, which certainly involves skills. Skill is an independent entity, and winning is a reward you get in the context of some kinds of games.

Proposition 3: Chess involves luck.

Garfield describes another thought-experiment-game, where you have to choose between two identical doors, and one of them is the winning door. Obviously this is a game of luck. But what if there’s a complex puzzle that tells you the correct door, and the puzzle is simply beyond your understanding? It’s still a game of luck, because a puzzle beyond your understanding simply doesn’t help you.

Chess may be deterministic, but essentially it’s a puzzle beyond all understanding. And you don’t ignore the puzzle, but neither can you fully solve it, not even with a computer. The best you can do is assess which moves are likely to be winning. But you simply don’t know for sure, so it’s still partway like the game where you have to select the correct door.

Random number generation (e.g. with dice or cards or computers) is not the only form of luck. Luck is defined relative to your personal knowledge. If a computer has a deterministic method for choosing the next random number, but you don’t understand the method, then it’s still random from your perspective. If every chess position has deterministic winning and losing moves, but you can’t distinguish between them with certainty, then it’s still random from your perspective.
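As an illustration of the computer case, here is a minimal sketch of a deterministic “die”. `TinyLCG` is a hypothetical class written for this post; it is a linear congruential generator using the widely published Numerical Recipes constants. Anyone who knows the method and the seed can predict every roll, and anyone who doesn’t is effectively facing luck.

```python
class TinyLCG:
    """A linear congruential generator: fully deterministic, hard to predict by eye."""

    def __init__(self, seed):
        self.state = seed

    def roll_die(self):
        # Advance the state with a fixed multiply-and-add rule modulo 2**32.
        self.state = (1664525 * self.state + 1013904223) % 2**32
        # Use the high bits, which are better mixed than the low bits.
        return (self.state >> 16) % 6 + 1

a = TinyLCG(seed=2024)
b = TinyLCG(seed=2024)
rolls_a = [a.roll_die() for _ in range(10)]
rolls_b = [b.roll_die() for _ in range(10)]
print(rolls_a)
print(rolls_a == rolls_b)  # same seed, same "random" rolls
```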

Proposition 4: The introduction of luck often enhances the level of skill, rather than diminishing it.

I believe that generally speaking, when you introduce an element of luck into a game, that makes it harder to master. I have three arguments:

1. Rather than considering the single outcome for each possible move, you have to consider the collection of possible outcomes, and the likelihood of each.

2. Improving at a game often involves assessing whether you played well or poorly. If luck is involved, then it’s harder to tell when you played well, because the outcome might have been the result of good plays, or it might have been the result of good luck.

3. The more luck in a game, the more likely players are to find themselves in a variety of game states. And so players must master the game from a wide range of positions, both winning and losing.

Of course, these arguments don’t apply to every situation (try applying them to Rando-chess).

Proposition 5: A better model could produce better insight about skill in games.

Although I’ve argued that “skill” is a distinct concept from “the degree to which a game rewards skill”, both of these are still useful concepts that we might like to model. I think a good starting point is something like the Elo rating system created for chess. In this model, each player has a certain mean performance, and variance in performance. Performance can’t be measured directly, but if one player beats another, then we know that that player had a higher performance in that particular game.

The mean performance might be considered the “skill factor” of the game, and the variance in performance is the “luck factor”. But the Elo rating system can’t provide an absolute measure of the luck factor, because “performance” is scaled in such a way that the variance in performance is constant.[2] At best, the model describes the ratio of skill to luck.

Now, if you have a tournament, you’ll have a certain variance in skill among the players, but it should be clear that this is a property of the players, and not a property of the game. If we want to describe the properties of the game, I think we need a function that estimates the mean performance of someone at a certain skill level (where skill is a combination of talent and practice). I’ll call this function M(skill).

There are two general properties that I might use to describe M(skill). The first property is the derivative, which describes how much you’re rewarded for a certain increase in skill, where the unit of reward is increased probability of victory. Note that M(skill) might have a different derivative at different levels of skill. The second property is how much you can increase your skill before M(skill) levels off. This is basically the depth of the game, how much time you can dedicate to it before the rewards dry up.
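Here is a toy sketch of those two properties. Everything specific in it is an assumption for illustration: the saturating shape of `mean_performance`, its `ceiling` and `scale` parameters, the unit of skill, and the cutoff used to decide when the curve has “leveled off”. I also measure reward in performance points rather than win probability, which in an Elo-style model is a simplification, since only gaps in performance translate into win probability.

```python
import math

def mean_performance(skill, ceiling=2800.0, scale=10.0):
    """Hypothetical M(skill): rises quickly at first, then levels off near `ceiling`."""
    return ceiling * (1.0 - math.exp(-skill / scale))

def reward_rate(m, skill, step=1e-4):
    """Central-difference estimate of the derivative of m at `skill`."""
    return (m(skill + step) - m(skill - step)) / (2 * step)

def depth(m, threshold=1.0, step=0.5, max_skill=1000.0):
    """Smallest skill (on a grid) where one more unit of skill adds less than `threshold`."""
    skill = 0.0
    while skill < max_skill:
        if reward_rate(m, skill) < threshold:
            return skill
        skill += step
    return max_skill

for s in (0, 10, 30, 60):
    print(s, round(mean_performance(s)), round(reward_rate(mean_performance, s), 2))
print("depth:", depth(mean_performance))
```

A deeper game, in this picture, is one where `depth` comes out larger: the derivative stays meaningfully above zero for longer.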

So this model provides a way to think about the “depth” of the game, and the degree to which the game rewards skill. What the model does not do is provide a way to measure how much skill is in the game. Is that because the model is incomplete, or is it because “How much skill is in the game?” is an incoherent question? I leave it for the reader to judge.

1. There’s an analogy to be made to a concept in evolutionary biology, known as heritability. Heritability is a statistical measure of how much variance in a biological trait is explained by variance in genes. Counterintuitively, heritability is not a property of the trait itself, but is a property of a specific population. In a hypothetical population where everyone is genetically identical, variance in genes would explain none of the variance in biological traits.

2. Given two players, the expected win rate of the first player is

1/(1+10^((M2-M1)/400))

where M1 is the mean performance of the first player and M2 is the mean performance of the second player. This implies that the standard deviation of performance per player per game is 223, if I did my math correctly.
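The 223 figure can be checked in a few lines. The sketch reads the formula in this footnote as the cumulative distribution of a logistic random variable, namely the gap between the two players’ performances in a single game, and assumes the two performances are independent with equal variance. That last assumption is mine, though it seems to be the one needed to arrive at 223.

```python
import math

# The Elo win-rate formula is a logistic CDF in the performance gap,
# with scale s = 400 / ln(10).
scale = 400 / math.log(10)

# A logistic distribution with scale s has standard deviation s * pi / sqrt(3).
sd_of_gap = scale * math.pi / math.sqrt(3)

# Assumption: the two performances are independent with equal variance,
# so each player contributes half the variance of the gap.
sd_per_player = sd_of_gap / math.sqrt(2)

print(round(sd_of_gap), round(sd_per_player))  # 315 223
```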

What if I were playing a game of chess and was experiencing intestinal distress due to some poorly-cooked breakfast? I might not play my best game. Is this luck?

I would consider that luck.

Yeah, chess definitely involves luck, although there’s “less” of it, compared to a game like hold ’em poker.

Another way it is noticeable is when the players prepare for a game. You can study your opponent’s games, trying to figure out where their strengths and weaknesses may be. (How do things go when they play the French defense? Or do they never play it? And so forth.) But even with tons of preparation, you can be surprised. And the point is to prepare a bunch of different lines; but of course, in any given game, you’ll only be picking one (quasi randomly) opening out of the lot.

They were probably preparing a lot too, and they might have looked at the same/similar lines as you. That’s kind of unfortunate — luck strikes again. If they happen to find (during prep, before the game, mind you), a tricky/confusing/complicated set of moves that you didn’t analyze, then you might get into trouble. But even when that happens, with some combination of skill and luck and who knows what, you might be able to recover.

It’s also pretty common that a player remembers their opening (or the exact move order) incorrectly. They may get into an opening that they haven’t looked at for years, so things are a bit hazy for them now. Or it can happen for a lot of other reasons … they may be nervous, tired, ate some of Marcus’ bad breakfast, etc.

It’s always tragic when you can see them realize their mistake just after making the move. I often wonder if they would’ve seen it, if they had just thought for a little bit longer. (Or did they really need to see the thing, instead of imagining it?) That’s about luck too, in a way — you may not really know how much is the right amount of time to spend on this or that move. The clock is ticking, so you may have to decide that searching longer for the perfect move doesn’t fit into your remaining time budget, so you make a practical decision to go with a move that just looks reasonable. That may come back to bite you, but hopefully it’s good enough. Good players know how to manage their time well, but as always, you can’t look far enough ahead to see how complicated it might become. Typically, that is…. but if the game has already simplified a lot, because lots of pieces are off the table, etc., then you can get a better sense of that. On the other hand, even king+pawn endings (for example) which look very simple can be hiding a lot of surprises.

Some people have claimed that the kind of preparation people engage in these days (with the help of a very powerful computer and chess engine, if you’re a top GM) takes some of the skill out of the game, because it’s just a matter of the memorization you’ve done in the days or months prior to a tournament/match. I don’t think that’s completely fair: you still have to understand the moves and be ready for anything at almost any time. What I would say is that, beyond luck and skill, there’s a third ingredient in the mix which is something like “work” (or call it what you like). If you’re devoting lots of time and effort to it, that can pay off very well. It’s not skilled labor, or not as skilled as other aspects of the game, but that doesn’t prevent it from affecting the outcome.

Didn’t Louis Pasteur nail this one over a century ago?

Everything contains a portion of luck. From my studies, I remember that the flu reduces one’s mental capacity even before the symptoms manifest themselves. So if you get the virus before a match, you will perform poorly and not know why.

Why speculate about this without taking AlphaZero into account?

Perhaps it just got very lucky, very quickly, at multiple gaming tasks.

Back in the day, in internet time, that was.

Charly,

Everything physical perhaps does (though it could be argued that’s due to quantum effects or that it’s just that there are too many degrees of freedom for other than local optima to be found).

Chess, however, is a conceptual, not a physical game. And it’s a game with complete and perfect information of its history and state for both players, no more and no less than tic-tac-toe, though the latter is so trivial that its solution is old now.

@John Morales, #6

And deprive commenters of the opportunity to bring it up for themselves? But seriously, I don’t know anything about AlphaZero.

A large skill difference between competitors can overwhelm the contribution of chance to the outcome. But that’s not really the same as saying there’s no chance involved. It’s just saying that there’s a difference in skill (insofar as an AI can be “skilled”), and the game rewards a difference in skill.

Siggy, it’s pretty amazing stuff. Worth a check.

I hear you, but what about my comment to (ostensibly) Charly?

Any opinion? I think it’s relevant.

@John Morales #9,

I would guess that in sports the uncertainty doesn’t come so much from quantum effects, but rather the inability of humans to precisely control their physical actions. I’d argue that the same goes for mental actions, flu or no flu.

You could consider a purely conceptual chess abstracted away from the mental activity required for analyzing it. Maybe you consider two deterministic chess AIs playing against each other. Or you consider two hypothetical perfect players. I think in these two regimes chance doesn’t enter into it at all. But I also think that doesn’t have much bearing on real chess.

I have a simpler example: blind chess. Conceptual enough for me, and surely real chess. 😉

The only physical aspect of the game is when it’s instantiated, and that is the computational substrate (and the communication channel, to be pedantic), and that’s not the game itself.

And all examples of “luck” hitherto, IMO, depend on those physical aspects.

So, if chess is the physical act of playing a game, then I suppose so.

If it’s a virtual game, then arguably so.

If it’s the abstraction itself (the initial state and the rules and the iterated moves), then I don’t think so.

—

BTW, I recently saw a video of Magnus playing blind chess against multiple opponents. Clearly, he had every board, every position, every history in his head.

(Historical examples abound)

@John Morales,

If you’re referring to blindfold chess, that hardly seems abstracted away from the mental activity required to analyze chess, more like abstracted towards it.

But you know, in the OP my original argument really had nothing to do with the uncertainties involved in mental activity. It’s more to do with our inability to see many moves ahead. The game state is a giant tree, and whether a position is winning or losing is determined by all the branches above it. But you can’t see all the branches because the number of branches exceeds the number of atoms in the universe or something. So you’re left with reasoning about what moves are likely winners or losers. This will be true even of AlphaZero.

Ta.

@John Morales, I fail to see how your answer to me is relevant to anything I have said. It seems to me like you read the first sentence only and either ignored or did not understand the rest.

John @7:

I’d love to see your argument!

Actually, at first blush it may not seem unreasonable. After all, relativity comes into play for GPS. The largest contribution there comes from the strength of the gravitational field at the Earth’s surface, and goes like a/R, where a is the Earth’s Schwarzschild radius and R is its radius. That number is on the order of 1e-9. Small, but with the accuracy required, the time difference between Earth and satellite clocks becomes significant.

For quantum effects on our macro scale, one could look at the parameter c/d, where c is the Compton wavelength of a macroscopic object (like a football, baseball, or a coin), and d is a macroscopic distance, say a millimetre. Give or take a couple of orders of magnitude, that gives c/d of about 1e-40. A bit handwavy, but I’m going to go out on a limb and say that quantum effects can be ignored in a game of Aussie rules football, or a coin toss.

Rob, were I to essay that, it would be breaching proposition 7, i.e. bullshitty.

But you got me.

—

Charly, sorry. Was riffing off you.

Hey, Siggy (and anyone else on the thread who’s interested).

If you’re at all keen on the philosophy of games, luck, chance, and all the rest, I’d like to recommend a book called The Grasshopper by Bernard Suits. It’s a prolonged philosophical investigation into the philosophical meaning of why we have games and what their purpose is. Also, it’s written in an engaging dialectic that makes it far more fun than most philosophy books. (The title character is the grasshopper of Aesop’s fable, who defends his life choices before his death via Socratic dialogue with his disciples.) It would also be fun to discuss it on your blog.

Indeed there is luck in chess: https://en.chessbase.com/post/chess-and-luck