In the last part of my series on the DNC hack, I mentioned that I watched a seminar hosted by CrowdStrike on how it was done. Some Google searching didn’t turn up much at first, but it did reveal other videos from CrowdStrike and other security firms. I’m still shaking my head at the view counts of some of these; shouldn’t reporters have swarmed them?

Ah well. If you’d like to see how these security companies viewed the DNC hack, here are some videos to check out.

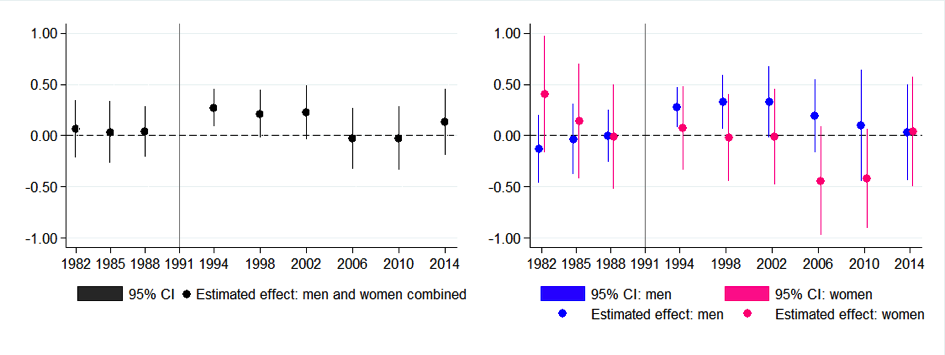
