
As a result of selection acting on information-behavior relationships, the human brain is predicted to be densely packed with programs that cause intricate relationships between information and behavior, including functionally specialized learning systems, domain-specialized rules of inference, default preferences that are adjusted by experience, complex decision rules, concepts that organize our experiences and databases of knowledge, and vast databases of acquired information stored in specialized memory systems—remembered episodes from our lives, encyclopedias of plant life and animal behavior, banks of information about other people’s proclivities and preferences, and so on. All of these programs and the databases they create can be called on in different combinations to elicit a dazzling variety of behavioral responses.[1]

“Program?” “Database?” What exactly do those mean? That might seem like a strange question to hear from a computer scientist, but my training makes me acutely aware of how flexible those terms can be.

Most contemporary programming uses an object-oriented metaphor. Concepts are translated into structures by treating them as “objects”; handling access to the network, for instance, could be the responsibility of a “Network” object. Underneath this object could be actions or “methods,” such as “listenForIncomingData(),” as well as data or “fields” like “lastPacketSeen.” Objects can call methods or access fields of other objects, though most of the time you can place restrictions on who can call or access certain things. All of this should be well documented, perhaps to the point of having an informal “contract” about how each method behaves and “unit tests” that validate that behavior. Isolate objects into “modules,” then fit them together like Lego blocks and you have a “program.”
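
To make that jargon concrete, here is a minimal sketch in Python (chosen only for readability; nothing here depends on a particular language). The `Network` class is hypothetical: its `listen_for_incoming_data()` method and `last_packet_seen` field are the names above rewritten in Python's snake_case, the method fakes network input rather than opening a socket, and the "contract" and "unit test" are my own illustrations of the idea.

```python
import unittest


class Network:
    """A hypothetical "Network" object, loosely following the example above."""

    def __init__(self):
        # A "field": data that belongs to this particular object.
        self.last_packet_seen = None
        # The leading underscore is Python's convention for "internal, don't touch";
        # unlike Java's `private`, it is a request rather than an enforced restriction.
        self._received = []

    def listen_for_incoming_data(self, packet):
        """A "method". Informal contract: store the packet, update
        last_packet_seen, and return how many packets have arrived so far.
        (A real version would read from a socket; this sketch is handed the packet.)"""
        self._received.append(packet)
        self.last_packet_seen = packet
        return len(self._received)


class NetworkTest(unittest.TestCase):
    """A "unit test" checking that the method honours its informal contract."""

    def test_tracks_latest_packet_and_count(self):
        net = Network()
        self.assertEqual(net.listen_for_incoming_data(b"hello"), 1)
        self.assertEqual(net.listen_for_incoming_data(b"world"), 2)
        self.assertEqual(net.last_packet_seen, b"world")


if __name__ == "__main__":
    # exit=False stops unittest from calling sys.exit() after the run.
    unittest.main(argv=["network_test"], exit=False)
```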

However, plenty of computer languages weren’t built around the object metaphor, like Lisp or Fortran, yet they let you write programs just fine. The object metaphor itself has also been heavily criticized. And there’s a reason why I keep using the word “metaphor”: most processors have no idea what an “object” is, so object-oriented languages have to abandon that metaphor once their code is translated into something the hardware can run. Behind the scenes, all that structure is collapsed down into pools of executable code and data with little to no organization.
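
Python offers a handy place to watch the metaphor dissolve, although one level above the processor rather than at it. In this sketch, a stripped-down version of the hypothetical “Network” object described earlier, the object's field turns out to live in an ordinary dictionary, and its method is a plain function that receives the object as its first argument.

```python
class Network:
    """Hypothetical, stripped-down "Network" object."""

    def __init__(self):
        self.last_packet_seen = None

    def listen_for_incoming_data(self, packet):
        self.last_packet_seen = packet


net = Network()

# The "field" is just an entry in an ordinary dictionary attached to the object.
print(net.__dict__)  # {'last_packet_seen': None}

# The "method" is just a function stored on the class; calling it through the
# object merely passes the object in as the first argument.
Network.listen_for_incoming_data(net, b"ping")
print(net.last_packet_seen)  # b'ping'


# A plain function working on a plain dictionary does the same job with no class at all.
def listen_for_incoming_data(state, packet):
    state["last_packet_seen"] = packet


plain_state = {"last_packet_seen": None}
listen_for_incoming_data(plain_state, b"ping")
print(plain_state == net.__dict__)  # True
```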

And “executable code” is just a synonym for “data.” Just-In-Time compilation depends on this: it generates and alters code at run time as if it were data, and in the extreme it can be used to turn data lookups into “hard-wired” code.[2] Field-Programmable Gate Arrays are chips whose circuitry you can reconfigure on the fly, and they don’t really have “instructions” at all. As we saw last time with Dr. Adrian Thompson’s work, evolutionary algorithms will gleefully exploit this to reach greater fitness.
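
JiTTree does this with GPU code for sparse volume data; the much smaller sketch below is my own illustration of the general idea, not the paper’s technique. A hypothetical lookup table is written out as Python source with its entries baked into `if` branches, and the built-in `compile()` and `exec()` turn that text, which is ordinary string data, into a callable function.

```python
# Hypothetical lookup table; the keys and labels are made up for illustration.
TABLE = {0: "empty", 1: "bone", 2: "tissue"}


def lookup(key):
    """Data-driven version: consult the table every time it is called."""
    return TABLE.get(key, "unknown")


def specialize(table):
    """Write Python source with the table's contents baked into if-branches,
    compile it, and return the new function plus its source text."""
    lines = ["def hardwired(key):"]
    for key, value in table.items():
        lines.append(f"    if key == {key!r}:")
        lines.append(f"        return {value!r}")
    lines.append("    return 'unknown'")
    source = "\n".join(lines)
    namespace = {}
    # The source string is plain data until compile() and exec() turn it into code.
    exec(compile(source, "<generated>", "exec"), namespace)
    return namespace["hardwired"], source


hardwired, source = specialize(TABLE)
print(source)
# The generated function never consults TABLE, yet agrees with lookup().
print(all(hardwired(k) == lookup(k) for k in range(-1, 5)))  # True
```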

So at the most basic level, a “program” is whatever code happens to be running at the time, and a “database” is a collection of information. The extra qualifications we add to those terms are just for our sanity’s sake; there is no reason a computing device has to include them.

Individual behavior is generated by this evolved computer, in response to information that it extracts from the internal and external environment (including the social environment). To understand an individual’s behavior, therefore, you need to know both the information that the person registered and the structure of the programs that generated his or her behavior.[1]

We can’t even understand highly structured programs; self-imposed guidelines such as the object metaphor do little to stop the complexity from growing beyond what a human mind can comprehend. The Linux kernel, the low-level core of the Linux operating system that is responsible for running programs, currently contains over twenty million lines of code from some 14,000 contributors. There’s no way a single person could comprehend it all, and the proof is the continual stream of bugs discovered in the code; if anyone could easily understand it, those bugs would never make it in. Bear in mind, too, that an operating system is responsible for providing an easy interface between the programs a computing device runs and the underlying hardware. If we truly are a collection of programs, we could very well require an operating system as well.

If we struggle to understand highly structured programs with good documentation, how can we understand the unstructured “programs” that evolutionary processes tend to generate, which come with no documentation at all?

But there’s another problem lurking here. Let’s pluck a specific EvoPsych theory out of the ether and look carefully at what parameters would be involved. Some researchers have proposed a universal fear module, for instance.

The theoretical structure that we end up with, the fear module, comprises four characteristics: selectivity with regard to input, automaticity, encapsulation, and a specialized neural circuitry (see Fodor, 1983). Each of these characteristics is assumed to be shaped by evolutionary contingencies. The selectivity results to a large extent from the evolutionary history of deadly threats that have plagued mammals. The automaticity is a consequence of the survival premium of rapid defense recruitment. Encapsulation reflects the need to rely on time-proven strategies rather than recently evolved cognitions to deal with rapidly emerging and potentially deadly threats. The underlying neural circuitry, of course, has been crystallized in evolution to give the module the characteristics that it has.[3]

EvoPsych places a lot of emphasis on preexisting neural hardware, so let’s define a “hard EP” that claims the inputs are entirely biological: predators eat mammals; mammals that survive go on to reproduce while the rest don’t; mammals with a built-in “fear module” that steers them away from predators would survive more often than those without one; and so once that “fear module” evolved, it would spread until it was fixed in the population. However, Tooby and Cosmides argue for two different parameters, biology and culture, so we’ll also propose a corresponding “soft EP.”
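
The last step of the “hard EP” story leans on a standard population-genetics result: a variant that gives even a small survival edge tends to spread until it is fixed. Here is a minimal sketch of that step, assuming deterministic haploid selection with no drift, mutation, or migration, where carriers have relative fitness 1 + s. The starting frequency `p0 = 0.01` and advantage `s = 0.05` are arbitrary illustrative values, not estimates for any real fear module.

```python
def allele_frequencies(p0, s, generations):
    """Deterministic haploid selection (an assumed, textbook model): carriers of
    the variant have relative fitness 1 + s, everyone else has fitness 1.
    Returns the variant's frequency at the start and after each generation."""
    freqs = [p0]
    p = p0
    for _ in range(generations):
        mean_fitness = p * (1 + s) + (1 - p) * 1.0
        # Each type's share of the next generation is proportional to its fitness.
        p = p * (1 + s) / mean_fitness
        freqs.append(p)
    return freqs


# Hypothetical parameters: 1% of the population starts with the variant,
# which gives a 5% reproductive advantage.
freqs = allele_frequencies(p0=0.01, s=0.05, generations=500)
for gen in (0, 100, 200, 300, 500):
    print(gen, round(freqs[gen], 4))
```

Under these assumptions the frequency climbs steadily toward 1; real populations add random drift, which can eliminate a rare beneficial variant before it catches on.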

So which of these views is more in line with reality? Let’s look at some recent research.

A primary aim of the present experiments was to investigate whether threat stimuli that are evolutionarily significant (snakes) are more likely to capture attention than threat stimuli that are relevant in only an ontogenetic sense (guns). Thus, we compared response latencies for snakes, guns, and neutral items in a visual search task. When our experiments were completed we became aware of two other recent studies that also contrasted threat-related stimuli that differed in terms of phylogenetic history (Blanchette, 2006; Brosch & Sharma, 2005). In both cases, it was found that the threat superiority effect was equivalent for phylogenetic (e.g., snakes, spiders) and for ontogenetic (e.g., guns, syringes) stimuli, which does not support the fear module theory.[…]

The present results demonstrate that the efficient detection of threat was not restricted to phylogenetic stimuli in a visual search task. Although participants rated ontogenetic stimuli (guns) as being comparable in threat value to phylogenetic stimuli (snakes), it was not the case that the snake stimuli were detected faster than guns, as would be expected by the hypothesis that an evolved fear module can be preferentially accessed by phylogenetic stimuli (Öhman, 1993; Öhman & Mineka, 2001, 2003). These results are consistent with earlier reports that phylogenetic stimuli do not have any advantage over ontogenetic stimuli in visual search tasks (Blanchette, 2006; Brosch & Sharma, 2005). Taken together, the general pattern of results is inconsistent with a strong view that an evolved fear module is triggered primarily by threat existing at the time of mammalian evolution. … [4]

That rules out “hard EP,” but-

… Instead it seems that, if a fear module does indeed exist, it may be flexible and can be triggered by threat-related stimuli of both ancient and recent origin. Although the evolved fear module hypothesis does not predict this finding, Öhman & Mineka (2001) did acknowledge that ontogenetic stimuli can also trigger the fear module. Using a computational model of fear conditioning (Armony & LeDoux, 2000), they argued that biologically “prepared” stimuli may gain access to a fear module by means of stronger preexisting connections between units representing features of certain stimulus–outcome combinations.[4]

-is entirely compatible with “soft EP.” Hang on a second, though: the “blank slate” approach also invokes the same two parameters, biology and culture. The difference is primarily one of emphasis; the “blank slate” argues for a thin layer of biological positive or negative reinforcement, and states that given enough time and input, complex behavior will result from it, while “soft EP” argues…

… it doesn’t really argue, come to think of it. EvoPsych researchers can’t agree on what is and isn’t a “mind module,” so they have no means of drawing a boundary of responsibility between biology and culture. That means they can place it anywhere, even in places that are entirely compatible with a “blank slate.”

When presented schematically, the organization of the processing streams seems to conform closely to models of information processing through sequential modules – information about wavelength, orientation, and spatial frequency is processed in the interblob areas of V1, from which the interstripe areas of V2 compute disparity and subjective contours, from which in turn V4 computes non-Cartesian patterns, and areas in inferotemporal cortex identify objects (van Essen & Gallant, 1994). […]

As I noted earlier in this paper, most advocates of narrow evolutionary psychology [such as Tooby and Cosmides] employ a notion of module that corresponds to overall abilities individual exhibit. The claim is that these are the kinds of entities which evolution can promote. But I have been arguing that the sorts of modules neuroscientific evidence points to are at a much smaller grain size (one corresponding to information processing operations) and that these procedures are likely to be far more integrated through forward, backward, and collateral processing than those envisioned by these psychologists. Does the repudiation of these kinds of modules mean the demise of an evolutionary account of cognition? By no means, although it may spell the doom of narrow evolutionary psychology as currently practiced.[5]

Nor are Tooby and Cosmides even the worst offenders here; there’s a trend within EvoPsych to add another parameter into the mix.

In contrast to hip size, to date, there have been few suggestions about the adaptive value of a small waist, other than indicating that a woman is not currently pregnant. Any case where preferences lie at or beyond the extremes of the population distribution should draw our attention (Staddon, 1975). Going forward, it will be important to more fully explicate the selection pressures that have driven evolutionary changes in female body shape and male preferences for it and to more fully explore the extent to which such preferences are universal or vary with features of the local ecology.[6]

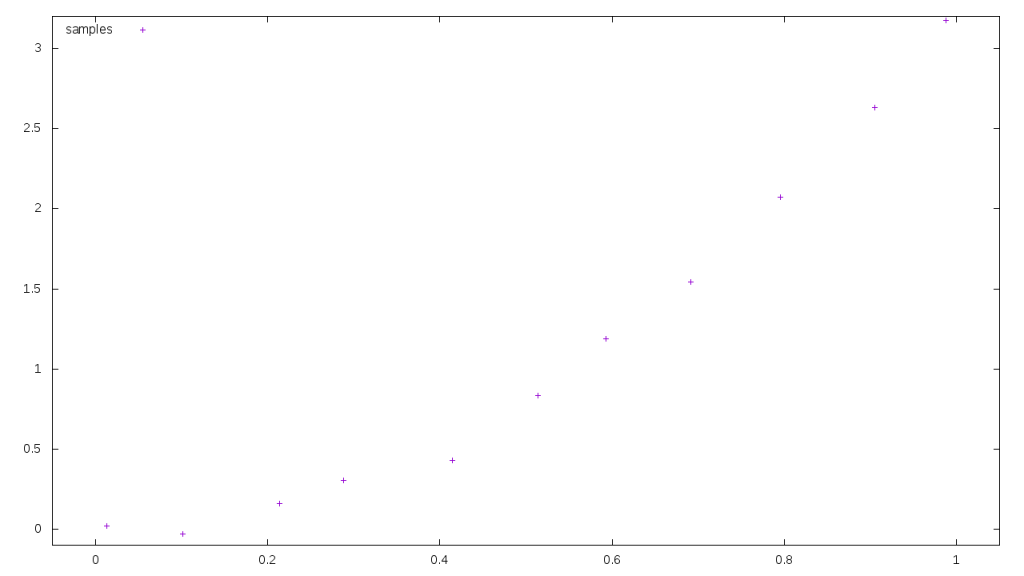

So the EvoPsych model has three basic parameters to choose from: universal biology, local variation in biology due to ecological variance, and culture. All of these are ill-defined, and can be stretched or warped in any way to confirm that model. There’s a term for this: overfitting. Consider this graph, a sample of points along a curve:

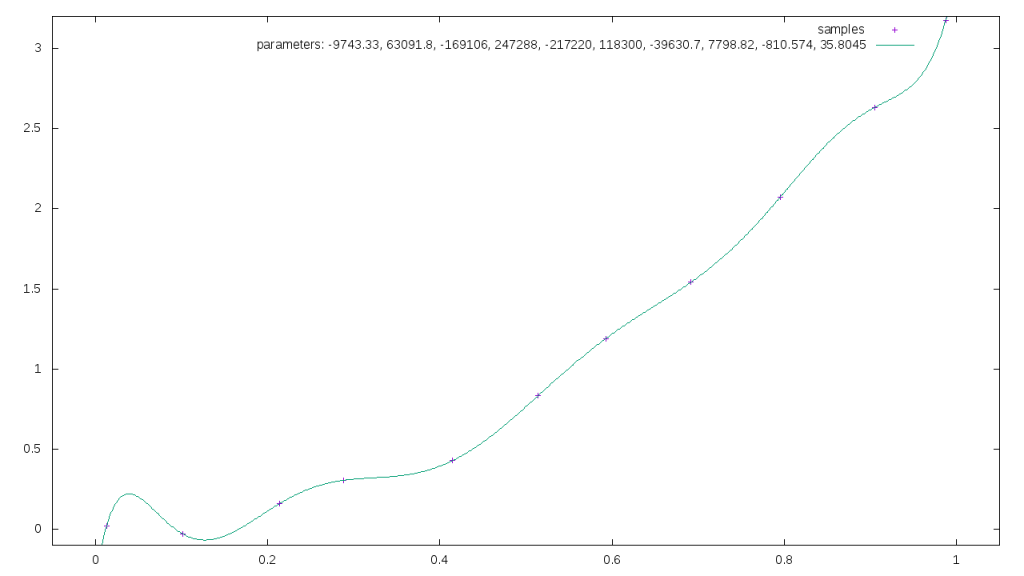

We can perfectly match those sample points if we fit them with a polynomial that has eleven parameters.

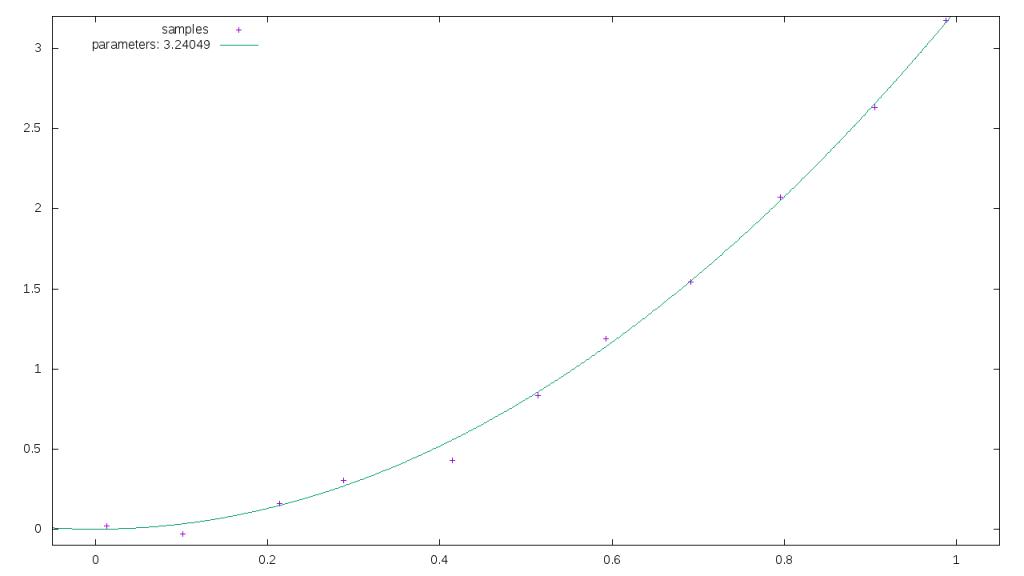

If we were to try a simple quadratic with a single parameter instead, the fit wouldn’t be as good.

And yet the original curve used to generate those samples was almost exactly the same as the one-parameter version; I just injected a little randomness to make it more realistic. Adding extra parameters to the model made it look like we’d achieved a better fit, but in reality we fixated on noise and variation, leading us away from the truth. This is a big problem in neural networks and deep learning, where there’s a ridiculous number of parameters to tune.
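
The code behind those graphs isn’t included here, so what follows is a stand-in sketch rather than a reconstruction. It assumes the true curve is y = x², sampled at eleven evenly spaced points on [-1, 1], and, to keep the result deterministic, it replaces random noise with a fixed ±0.1 zigzag. Lagrange interpolation gives the unique eleven-coefficient (degree-10) polynomial through every sample, while the one-parameter model y = a·x² is fitted by least squares.

```python
# Assumptions (illustrative only): true curve y = x**2, 11 evenly spaced samples
# on [-1, 1], and a fixed +/-0.1 zigzag standing in for random noise.
def true_curve(x):
    return x * x


xs = [-1.0 + 0.2 * i for i in range(11)]
noise = [0.1 * (-1) ** i for i in range(11)]
ys = [true_curve(x) + e for x, e in zip(xs, noise)]


def lagrange(x, nodes, values):
    """Evaluate the unique degree-10 polynomial (11 coefficients) through every sample."""
    total = 0.0
    for j, (xj, yj) in enumerate(zip(nodes, values)):
        basis = 1.0
        for m, xm in enumerate(nodes):
            if m != j:
                basis *= (x - xm) / (xj - xm)
        total += yj * basis
    return total


# One-parameter model y = a * x**2. Minimizing sum((y - a*x^2)^2) over a
# gives a = sum(x^2 * y) / sum(x^4).
a_hat = sum(x * x * y for x, y in zip(xs, ys)) / sum(x ** 4 for x in xs)


def one_param(x):
    return a_hat * x * x


def poly(x):
    return lagrange(x, xs, ys)


def mse(model, points, targets):
    return sum((model(x) - t) ** 2 for x, t in zip(points, targets)) / len(points)


# Error on the (noisy) training samples themselves.
train_poly = mse(poly, xs, ys)
train_one = mse(one_param, xs, ys)

# Error against the noise-free curve at points halfway between the samples.
held_out = [-0.9 + 0.2 * i for i in range(10)]
truth = [true_curve(x) for x in held_out]
test_poly = mse(poly, held_out, truth)
test_one = mse(one_param, held_out, truth)

print(f"training error: 11 params {train_poly:.4f}, 1 param {train_one:.4f}")
print(f"held-out error: 11 params {test_poly:.4f}, 1 param {test_one:.4f}")
```

On the samples themselves the eleven-parameter polynomial scores a perfect zero while the one-parameter model does not, but between the samples, judged against the noise-free curve, the polynomial does far worse: it swings wildly near the ends of the interval while chasing the zigzag, which is overfitting in miniature.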

EvoPsych is falling into the same trap, and as a result it can make the same predictions the “blank slate” model does. If we are blank slates, for instance, you’d predict that we’d start off with no inherent fear of snakes, but that we’d respond to social conditioning and develop our fear based on that.

Gerull and Rapee (2002) showed that toddlers’ (aged 15-20 months) fear expression and their avoidance of fear-relevant stimuli (a rubber snake or a rubber spider) were greater after witnessing their mother displaying a negative (fearful/disgusted) facial expression. However, the mechanism of vicarious learning is unclear because a no-modeling control condition was not used. Field et al. (2001) showed 7-9 years old children’s self-reported fear beliefs increased, but non-significantly, from before to after watching a video in which an adult female acted fearful/avoidant with a novel toy monster. This methodology addressed many of the criticisms directed at past human vicarious fear learning research: it avoids the memory issues in the retrospective literature; it uses non-clinical samples to inform us about the development of fears in normal children; children are not forced to ascribe an existing fear to a particular onset pathway pre-determined by theoretical assumptions; the manipulation of vicarious learning experiences allows causal inferences about the mechanisms underlying any observed changes in emotional response systems; and the use of animals that children had not encountered eliminates the influence of previous experience on learning. It has also been used to show that verbal threat information has a highly significant effect on fear beliefs about and avoidance of novel animals (Field & Lawson, 2003; Field, 2006a), creates attentional bias (Field, 2006a,b), and interacts with temperament (Field, 2006a). [7]

This is also compatible with EvoPsych, though. According to it, variation in local ecological conditions would have pressured our fear “mind module” to stay flexible, and so it would take input from cultural factors to function properly. It’s no wonder EvoPsych researchers tend to be so confident they’re correct: they’re able to fit every data point into their model perfectly.

And if your model is perfect, it’s probably wrong.

[1] Tooby, John, and Leda Cosmides. “Conceptual Foundations of Evolutionary Psychology.” In The Handbook of Evolutionary Psychology, 5–67. Wiley, 2005.

[2] Labschütz, M., S. Bruckner, M.E. Gröller, M. Hadwiger, and P. Rautek. “JiTTree: A Just-in-Time Compiled Sparse GPU Volume Data Structure.” IEEE Transactions on Visualization and Computer Graphics 22, no. 1 (January 2016): 1025–34. doi:10.1109/TVCG.2015.2467331.

[3] Öhman, Arne, and Susan Mineka. “Fears, Phobias, and Preparedness: Toward an Evolved Module of Fear and Fear Learning.” Psychological Review 108, no. 3 (2001): 483–522. doi:10.1037//0033-295X.108.3.483.

[4] Fox, Elaine, Laura Griggs, and Elias Mouchlianitis. “The Detection of Fear-Relevant Stimuli: Are Guns Noticed as Quickly as Snakes?” Emotion (Washington, D.C.) 7, no. 4 (November 2007): 691–96. doi:10.1037/1528-3542.7.4.691.

[5] Bechtel, William. “Modules, Brain Parts, and Evolutionary Psychology.” In Evolutionary Psychology, 211–227. Springer, 2003. http://link.springer.com/chapter/10.1007/978-1-4615-0267-8_10.

[6] Lassek, William D., and Steven J. C. Gaulin. “What Makes Jessica Rabbit Sexy? Contrasting Roles of Waist and Hip Size.” Evolutionary Psychology 14, no. 2 (June 1, 2016): 1474704916643459. doi:10.1177/1474704916643459.

[7] Askew, Chris, and Andy P. Field. “Vicarious Learning and the Development of Fears in Childhood.” Behaviour Research and Therapy 45, no. 11 (2007): 2616–27.