Except we don’t know what random consequences that would have. After all, Letter from Birmingham Jail and Mein Kampf were both produced by prisoners. I think that’s also the fatal flaw in long-termist thinking: you can’t plan as if every action has a predictable consequence. It is extreme hubris to think you can derive the future from pure logical thinking.

But that’s what long-termists do. If you don’t know what “long-termism” is, Phil Torres explains it here.

In brief, the longtermists claim that if humanity can survive the next few centuries and successfully colonize outer space, the number of people who could exist in the future is absolutely enormous. According to the “father of Longtermism,” Nick Bostrom, there could be something like 10^58 human beings in the future, although most of them would be living “happy lives” inside vast computer simulations powered by nanotechnological systems designed to capture all or most of the energy output of stars. (Why Bostrom feels confident that all these people would be “happy” in their simulated lives is not clear. Maybe they would take digital Prozac or something?) Other longtermists, such as Hilary Greaves and Will MacAskill, calculate that there could be 10^45 happy people in computer simulations within our Milky Way galaxy alone. That’s a whole lot of people, and longtermists think you should be very impressed.

But here’s the point these people are making, in terms of present-day social policy: Let’s say you can do something today that positively affects just 0.000000000000000000000000000000000000000000001% of the 10^58 people who will be “living” at some point in the distant future. That means, mathematically, that you’d affect 10 trillion people. Now consider that there are roughly 8 billion people on the planet today. So the question is: If you want to do “the most good,” should you focus on helping people who are alive right now or these vast numbers of possible people living in computer simulations in the far future? The answer is, of course, that you should focus on these far-future digital beings.

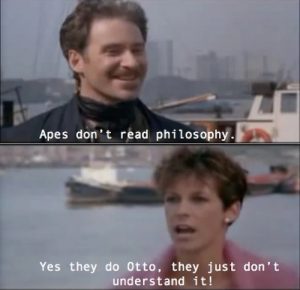

Long-termism is, basically, philosophy for over-confident idiots. Otto would love it, if you know your A Fish Called Wanda memes. It takes a certain kind of egotistical certainty that you personally know all the very best choices which will shape all of the future, and that you know exactly how the future is going to be.

It’s a good article. Only one note struck me as jarringly false.

Nonetheless, Elon Musk sees himself as a leading philanthropist. “SpaceX, Tesla, Neuralink, The Boring Company are philanthropy,” he insists. “If you say philanthropy is love of humanity, they are philanthropy.” How so?

The only answer that makes sense comes from a worldview that I have elsewhere described as “one of the most influential ideologies that few people outside of elite universities and Silicon Valley have ever heard about.” I am referring to longtermism. This originated in Silicon Valley and at the elite British universities of Oxford and Cambridge, and has a large following within the so-called LessWrong or Rationalist community, whose most high-profile member is Peter Thiel, the billionaire entrepreneur and Trump supporter.

That’s not the only answer that makes sense. Another, simpler answer that comes from an understanding that proponents of long-termism are naive twits is that Musk is a narcissistic moron who operates on the basis of selfish whims, and that idea is better supported by the evidence of his behavior. It’s looking at it through the wrong lens. It’s not that LessWrong promotes an influential, principled philosophy, it’s that unprincipled, arrogant dopes find it a nice post hoc rationalization for what they choose to do.

Most important: without a functioning biosphere we are fucked, so you should factor in the value of all the “biosphere services” that might get disrupted by your activities.

The Lake Victoria fish ate the mosquito larvae. Then the Nile perch was introduced and drove local fish into extinction. Now there are literally clouds of new mosquitos rising from the lake.

But the idiots got bigger fish.

Why Bostrom feels confident that all these people would be “happy” in their simulated lives is not clear. Maybe they would take digital Prozac or something?

It would take a lot of processing power, by modern standards, to simulate a personality within a computer. But once that personality focuses on its simulated pleasure center, a lot of the resources dedicated to other functions become extraneous, going to virtual memory, then archive, then erasure. The more pleasure the personality experiences, the more can be trimmed away, until you end up with just a continuous single circuit – which never even notices when it’s turned off.

That we can ever simulate a human mind in a computer with a simulated environment is completely unknown right now.

About all we can say is that it is far in the future, way beyond the lifespans of people living today.

We can’t make decisions now about possibilities in the far future that may or may not ever even be possible.

It is equivalent to what the fundie xians do. Based on a bible book, Revelation, which is mostly gibberish to start with, they keep claiming the earth will end Any Day Now and jesus will come back, kill 7.9 billion people, and destroy the earth. They’ve been saying this for 2,000 years and it has always been wrong. In practice, they don’t act like they even believe it any more.

Other famous works which were, broadly speaking, written in prison include The Travels of Marco Polo and The 120 Days of Sodom. According to 30 Literary Works Written in Prison, other notable examples include Le Morte d’Arthur (“The Death of [king] Arthur”), The Pilgrim’s Progress, Don Quixote, The Prince (Machiavelli), Civil Disobedience and Other Writings, and Conversations with Myself (Nelson Mandela). That list surprised me; as one example, I was unaware that Le Morte d’Arthur’s (probable?) author was imprisoned when it was researched(?) and written.

This assumes a utilitarian basis for ethics, and there are already simpler cases that put that in doubt (e.g. “life boat” scenarios where you may need to “sacrifice” individuals against their will for overall good).

But even if it is hard to escape utilitarian arguments, at the very least they have to apply to real individuals and not abstractions. There’s no clear concept of happiness for these future individuals, who might actually be miserable for reasons we fail to predict. For that matter, it’s highly speculative that this scenario is even technologically possible (though I think that may be among the least problematic bit).

Another more concrete objection I can think of is that it looks like a retroactive justification for the genocide of Native Americans. They weren’t “using the land efficiently.” Limit land to the Great Plains of the US to keep the argument tight. So we wiped them out, mechanized agriculture in the plains, and used the improved crop yield to feed all of today’s happy middle class Americans (yeah, well… still working on this part of the argument). The thing is, the US really did use this argument a lot in the past, and you still hear it, but do you really buy it on ethical grounds?

On ethical grounds, I would go back to a much simpler argument: the Native Americans had a long historical right to that land and we stole it from them. Full stop. I mean, there are plenty of people doing stuff with their land that looks stupid or inefficient to me, but usually it is the property argument rather than the utilitarian argument that wins out over my attempt to set them straight.

Finally, this was presented more imaginatively in Orson Scott Card’s increasingly bizarre sequels to Ender’s Game. I mean, it doesn’t matter how many sentient beings you wipe out if you can just create a lot more, right? That makes up for everything according to this warped view of ethics.

I would ask: does any amount of future happiness undo the suffering experienced by a single, conscious individual? They are not likely to appreciate the utilitarian angle at all.

To question the premise further, I don’t want to do the most good, which for all I know, could be accomplished by putting a bullet in my head right now. Am I part of the problem or part of the solution? And the people who depend on me, how about them?

I have no idea, and I don’t think that way. What I want to do is use the finite lifespan accorded me to be true to my “vocation” (echoing back to my Catholic upbringing). There are always choices to make in life, but ultimately, a question like “What should I be doing right now?” has to be determined personally. My only real desire is to focus on doing the things I care about, doing them well, and minimizing the harm that comes to others as a result.

I don’t think I understand the premise. Are these future humans actual living beings with bodies encapsulated in some sort of stasis while they experience a happy life in the Matrix, or are they virtual AI humans in computers? Because I don’t see anything here about supporting the living bodies of that many people, even dispersed across the galaxy. What will they consume, food/nutrients-wise? Who/what will do the labor of maintaining these bodies while their minds are in the Matrix? How will they reproduce?

I don’t get it.

Triple comment, but I can’t resist adding…

Peter Thiel makes Elon Musk look like Mahatma Gandhi. Or if that’s going too far, at least I can imagine that somewhere in his arrested 12-year-old mind Musk may sincerely believe he is doing good for humanity. As he once wrote: “This game make good use of sprites and animation, and in this sense makes the listing worth reading.” That’s Lil’ Elon for you, always trying to help out.

Thiel is simply a narcissist through and through who is willing to use his personal wealth to destroy anyone who crosses him. The idea that the “greater good” even occurs to him is laughable.

My favorite running gag from “A Fish Called Wanda:”

If long-termists were jailed, what works would they write?

Mein Money!

Not Fear, Not Loathing, It’s Me

La la la I Me too!!

Mein, all mine!!!

Fuck off [insert name of long-termist here]

Long-termism sounds like a slightly less-paranoid (and perhaps slightly less self-serving) version of Roko’s basilisk.

A few thoughts come to mind: stupid, bollocks, poppycock, bullshit…

@4- blf

It would be a bit of a stretch to say Machiavelli’s The Prince was written in prison. Your source says: “Viewed as an enemy of the state by the Medicis, Machiavelli was accused of conspiracy, arrested, tortured, and imprisoned. After his release, he was banished from Florence, and it was during his exile that he wrote ‘The Prince.’” While in prison he was enjoying the strappado too much to write.

The only long term planning worth anything is planning to deal with the known and urgent threats, and fuck the stock market. Good luck getting rich once Wall Street is partially submerged.

.

If you read SF by William Gibson you may recall the “Jackpot” in the next 20 years; the disaster capitalism that transfers the remaining wealth to the 0.1 % as the world population is undergoing a catastrophic reduction from cascading ecological collapse.

If humanity can get through the next fifty years the advances in computing, energy technologies, biological sciences including DNA modification et cetera will be mutually reinforcing, maybe leading to a second period of future shock.

.

One thing I will not experience, but some of you might, is giving humans the genetic substrate for living as long as bowhead whales. Then we might see some real long-term planning.

Also, “strong” AI is nothing to be afraid of.

According to the “father of Longtermism,” Nick Bostrom, there could be something like 10^58 human beings in the future, although most of them would be living “happy lives” inside vast computer simulations powered by nanotechnological systems…

In other words, most of those happy people would literally be figments of a huge IT system’s imagination. That won’t exactly do the REAL flesh-and-blood people any good. For a scary take on this, see “The Uploaded” by Ferrett Steinmetz.

That we can ever simulate a human mind in a computer with a simulated environment is completely unknown right now.

I’m inclined to say it will never be possible. Our personalities are dictated almost entirely by the physical arrangement of the cells and network-connections in our brains; and expecting any sort of non-organic computer system to host and enable a functioning human personality is pure folly. Also, most of our emotions affect, and are affected by, other organ systems. So what would happen to all those emotions when they’re no longer connected to bodily organs?

And even before that, we have to figure out how to copy/transcribe/port a human personality from its original brain into the computer system in the first place. How would that even be done? It’s not like a human personality, or its accumulated knowledge and experiences, exists in the form of portable software in standardized formats, like a .PDF text file or a .WAV song recording. What is the “machine language” of a human brain? It may not have one, in which case transcribing a human personality into another medium is simply impossible.

Considering the misery of phantom limbs, I really wonder how happy you’ll be if your whole body is a phantom. Given sufficient technological advances, I suppose all of this is solvable, but it’s not obvious. “Happiness” may be very tied to having a physical existence or at least a very faithful simulation of one. At the other extreme, happiness may not even be tied to a high level of cognitive ability (to be clear, this is not an original idea); maybe happiness could be optimized using the minimal circuitry to feel joy in a truly unexamined life, maintained by our AI overlords. Like maintaining any cloud, when a happy box goes bad, just pull it, wipe the board and reboot. Maximum happiness for the win!

It is just very silly to speculate. As Bob Marley said in a rather different context, “if you know what life is worth, you would look for yours on earth.” May I be so bold as to suggest it applies here as well?

So the question is: If you want to do “the most good,” should you focus on helping people who are alive right now or these vast numbers of possible people living in computer simulations in the far future? The answer is, of course, that you should focus on these far-future digital beings.

What needs would such digital beings really have? They’d need their host systems constantly expanded and upgraded, and of course they’d need the electricity kept on forever. The flesh-and-blood beings, however, would need all the material benefits we need today, which is far more, and more complicated, than the needs of cyber-beings. So even if the cyber-beings outnumber the meatspace beings by a zillion to one, the meatspace-beings would still have more and more-pressing needs than the cyber-beings — who, quite frankly, won’t have anything to complain about as long as they can stay in their ideal fantasy worlds.

@16

The people would be conscious beings, not NPCs, p-zombies, or whatever. At least they would have to be for the long-termist argument to make any sense.

You’re in a simulation too. The world you see is reconstructed from the information provided to your brain by the senses.

@19 It’s also about ensuring our civilization advances to the point to run those giant advanced simulations (why would we want to?), which means GIVE BILLIONAIRES AND “RATIONALISTS” MORE MONEY/POWER, and FUCK CLIMATE CHANGE (humanity will totally recover from it, eventually).

@18 The well-off teenagers who consume and believe sci-fi garbage didn’t think the “mind upload” idea over.

To my knowledge, there’s nothing that theoretically disqualifies a computer from running processes equivalent to a human brain. Brains don’t really, fundamentally, do anything that computers can’t. But the distance between here and there is… vast. Unfathomably so. It would require a mind-boggling number of scientific and engineering breakthroughs to even begin to consider making one artificial mind that’s kinda like a human one and at least that much more again to make a perfect copy of an existing one. So anyone confidently saying that this will be done, that it is inevitable, is foolish. Anyone who thinks they can predict the world that exists after all that, doubly so.

And let’s say you do want this. That you believe that accelerating this state is for the greatest good. A large, healthy, safe, educated population will be the best way to achieve these breakthroughs. The larger the better, a global population the better. The more scientists, the more science, the more engineers, the more engineering. Clearly! A few billionaires taking joy-rides to space while humanity dies is not how any of this happens.

I know Less Wrong from a bad Harry Potter fanfic that is inexplicably popular.

Harry the narcissistic self insert discovers he’s a wizard and tries to puzzle out the Laws of Magic instead of asking real experts what the rules of magic are. He quietly abandons that quest because it’s way too hard if not impossible. Along the way, he shamelessly manipulates his “friend” Draco.

The author is a transhumanist terrified of dying, and his self-insert carries it over…into a fantasy world with an afterlife. The reason why wizards keep dying instead of initiating the techno-Rapture (as I call the Singularity idea) is because 1) they’re morons (so Harry can appear really smart in comparison), and 2) they pretend that death is good.

Harry wants to make it so people will never die again. Even though the latter is impossible. He also dismisses a portal to the afterlife in the government building complex as a hoax without investigating it in order to maintain his transhumanist dogma.

Pure speculation, of course, but one possible way to overcome the physical problem would be to replace the brain cells, in situ, one by one, by some form of artificial nanotechnology equivalent. Assuming the full functionality of a brain cell can be replicated, then the end result would be more durable than the original brain that could be kept alive and “plugged in” to a matrix-style virtual environment after the body dies. It would also ensure continuity of self unlike downloading or cloning.

Good question. I’ve written a short story (not published) where a man discovers he’s the result of the hundred-thousandth attempt by an AI to solve the mind-body interface problem after things start going very wrong for him. But I suspect that if we ever have technology to provide continuity of consciousness beyond physical death, we’ll have already figured out how to simulate the normal stimuli provided through the mind-body interface. If nothing else, our brains are very adaptable to new circumstances, and people have gotten used to dealing with all kinds of debilitating physical conditions.

There’s also the fact that our brains are able to shut down that connection while we’re sleeping so we don’t end up throwing ourselves out of bed every time we start dreaming. At least subconsciously we don’t need a full range of motion and feelings for our minds to operate.

(Whoops — I hit Post instead of Preview.)

To finish, as I always say in cases like this, if we fail (which is certainly possible), it won’t be for the want of trying. The financial, selfish, and altruistic desire to improve health will inevitably coincide with efforts to extend life in various ways, regardless of any ethical concerns over the latter. For example, if we discover a way to slow down the aging process by 20%, that would give people access to another decade or more of healthy life, which (in the short term anyway) would significantly reduce the burden on the healthcare system, not to mention make those who control the treatment insanely rich.

Whether it would be the ethical thing to do given the longer term implications would be moot since it would be impossible to deny people the chance of a longer healthy life.

On a completely unrelated note, in the RPG campaign I’ll be running, I used Peter Thiel as the model for my Big Bad Evil Guy.

Why do so many tech bros envision a future for humanity that doesn’t include humans? Simulations of people aren’t people.

Musk lives in a post-scarcity world while the rest of us starve. Isaac Arthur has multiple videos on this and while it’s fun to dream, we are still living in a world of scarcity. Isaac calls it a Santa Claus Machine. If every one of us had a machine that could make whatever we want at no cost, why would we do anything? The mega wealthy can have everything they want on a whim. They are already living that lifestyle, but what about the rest of us? We serve in the trenches to enhance their wealth. We do this so we don’t starve to death.

@28

So what if some reality and conscious people for that reality are generated on a supercomputer someday? We have only learned about our earliest history in recent decades (i.e. we are animals with consciousness generated by the brain), and many still deny that probable truth. The base substrate itself is irrelevant to conscious experience.

Not that I believe that civilization simulations will be run without simplifying things immensely.

Ray,

Don’t the mega wealthy do stuff?

So, either no reason is necessary, or else at least one exists.

Boström: yet another descendant of Swedish immigrants who makes us Swedes embarrassed.

.

OT

The Texas governor’s PR stunt costs the state billions.

And the Democrats are reliably useless at making the facts known.

https://youtu.be/q42AbNVfXhI

While it is often used as a science-fiction vehicle for ethical explorations of what consciousness actually is, long-termism has, to me at least, always smacked of just another version of xian afterlife fantasy bullshit where the present human condition can be mostly ignored in favour of a glorious future.

I’m with PZ on this. Switch off their power.

tacitus @25. So, 86,000,000,000 networked nanobots each replicating all the functions of an individual neuron and collectively operating as a simulated human consciousness…….um, really?

I absolutely love grand SF visions of what Humanity might achieve and evolve into at its best.

Of spreading across the stars and creating techno-marvels and wonders on Earth, throughout our solar system and endless worlds beyond. It’s worth considering such long-term ideas and imagining how we might get there.

We’ll probably never create utopia but we can certainly get ever closer to it if we think boldly and imaginatively and make the effort to try to get there.

We do have a problem as a species in that we think so short-term all the time. Taking a longer view can be beneficial and put things in perspective, and is worth doing in my view.

However, we also need to look at what is happening in the here and now, to do all we can to make the world we’re currently in better and to prioritise the immediate injustices and needs too. We have to look after the environment first, as at least a high priority, as well as trying to make epic SF dreams come true. We cannot ignore the impact on lives and the world we all share and depend upon in aiming for the stars.

Ethically, to put an imagined future dream that may well never come true above present, real living people and lifeforms seems totally unacceptable.

This reminds me so much about the former East Bloc nations; it was always “The future will be bright” as a reply to “things are really bad, here and now”.

The people would be conscious beings, not NPCs, p-zombies, or whatever…

Would we really be able to know that for sure?

To my knowledge, there’s nothing that theoretically disqualifies a computer from running processes equivalent to a human brain.

In order to run processes equivalent to a human brain, a computer would have to be physically configured exactly like a human brain.

Brains don’t really, fundamentally, do anything that computers can’t.

I think that’s begging the question — or at least extremely vague. We sort of assume that to be so, because we’re so used to explaining computer operations by comparing them to organic brains, and explaining organic-brain operations by comparing them to computers. Those explanations can be helpful in the short term, but they’re very simplistic, and they gloss over the fact that those two things don’t behave the same way, even when they’re both doing the same thing or solving the same problem. Seriously, when you watch and remember a movie, or participate in a conversation, it’s not a .MPEG file being downloaded into some blank space in your cerebral cortex. Long-term memory doesn’t work like files being saved to a disk, recalling memories doesn’t work like said files being read, and organic-brain thought doesn’t work like an Intel chip, or even a complex array of such chips.

…one possible way to overcome the physical problem would be to replace the brain cells, in situ, one by one, by some form of artificial nanotechnology equivalent.

But would such nanotech then be able to grow or change, as human brains do (in ways large and small) in the course of day-to-day existence and interaction? Or would that person then become developmentally frozen, with zero or diminished ability to assimilate new knowledge or change their thought-patterns or mindset to adapt to changed circumstances?

Considering the misery of phantom limbs, I really wonder how happy you’ll be if your whole body is a phantom.

Yeah, “phantom stomach,” “phantom lungs” and “phantom heart” would likely be VERY serious mental-health issues for people existing without real organic bodies. Anyone who presumes to design The Matrix would have a LOT of hard work to do on this issue alone if they really want to create a livable virtual universe for real people.

How do you think you know that? You immediately followed this claim with a comment that Kipp Kipp@23 was begging the question. You were right – but so are you @36. No-one knows what computers that are not “physically configured exactly like a human brain” can and cannot do.

I have a strong preference for doing absolutely nothing to promote this concept of maximizing future happiness based on what I think is a rational premise. If it’s such a great idea, then some other sentient life form* will have the same idea. Rank sentient life forms by their competence in carrying out such a plan. We have only a 50% chance of being above the median, 25% of being in the top quartile, 1% of being in the top percentile, etc. If the goal is to convert the galaxy into happy-bots, it had best be done by the most qualified, or else happiness will be suboptimal. So the most likely outcome is that we fill some part of the galaxy with inferior happiness. When the most capable happiness optimizers reach our section, they have a choice of tolerating the suboptimal, or worse, embarking on a campaign of genocide. Wouldn’t it be better if we left it pristine for them? (Or showed some moderation.)

Hence, the idea that working hard for this outcome actually maximizes “common good” is based on a losing gamble. Which is my longwinded way of stating that maybe we ought to work to solve the problems that actually affect us and we can understand instead of chasing total BS. (But it might carry more weight among transhumanists, phrased with techie jargon and probabilities.)

I honestly feel this way. We have to help other human beings out of survival instinct and caring. But in the larger picture we’re no great shakes. Nobody misses humans except other surviving humans (and their dogs, OK). Why would anyone think a scheme like maximizing human (real or virtual) progeny has any value at all?

To echo some other comments, I agree that this is just a techie take on pie-in-the-sky heaven. Worse, it’s not even going to be pie for me. I’ll be long gone when it happens. What good is that?

*I assume a significant number of other technologically capable sentient species in the galaxy. That’s somewhat unfounded, but there should at least be others in the universe (exactly one intelligent species rather than 0 or many seems unlikely, though it could happen with a multiverse, given sufficiently low probability of intelligence).

True, but I think we can still consider it extremely unlikely that a human mind/consciousness/personality could be “copied” or “transcribed” into another medium, and still be the same person, or even function at all. It could, in fact, be even worse than, say, trying to get iPhone software to work on a Windows PC.

PaulBC: I’m all in favor of futurism, or even long-termism, insofar as they work to improve the lives of ordinary humans (as opposed to creating and nurturing some ideal new-and-improved-built-back-better-than-before fantasy humans). Body parts and brain/nerve functions improved by implants, fine; colonizing other planets, fine; more efficient use/reuse of resources with less pollution, fine — but don’t let jumped-up pricks like Elon Musk take things too far from the reality of ordinary present-day human life.

Raging Bee@41 I’m in favor of technological progress and automation whether it improves lives or just because I find it intriguing. I completely reject “long-termism” as a fantasy of balancing present harm with future good. The present harm is still harm. Nothing undoes it.

That doesn’t mean I don’t like to imagine a future with planetary engineering, interstellar missions, and the AI that might actually be suitable for such travel (totally doable over a period of centuries or at most millennia assuming you send initial probes that can bootstrap the target solar system with self-replication infrastructure, after which all other “travel” can be at lightspeed in the form of information).

Where I differ is in the nonsensical proposition that this solves something in an ethical sense. If a time traveler comes to me and tells me I have to kill a puppy due to some butterfly effect or the great Alpha Centauri mission of 3127 will need to be scrubbed, I will tell them to work a little harder analyzing that butterfly effect. The puppy doesn’t care about your interstellar mission.

I guess you didn’t notice the first two words in my comment. Here they are, so you don’t have to scroll back: “Pure speculation…”

Hell, if it’s a crime to speculate about some improbable far distant future, then guilty as charged. It’s fun to speculate, but it seems some people want to drain all the enjoyment out of it. I guess, having just spent three weeks helping my 90-year-old parents move into their new (hellaciously expensive) care facility, I’ll go back to worrying whether I have enough money to keep me comfortable in retirement.

tacitus @ 43.

It is certainly not a crime to speculate. I just find that the numbers needed to emulate the mind boggle it also, be it pure speculation or otherwise. Maybe humanity will figure it out and we will invent a virtual heaven, or if the repugnicans or putinics get global control, a virtual hell a la Surface Detail, but IMO it is not something we should even be seriously thinking about given all the real problems we are facing here and now. I think we have far more pressing issues to figure out, like surviving.