Several weeks ago, I published the “Cat Person or Dog Person?” survey. It’s a silly survey that asks the same question over and over again in different ways, and then you see the results. It’s basically an interactive art piece, and your interpretation of it is as valid as mine.

Now that several weeks have passed, I’m going to explain some of the thought process behind the survey. Think of this as “explaining the joke”: the survey was funny, and this explanation will not be, particularly (cat meme excepted).

I was preparing a talk about The Ace Community Survey, to be presented at the Ace & Aro Conference 2019. I wanted an interactive activity, with people participating in a “live survey” by raising their hands in response to questions. The “cat person vs dog person” dichotomy is an idea I borrowed from another activity I had seen in queer student groups. The moderator writes “cat person” on one side of the blackboard, and “dog person” on the other side, and asks people to classify themselves by writing their names on the board. People write their names on all parts of the board, and it helps people understand false binaries. I thought I’d adapt this into a more survey-oriented activity.

Then I thought it would be cool if in addition to the “live survey”, I published a real survey online. Then, in the talk, I could show images of the online survey questions and results.

The talk was recorded, and this post partially overlaps with what I said in the talk. I’ll let you know when the recording is up.

Now, let’s go through the questions.

Q1: Dichotomy

Question 1: This is just your standard survey question, asking you to fit yourself into exactly one of several given categories, failing to consider that you might not fit into exactly one.

Q2: Reversal

Question 2: Asking the same question but reversing the order of the options can change the response! I think this is more of a concern for questions with many options, because respondents might just read the options until they find one that fits, and then skip reading the rest. At time of writing, the results of question 2 and question 1 differ by about 3%, but I think that’s just people trolling a survey that is obviously trolling them back.

Q3: Optional dichotomy

Question 3: What if the question is optional? This might change the results if, for example, dog people are more likely to skip the question because they don’t like dichotomies. Fun fact: if you accidentally select an option, the software won’t let you deselect it, and the question effectively becomes mandatory. (ETA: This is fixed in newer versions of Google Forms.)

Q4: Checkboxes

Question 4: One way to give respondents more options is to allow them to select any number of answers, instead of exactly one. Why the “Neither” answer? Because if you don’t select any of the answers, it will look like you’ve skipped the question! The funny thing is that it now permits self-contradicting responses, such as checking off both “dog person” and “neither”, which is precisely the answer I gave. I grew up with dogs but I don’t want any pets, so I’d say I’m a dog person and also neither.
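If you wanted to count how many respondents gave this kind of self-contradicting answer, a minimal sketch might look like the one below. The option labels and the sample responses are made up for illustration; they are not taken from the actual survey export.

```python
def is_contradictory(selected):
    """Return True if "Neither" is checked together with any other option."""
    chosen = set(selected)
    return "Neither" in chosen and len(chosen) > 1


# Hypothetical data: each entry is the list of boxes one respondent checked.
responses = [
    ["Cat person"],
    ["Dog person", "Neither"],
    ["Cat person", "Dog person"],
    ["Neither"],
]

# Keep only the responses that combine "Neither" with something else.
flagged = [r for r in responses if is_contradictory(r)]
print(len(flagged), "of", len(responses), "responses are self-contradicting")
```

Whether you treat those responses as errors is a separate question. As my own answer shows, they can be perfectly sincere.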

Q5: More categories

Question 5: Another way to give respondents more options is to simply write out more options. At least some professional researchers have used a “cat person/dog person/both/neither” classification…

…hold up. There are scientists studying this question? Really?

As I was saying, at least some professional researchers have used a “cat person/dog person/both/neither” classification, but I added “unsure” and “it fluctuates” to suggest there are other possible answers. No matter how many options are provided, it won’t be enough.

Q6: Write-in

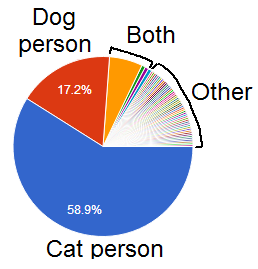

Question 6: If you want to give respondents infinite options, you may allow them to write in their own answer. Survey respondents really like this. But if you look at the automatically-generated pie chart, there are lots of tiny little slices, and that data is basically useless without processing.

One way to process this is by reading all the answers and placing respondents into a smaller number of categories. But if we were going to put people into boxes, why not be upfront about it, and let people choose their boxes directly like in Question 5?

Another thing you might have noticed is that a bunch of people (7%) wrote in “Both” or “both” or “Both!”, but it’s not nearly as many as the 29% of people who selected “Both” in response to question 5.
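The lightest form of processing is to merge answers that differ only in capitalization, whitespace, or punctuation, so that “Both”, “both”, and “Both!” land in the same slice. Here is a rough sketch of that idea. The `normalize` helper and the sample write-ins are my own assumptions for illustration, not the actual survey data.

```python
from collections import Counter
import string


def normalize(answer):
    """Lowercase the answer and strip surrounding whitespace and punctuation."""
    return answer.strip().lower().strip(string.punctuation + " ")


# Hypothetical write-in answers.
write_ins = ["Both", "both", "Both!", " Cat person", "neither.", "Dog person"]

# Count answers after normalization, so trivial variants share one bucket.
counts = Counter(normalize(a) for a in write_ins)
for answer, n in counts.most_common():
    print(answer, n)
```

This only catches trivial variants. Anything more creative still needs a human to read it and pick a box.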

Q7: Pay attention

Question 7: People who don’t work on surveys often worry about people lying. People who work on surveys worry about another thing: people just not paying attention. In the literature this is called “satisficing”: respondents do the bare minimum to get through the survey without reading or thinking carefully. My survey found that about 87% of people were awake. You should all be proud of yourselves, it’s hard to not be asleep!
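In a more serious survey, an attention check like this is typically used to filter out inattentive respondents before analysis. A minimal sketch follows, assuming each response is a dictionary. The `q7` key, the `"I am awake"` passing answer, and the sample data are all hypothetical.

```python
def passed_attention_check(response, key="q7", expected="I am awake"):
    """Return True if the respondent gave the expected attention-check answer."""
    # A skipped question counts as a failure, since .get() returns None.
    return response.get(key) == expected


# Hypothetical responses, one dictionary per respondent.
responses = [
    {"q1": "Cat person", "q7": "I am awake"},
    {"q1": "Dog person", "q7": "I am asleep"},
    {"q1": "Cat person", "q7": "I am awake"},
    {"q1": "Dog person"},  # skipped the attention check
]

attentive = [r for r in responses if passed_attention_check(r)]
pass_rate = len(attentive) / len(responses)
print(f"{pass_rate:.0%} of respondents passed")
```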

Q8: Definitions

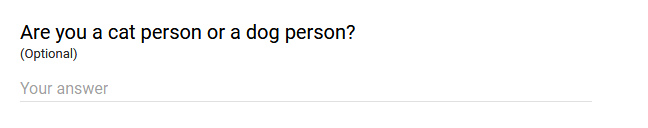

Question 8: Something that survey respondents complain about is when a term is left undefined, and they’re not sure what the survey-writers intended. Often survey-writers don’t have any particular definition in mind, and just want respondents to answer the damn question. Sometimes survey-writers do have something specific in mind, and will explicitly define terms, but this is not without pitfalls. For example, the explicit definition might conflict with the respondent’s definition, leaving respondents confused, or suspicious of the survey’s intentions. Furthermore, some respondents just don’t read that stuff.

Q9: Free response

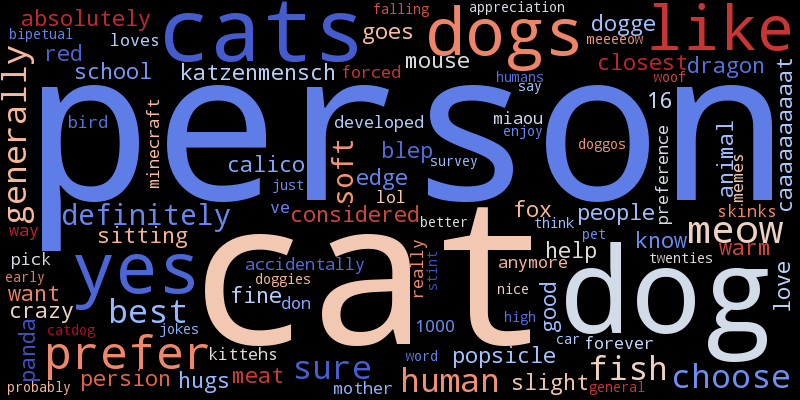

Question 9: Survey respondents love free-response questions. But for large data sets, these questions are hard to use, unless you want a word cloud or something. I did enjoy reading these responses though. And here’s a word cloud.

Q10: Likert

Question 10: When it comes to things like gender, we’re always saying stuff like “It’s not a binary…” *spreads arms dramatically* “…it’s a spectrum!”

“such option” “so spectrum” “very include” “impress” “much broad” “no binary” “wow”

What’s that? Liking cats doesn’t mean not liking dogs? I’m sorry, but the spectrum offers infinite possibilities, and therefore what you say is not possible.

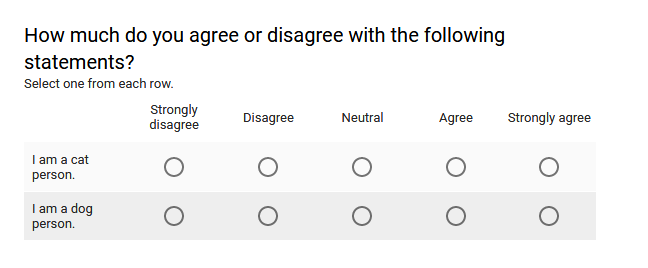

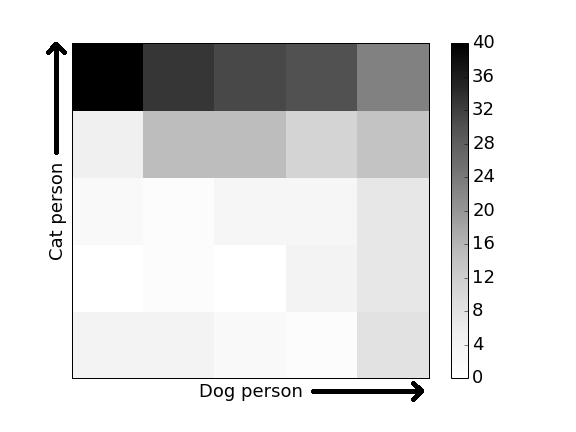

Q11: Multiple Likerts

Question 11: Instead of a single Likert question, you could have two Likert questions. Or more! If I really wanted to understand what it means to be a cat person or dog person, I’d make a bunch of Likerts, examining what pets people prefer and what they think is cute. Likert questions also give you the ability to do fancy schmancy stuff like confirmatory factor analysis or whatever, so it’s what a lot of professionals use for more serious subjects. But for just two Likerts, well, I can make these color charts.
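A color chart of two Likert questions is just a cross-tabulation: count how many respondents gave each combination of answers, then color each cell by its count. The sketch below builds that grid of counts. The 5-point scale and the sample answers are assumptions for illustration, not the real survey data.

```python
from collections import Counter

SCALE = range(1, 6)  # assumed 5-point scale, from 1 (dislike) to 5 (like)


def crosstab(pairs):
    """Return a grid where grid[cat - 1][dog - 1] counts that pair of answers."""
    counts = Counter(pairs)
    return [[counts[(cat, dog)] for dog in SCALE] for cat in SCALE]


# Hypothetical (cat rating, dog rating) pairs, one per respondent.
pairs = [(5, 5), (5, 1), (1, 5), (5, 5), (3, 3)]

grid = crosstab(pairs)
for row in grid:
    print(row)
```

Each row is a cat rating and each column is a dog rating, so the two respondents who love both animals end up in the bottom-right cell, which is exactly the answer a single spectrum has no room for.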

Q12: Feedback

Question 12: As previously mentioned, free-response questions are really hard to use, and the same is true of questions asking for feedback. Sorry to say, in all likelihood people aren’t reading your feedback, or else they’re just not doing anything with it. But I did read all the answers. Some of my favorites:

Why do you ask the same question over and over again and call it a survey?

There were an inadequate number of questions. More data makes everything better.

I suddenly feel like I no longer know myself

Just an FYI to clear some things up: I’m a cat person

what is a survey

What indeed.

woof

I was already laughing my ass off, but i fucking lost it at the doge xD

Thank you for your hard work!

That was me. I selected “cat person” for the first question and “dog person” for the second question. My purpose in doing so wasn’t trolling, it’s just that I like both cats and dogs, thus I can call myself either a cat person or a dog person.

@Andreas Avester,

Yeah, that makes sense. Often these “conflicting” responses actually have a bit of logic to them, which you don’t get to understand until you ask people about them. The trouble is that there isn’t consistency to them: most people who like both cats and dogs don’t respond the way you did.