Computer Science is weird. Most of the papers published in my field look like this:

We describe the architecture of a novel system for precomputing sparse directional occlusion caches. These caches are used for accelerating a fast cinematic lighting pipeline that works in the spherical harmonics domain. The system was used as a primary lighting technology in the movie Avatar, and is able to efficiently handle massive scenes of unprecedented complexity through the use of a flexible, stream-based geometry processing architecture, a novel out-of-core algorithm for creating efficient ray tracing acceleration structures, and a novel out-of-core GPU ray tracing algorithm for the computation of directional occlusion and spherical integrals at arbitrary points.[1]

A speed improvement of two orders of magnitude is pretty sweet, but this paper really isn’t about computers per se; it’s about applying existing concepts in computer graphics in a novel combination to solve a practical problem. Most papers are about the application of computers, not computing itself. You can dig up examples of the latter, say by searching for concurrency theory,[2] but even then you’ll run across a lot of applied work, like articles on sorting algorithms designed for graphics cards.[3]

In sum, computer scientists spend most of their time working in other people’s fields, solving other people’s problems. So you can imagine my joy when I stumbled on people in other fields invoking computer science.

Because the evolved function of a psychological mechanism is computational—to regulate behavior and the body adaptively in response to informational inputs—such a model consists of a description of the functional circuit logic or information processing architecture of a mechanism (Cosmides & Tooby, 1987; Tooby & Cosmides, 1992). Eventually, these models should include the neural, developmental, and genetic bases of these mechanisms, and encompass the designs of other species as well.[4]

Hot diggity! How well does that non-computer-scientist understand the field, though? Let’s put my degree to work.

The second building block of evolutionary psychology was the rise of the computational sciences and the recognition of the true character of mental phenomena. Boole (1848) and Frege (1879) formalized logic in such a way that it became possible to see how logical operations could be carried out mechanically, automatically, and hence through purely physical causation, without the need for an animate interpretive intelligence to carry out the steps. This raised the irresistible theoretical possibility that not only logic but other mental phenomena such as goals and learning also consisted of formal relationships embodied nonvitalistically in physical processes (Weiner, 1948). With the rise of information theory, the development of the first computers, and advances in neuroscience, it became widely understood that mental events consisted of transformations of structured informational relationships embodied as aspects of organized physical systems in the brain. This spreading appreciation constituted the cognitive revolution. The mental world was no longer a mysterious, indefinable realm, but locatable in the physical world in terms of precisely describable, highly organized causal relations.[4]

Yes! I’m right with you here. One of the more remarkable findings of computer science is that every computation or algorithm can be executed on a Turing machine. That includes all of Quantum Field Theory, even those Quant-y bits. While QFT isn’t a complete theory, we’re extremely confident in the subset we need to simulate neural activity. Those simulations have long since been run and matched against real-world data; the current problem is scaling up from faking a million neurons at a time to faking 100 billion, about as many as you have locked in your skull.

Our brains can be perfectly simulated by a computational device, and our brains’ ability to do math proves they are computational. I can quibble a bit on the wording (“precisely describable” suggests we’ve faked those 100 billion), but we’re off to a great start here.

After all, if the human mind consists primarily of a general capacity to learn, then the particulars of the ancestral hunter-gatherer world and our prehuman history as Miocene apes left no interesting imprint on our design. In contrast, if our minds are collections of mechanisms designed to solve the adaptive problems posed by the ancestral world, then hunter-gatherer studies and primatology become indispensable sources of knowledge about modern human nature.[4]

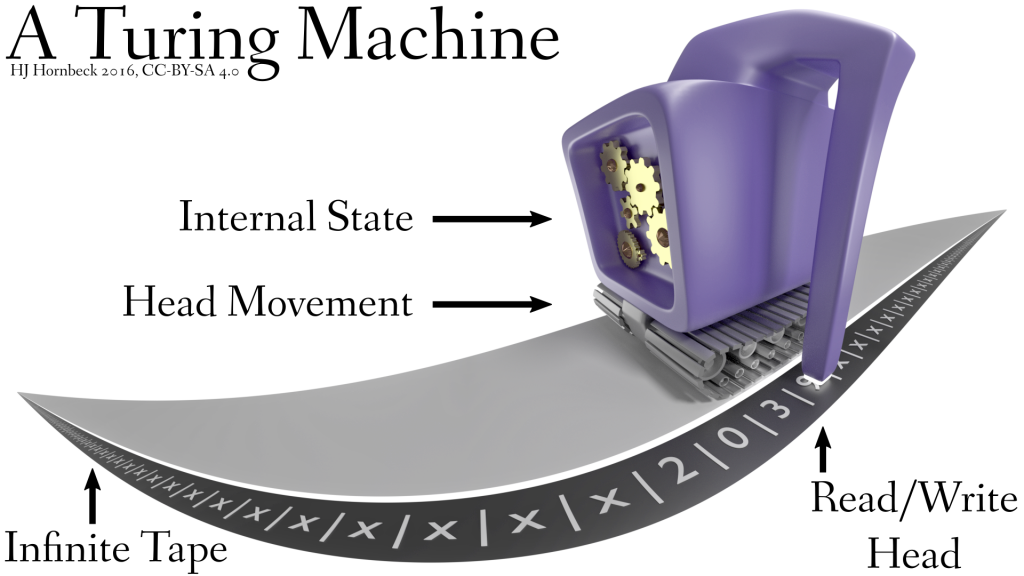

Wait, what happened to the whole “our brains are computers” thing? Look, here’s a diagram of a Turing machine.

A read/write head sits somewhere along an infinite ribbon of tape. It reads what’s under the head, writes back a value to that location, and moves either left or right, all based on that value. How does it know what to do? Sometimes that’s hard-wired into the machine, but more commonly it reads instructions right off the tape. These machines don’t ship with much of anything, just the bare minimum necessary to do every possible computation.
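If you would rather see that as code, here is a minimal sketch of such a machine in Python. Everything in it is my own invention for illustration: the name `run_turing_machine`, the `rules` table format, and the `INCREMENT` example that adds one to a binary number. It is also the hard-wired variety, since the rules live in a dictionary rather than on the tape, and a Python dictionary is only pretending to be an infinite ribbon.

```python
from collections import defaultdict


def run_turing_machine(rules, tape, state="start", halt="halt", max_steps=10_000):
    """Run a one-tape Turing machine and return the final tape as a string.

    rules maps (state, symbol) -> (symbol_to_write, move, next_state),
    where move is -1 (left) or +1 (right). "_" stands for a blank cell.
    """
    # Cells that were never written read as blank, which fakes the infinite tape.
    cells = defaultdict(lambda: "_", enumerate(tape))
    head = 0
    for _ in range(max_steps):
        if state == halt:
            break
        # Read what is under the head, write a value back, then move.
        write, move, state = rules[(state, cells[head])]
        cells[head] = write
        head += move
    if not cells:
        return ""
    lo, hi = min(cells), max(cells)
    return "".join(cells[i] for i in range(lo, hi + 1)).strip("_")


# Hypothetical rule table: add one to a binary number written on the tape.
INCREMENT = {
    ("start", "0"): ("0", +1, "start"),  # scan right to the end of the number
    ("start", "1"): ("1", +1, "start"),
    ("start", "_"): ("_", -1, "carry"),  # fell off the end, turn around
    ("carry", "1"): ("0", -1, "carry"),  # 1 + 1 = 0, keep carrying
    ("carry", "0"): ("1", -1, "halt"),   # 0 + 1 = 1, done
    ("carry", "_"): ("1", -1, "halt"),   # carried past the left edge
}

print(run_turing_machine(INCREMENT, "1011"))  # prints 1100
```

Six table entries and a loop are the whole machine; all of the "knowledge" about binary arithmetic sits in the rule table, not in the hardware.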

This carries over into physical computers as well; when the CPU of your computer boots up, it does the following:

- Read the instruction at memory location 4,294,967,280.

- Execute it.
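Those two steps can be sketched as a toy fetch-and-execute loop. The instruction names (`JMP`, `LOAD`, `ADD`, `HALT`), the single accumulator, and the dictionary standing in for memory are all assumptions of mine for illustration, not any real chip's instruction set. The one detail taken from above is the starting address, which is 0xFFFFFFF0 in hexadecimal, or 16 bytes shy of 4 GiB.

```python
RESET_VECTOR = 4_294_967_280  # 0xFFFFFFF0, i.e. 2**32 - 16


def run(memory, max_steps=1_000):
    """Toy CPU: fetch the instruction at the program counter, execute, repeat.

    memory maps an address to an (opcode, argument) pair. The opcodes are
    made up for this sketch.
    """
    pc = RESET_VECTOR  # step 1: start reading at the reset address
    acc = 0            # a single accumulator register
    for _ in range(max_steps):
        op, arg = memory[pc]  # fetch
        if op == "HALT":      # step 2: execute whatever was fetched
            return acc
        if op == "JMP":
            pc = arg
            continue
        if op == "LOAD":
            acc = arg
        elif op == "ADD":
            acc += arg
        else:
            raise ValueError(f"unknown opcode {op!r} at address {pc}")
        pc += 1
    raise RuntimeError("program did not halt within max_steps")


# Hypothetical memory image: the reset address only holds a jump to the
# real program, which lives at address 0.
program = {
    RESET_VECTOR: ("JMP", 0),
    0: ("LOAD", 2),
    1: ("ADD", 3),
    2: ("HALT", None),
}

print(run(program))  # prints 5
```

Note that the loop itself knows nothing about what the program does; everything interesting comes from whatever happens to be sitting in memory.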

Your CPU does have “programs” of a sort, instructions such as ADD (addition) or MULT (multiply), but removing them doesn’t remove its ability to compute. All of those extras can be duplicated by grouping together simpler operations; they’re only there to make programmers’ lives easier.
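To make that concrete, here is a sketch of multiplication rebuilt from simpler parts, assuming the chip still has addition, bit shifts, and a way to test a bit. The function name `multiply` is mine, and shift-and-add is just one common way to do this, not necessarily what any particular processor does internally.

```python
def multiply(a, b):
    """Multiply two non-negative integers without using a multiply instruction.

    Classic shift-and-add: for every 1 bit in b, add a correspondingly
    shifted copy of a to the running total.
    """
    result = 0
    while b > 0:
        if b & 1:        # lowest bit of b is set
            result += a  # so this shifted copy of a contributes
        a <<= 1          # double a
        b >>= 1          # move on to the next bit of b
    return result


print(multiply(6, 7))  # prints 42
```

It is slower than a dedicated instruction, but it computes exactly the same thing, which is the point.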

There’s no programmer for the human brain, though. Despite what The Matrix told you, no-one can fiddle around with your microcode and add new programs. There is no need for helper instructions. So if human brains are like computers, and computers are blank slates for the most part, we have a decent reason to think humans are blank slates too, infinitely flexible and fungible.

[Part 2]

[1] Pantaleoni, Jacopo, et al. “PantaRay: fast ray-traced occlusion caching of massive scenes.” ACM Transactions on Graphics (TOG). Vol. 29. No. 4. ACM, 2010.

[2] Roscoe, Bill. “The theory and practice of concurrency.” (1998).

[3] Ye, Xiaochun, et al. “High performance comparison-based sorting algorithm on many-core GPUs.” Parallel & Distributed Processing (IPDPS), 2010 IEEE International Symposium on. IEEE, 2010.

[4] Tooby, John, and Leda Cosmides. “Conceptual Foundations of Evolutionary Psychology.” The Handbook of Evolutionary Psychology (2005): 5-67.