You walk down an ordinary drab aisle in a supermarket, but you see grocery items stand up and dance a jig; below them are prices, special offers, and nutrition profiles. In the corner of your eye a counter keeps track of the total bill for items already chosen, taxes included, and flashes red when it reaches a predetermined value. A neighbor speaks to you in Mandarin, but text scrolls past your field of vision, or maybe a tiny speaker whispers the English translation in your ear. Oh, and if the power goes out, no matter. You can see in the dark.

This may sound like the future, but if Google’s new project succeeds, the future starts now.

TPM— It’s unclear just what features the first editions of Google’s computerized glasses, Google Glass, will include when mailed out to a selected crop of software developers in early 2013.

But a recent update to Google’s “Search by Image” service, as well as open statements by Google researchers working on the hi-tech specs, point to a future in which people wearing Google Glass will be able to look at just about anything and receive a wealth of information about the objects and subjects in their view.

We have monkey eyes, recently evolved to make instant life and death decisions about grabbing that tree branch or spotting ripe fruit before your rivals see it, then hastily tweaked by a million years of natural selection to serve us as both predator and prey on the ground. Our eyes, more accurately the fat connections they have to the visual processing centers in the brain, are amazing. Humans are incredibly visual creatures, which is why, in this modern world, eyes could be so much more.

There’s a reason we developed lanterns and radar, there’s a reason we use x-rays and infrared goggles, there’s a reason we lug around paper books and Kindles. It’s all done so that vast amounts of data can be stored, organized, and presented to our brains on cue. What if, instead of peering into radar portholes and flat screens, or groping around in the dark, we could see in those respective wavelengths and have the data arrayed however we wish? The latter is key: the visual cortex has considerable bandwidth, but it’s not infinite. Applications managing what we want to focus on versus what we want in the background or on call, being able to parse data six ways from Sunday, are as important as the hardware.

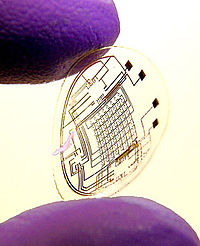

Revolutionary advances in visual software and hardware could be the next gift technology puts under mankind’s Christmas tree. What form it will take is unclear. It could be a pair of glasses, which sounds like a good way to start. Maybe the bionic contact lens shown right will become a viable product. Perhaps the visual prosthesis now being crudely mapped onto the visual cortex in the blind will be vastly improved. Imagine a world where the visually impaired have an edge of some kind or another over people with normal vision!

We’ll see what all the Google Glasses do and don’t over the next year or two. The features in the grafs above are speculation on my part; they’re not meant to be a review of the GG prototype. These particular glasses may turn out to be mere curiosities, or bugged out pieces of shit; they could even prove to be dangerous. But Augmented Vision is coming. And the visual-sensorial enhancement technology Google’s glasses represent could change our world more than cell phones and the Internet combined.

Television will be a boon to culture and learning. Think how it will set us free.

Oh, I was a little late with that prediction. Google Glass? Hmm…

I can see it now.

There’s also a reason why it’s not recommended that you talk on your cellphone whilst driving. It’s because your brain has limits on how much data it can process in a finite amount of time and tends to filter out what seem to be “nonessentials.”

Although I’m sure the Google Glass will revolutionize dating.

“The Americans need the telephone, but we do not. We have plenty of messenger boys.” — Sir William Preece, Chief Engineer, Royal Mail, 1878

“There is no reason why anyone would want a computer in their home.” — Ken Olsen, Chairman, Digital Equipment Corp, 1977

On the other hand, somebody taught monkeys to get a reward by clicking a computer mouse on an icon on the screen. Then they hooked up some electrodes to read the monkey’s brain waves when it moved the mouse. Then they programmed the computer to move the cursor according to the brain waves and disconnected the mouse. The monkey learned on its own to move the cursor without moving the mouse.

I expect they will bypass the visual input and have electronics reading and writing to brain waves. It might be expensive but marketing resistance is futile. You will pay through the nose to be assimilated. It will cost an arm and a leg, maybe more.

The whole idea of animated food makes me faintly ill. The notion that shopping will turn into an Internet-like experience with pop-up advertisements and special offers is repulsive. I don’t even like the fact that my stop at a Shell gas station this afternoon was accompanied by noisy TV monitors atop each pump. Fie! I had avoided this station for a couple of years, but today the car’s gas gauge persuaded me to stop in. I must learn to be more careful!

You want to block dancing cans of coffee? There’s an app for that …

Huh. A lot of the tech stuff I read says it is a solution in search of a problem.

Really, I think these are fine as I/O devices, but this wearing them in public wherever you go thing is just asking for more hurt. People can’t reliably pay attention and lack situational awareness as it is. Hell, as a matter of fact, it hasn’t been an hour since someone almost ran me over, from a stop, at a crosswalk.

When web ads started doing that, I installed an adblocker. I will never ever again use a browser that is unable to block annoying, intrusive and attention-grabbing ads.

It’s quite bad enough to have that crap on the internet, I’m not going to accept it anywhere else.

You want my attention? Then stop trying to steal it.

Hey, evolution is great, it’s just a bit slow for our needs. We’ve evolved to the point where we don’t need to rely on evolution anymore. Technology is how the human race will advance in the foreseeable future.